AI SaaS Idea Validation Checklist

A pre-build checklist for testing the buyer, the AI workflow, and the trust risks before you turn an AI SaaS idea into an MVP.

Quick Answer: AI SaaS Idea Validation Means Business Plus AI Feasibility

To validate an AI SaaS idea, first prove that a reachable buyer has an urgent workflow problem, a current workaround, and willingness to pay. Then prove that AI is necessary, that the needed data is available and lawful to use, that outputs can meet a defined quality threshold, that failures are caught by human review, that token costs fit the pricing, and that buyers trust the workflow enough to test it.

AI SaaS idea validation is not just asking whether an AI product sounds useful. It is checking three layers before you build: commercial demand, AI feasibility, and buyer trust.

A working demo is not enough. The buyer still has to care, pay, share the required data, accept the output quality, and trust the workflow in a real business context. If the model looks impressive but the buyer would still redo the work manually, the idea is not ready for an MVP.

Use this checklist before building an MVP, pitching a paid pilot, or committing to a long AI workflow build. If AI coding tools only help you build the product, and the product itself does not depend on AI output, start with how to validate an app idea before building with AI. If the product itself depends on AI output quality, use the gates below.

What Makes AI SaaS Validation Different From SaaS Validation

Generic SaaS validation still matters. You still need a specific buyer, urgent pain, current alternatives, pricing logic, distribution access, MVP scope, and founder fit. For the broader business layer, use the guide on how to validate a SaaS idea before building and the SaaS idea validation checklist.

AI adds a second set of failure modes: nondeterministic outputs, hallucinations, bad data, unclear data rights, model dependency, latency, inference cost, compliance exposure, security risk, and workflow accountability.

The useful question is not "Can AI generate an answer?" It is: "Can this product reliably improve a paid workflow under real buyer constraints?"

That distinction matters because AI can make weak ideas look stronger. A chatbot can summarize, classify, and draft in a demo. A SaaS product has to keep doing that in the buyer's messy workflow, with their permissions, tools, edge cases, and tolerance for mistakes.

| Validation area | Generic SaaS question | AI SaaS question | Evidence needed before building |
|---|---|---|---|
| Buyer pain | Who has a repeated painful problem? | Who has a repeated workflow where AI could change the outcome? | Recent buyer stories, workflow walkthroughs, complaints, or cost of delay. |
| Current workaround | What do they use today? | What manual, software, outsourcing, or AI workaround already handles part of the job? | Alternative list, buyer workflow notes, competitor review patterns. |
| AI necessity | Is software useful here? | Is AI necessary, or would rules, templates, search, analytics, or CRUD solve it? | Clear reason AI must interpret, classify, generate, reason, or summarize. |
| Data access and rights | What inputs does the product need? | Can the buyer access, connect, and permit use of the data the model needs? | API/export path, owner permission, data-quality sample, rights screen. |
| Output quality threshold | What outcome must the product deliver? | What measurable quality bar makes the AI output usable? | Acceptance criteria, sample inputs, expected outputs, failure categories. |
| Human review | What happens when users need help? | Who reviews uncertain, sensitive, or high-impact AI outputs? | Review rules, escalation path, override behavior, audit trail. |
| Unit economics | Can pricing support acquisition and support? | Can pricing support model cost, latency, infrastructure, support, and retries? | Usage estimate, provider pricing check, gross-margin formula. |
| Trust and compliance | Can buyers adopt this safely? | Can buyers verify, control, and govern the AI workflow? | Source traceability, permissions, data handling clarity, expert review for sensitive uses. |
| Workflow integration | Does the MVP fit the job? | Does AI output land where the buyer decides, approves, routes, or acts? | Tool map, integration path, user acceptance criterion. |
| Differentiation | Why this product instead of alternatives? | Why is this more than a generic model API or "ChatGPT for X"? | Workflow ownership, data connectors, vertical expertise, evaluation loop, distribution edge. |

YC's startup advice still applies: launch, talk to users, and iterate from customer feedback. Paul Graham's "Do Things That Don't Scale" makes the early user-learning point even more directly: founders often need to recruit and serve users manually before scaling a process. For AI SaaS, "manual first" can mean a concierge workflow where a human checks the model output before any automation is trusted.

Editor's note from Malte: AI SaaS ideas need a separate feasibility and trust screen because the product is not only the interface. The output quality, data path, review loop, and cost structure are part of the product.

The AI SaaS Idea Validation Checklist: 15 Gates Before You Build

A weak answer on one gate does not always kill the idea. It should change the next validation task. The mistake is treating vibes, AI-generated confidence, or a polished demo as proof.

Use the table first, then read the short explanations below.

| Gate | Pass evidence | Weak evidence | Next validation task | Stop or narrow signal |
|---|---|---|---|---|
| 1. Specific buyer | One role, workflow, tool stack, and trigger. | Broad segment like "founders" or "sales teams." | Interview only buyers who match the trigger. | Buyer is vague or pain is occasional. |
| 2. Workaround exists | Buyers use software, spreadsheets, BPO, consultants, scripts, Zapier, or manual work. | "No competitors" or "nothing exists." | Map the current workflow and alternatives. | No alternative, workaround, or cost of inaction. |
| 3. SaaS pricing fits | Recurring pain ties to saved time, revenue, cost, risk, or quality. | Free AI novelty or consumer-only usage. | Run pricing conversations or a paid pilot offer. | No budget owner or repeated paid workflow. |
| 4. First 20 buyers are reachable | Specific community, search query, outbound list, marketplace, or partner path. | "We'll run ads later." | Build a list of 20 to 50 qualified prospects. | Requires paid scale immediately. |
| 5. AI is necessary | AI interprets, summarizes, classifies, generates, reasons, or assists judgment. | AI is marketing copy or a chat veneer. | Compare AI vs rules, templates, search, and CRUD. | Normal software would solve it. |
| 6. Output drives action | Output helps decide, approve, route, detect, create, or automate. | "Insights" or "recommendations" with no action. | Define buyer acceptance criteria. | Buyer must redo the work manually. |
| 7. Data is available and permitted | Data source, owner, access path, quality sample, and permission are clear. | Assumes data can be scraped, uploaded, or reused. | Inspect sample data and rights constraints. | Required data cannot be accessed or shared. |
| 8. Quality threshold exists | Measurable bar such as precision, recall, extraction accuracy, review time saved, or buyer acceptance rate. | "It should be pretty accurate." | Define threshold by workflow consequence. | Failure cost is unknown or unacceptable. |
| 9. Evaluation plan exists | Representative samples, expected outputs, edge cases, and failure categories. | A few impressive demos. | Create a small evaluation set before MVP. | You must build first to learn how output is judged. |
| 10. Human review is designed | Review, approval, override, block, and fallback rules are written. | Full automation promised from day one. | Mark which outputs need review. | Mistakes create unacceptable harm or liability. |
| 11. Buyers can trust it | Traceability, confidence flags, permissions, edit history, and review state fit the workflow. | Buyer likes demo but cannot verify it. | Ask what they would need to use it in production. | Buyer cannot control or verify output. |
| 12. Boundaries are clear | Sensitive data, regulated decisions, and excluded MVP actions are named. | "We'll handle compliance later." | Do a primary-source risk screen and expert review if needed. | High-risk use exceeds founder capability. |
| 13. Latency and cost fit | Workflow speed and gross-margin math work under realistic usage. | Ignores retries, long context, tools, or support. | Estimate tokens, requests, retries, latency, and support. | Cost or delay breaks pricing or adoption. |
| 14. More than a wrapper | Owns workflow, data, integrations, evaluation, domain expertise, or distribution. | Generic chat UI or prompt library. | Run a competitor and replacement test. | Buyer can reproduce value in one AI session. |
| 15. MVP tests the riskiest assumption | First version tests buyer urgency, data, quality, trust, pricing, or adoption. | Full AI platform scope. | Cut to one buyer, one workflow, one outcome. | MVP needs many integrations and agents before proof. |

1. A Specific Buyer Has an Urgent Workflow Problem

Define the first buyer by behavior, job, tools, and workflow. "Marketing teams" is too broad. "Lifecycle marketers at B2B SaaS companies with 500 to 5,000 trial signups per month who manually review failed activations every Friday" is testable.

Look for recent behavior: complaints, workarounds, support tickets, manual effort, lost revenue, risk, delays, or budget already spent. If the buyer is fuzzy, use the guide to define the ICP for your SaaS idea before validating AI feasibility.

Stop or narrow if the buyer is vague, the pain is occasional, or the problem is interesting only because AI can touch it.

2. Buyers Already Use a Workaround or Alternative

Alternatives are demand evidence. They can be spreadsheets, BPO, consultants, Zapier workflows, internal scripts, legacy SaaS, outsourced labor, a generic AI tool, or deliberate inaction with a clear cost.

Do not treat "no competition" as good news. It may mean the pain is weak or the buyer has accepted the status quo. Run SaaS competitor analysis before MVP to learn what buyers already trust, hate, and pay for.

Stop or narrow if there is no alternative, no workaround, and no clear cost of doing nothing.

3. The Problem Supports SaaS Pricing

An AI SaaS idea must support recurring revenue, not just curiosity. Tie pricing to saved time, avoided cost, revenue lift, risk reduction, or quality improvement.

Stronger evidence includes current spend, a budget owner, a serious pricing conversation, fake-door checkout, preorder, or paid pilot interest. If pricing is unclear, use the deeper guide to validate SaaS pricing before launch.

Stop or narrow if the idea depends on a low-price consumer audience, has no repeated workflow, or has no buyer who owns budget.

4. You Can Reach the First 20 Buyers Without Paid Scale

The first channel should be specific: a community, search query, direct outreach list, existing audience, marketplace, partner, or niche publication. "Product Hunt" or "paid ads" is not enough.

Before building, write a discovery plan. Who are the first 20 qualified buyers? Where do they gather? What will you ask them? What signal would change the idea?

Stop or narrow if you cannot name where buyers gather or if the idea needs expensive paid acquisition immediately.

5. AI Is Necessary to the Value Proposition

Ask whether rules, templates, search, analytics, automation, or a normal CRUD workflow could solve the problem without AI.

AI is justified when the product must interpret unstructured input, summarize large context, classify messy data, generate buyer-ready drafts, reason over context, or assist judgment at scale. The issue is not that wrappers are always bad. The issue is whether AI meaningfully changes the workflow.

Stop or narrow if AI is only used for marketing copy, a chat veneer, or something that should be a normal form, filter, or report. If your validation is mostly prompt-driven, review why ChatGPT is not a validation process.

6. The Output Has a Clear Decision or Workflow Use

Define what the AI output helps the buyer decide, create, approve, route, detect, or automate. Avoid vague promises like "better insights" unless the insight changes a specific action.

A good acceptance criterion sounds like: "A support lead can approve or edit the suggested tag and route the ticket in under 30 seconds." That is stronger than "The AI recommends the best category."

Stop or narrow if the buyer would still need to redo the work manually because the output is not actionable.

7. The Required Data Is Available, Reliable, and Permitted

Data readiness has three parts.

Data access: where inputs come from, who owns them, and whether APIs, exports, webhooks, or uploads exist.

Data quality: freshness, completeness, duplicates, noisy formatting, missing context, and edge cases.

Data rights: buyer permission, contractual limits, personal data, sensitive data, and third-party restrictions.

This is a risk screen, not legal advice. Stop or narrow if the product depends on data the buyer cannot access, cannot share, or cannot lawfully process for the proposed use.
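The data-quality part of this gate can be screened on a small permissioned sample before any integration work. The sketch below is one possible shape for that check, not a prescribed method: the field names (`id`, `body`, `updated`), the sample rows, and the staleness cutoff are all hypothetical and should be replaced with whatever the buyer's export actually contains.

```python
from datetime import date

# Hypothetical rows from a buyer's permissioned export; field names are assumptions.
SAMPLE = [
    {"id": "t1", "body": "Refund not received", "updated": date(2024, 5, 2)},
    {"id": "t2", "body": "", "updated": date(2024, 5, 3)},
    {"id": "t1", "body": "Refund not received", "updated": date(2024, 5, 2)},
    {"id": "t3", "body": "App crashes on install", "updated": date(2022, 1, 10)},
]


def screen_sample(rows, required=("id", "body"), stale_before=date(2024, 1, 1)):
    """Count basic quality problems in a small data sample."""
    seen = set()
    duplicates = missing = stale = 0
    for row in rows:
        row_id = row.get("id")
        if row_id in seen:
            duplicates += 1  # same record exported more than once
        seen.add(row_id)
        if any(not row.get(field) for field in required):
            missing += 1  # a required field is absent or empty
        if row["updated"] < stale_before:
            stale += 1  # older than the assumed freshness cutoff
    return {"rows": len(rows), "duplicates": duplicates,
            "missing": missing, "stale": stale}


report = screen_sample(SAMPLE)
print(report)
```

Counts like these do not settle data access or data rights. They only show whether the sample is clean enough to be worth an evaluation set.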

8. You Can Define a Minimum Quality Threshold

"Good enough" must be defined before building. The right threshold depends on workflow consequence.

A marketing draft can tolerate editing. A finance, legal, healthcare, compliance, security, or employment workflow may require much stricter review. Useful thresholds can include extraction accuracy, classification precision and recall, acceptable false-positive rate, factual accuracy, review time saved, or buyer acceptance rate.

Stop or narrow if you cannot define a measurable quality bar or the cost of failure.
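To make a threshold such as classification precision and recall concrete, it can help to compute it on a handful of labeled examples and compare it with a bar agreed with the buyer. This is a minimal sketch under stated assumptions: the labels, the example data, and the minimum values (`MIN_PRECISION`, `MIN_RECALL`) are illustrative placeholders, not recommended targets.

```python
def precision_recall(expected, predicted, positive):
    """Precision and recall for one label of interest."""
    pairs = list(zip(expected, predicted))
    tp = sum(1 for e, p in pairs if p == positive and e == positive)
    fp = sum(1 for e, p in pairs if p == positive and e != positive)
    fn = sum(1 for e, p in pairs if p != positive and e == positive)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


# Hypothetical labels: what a reviewer expected vs what the model suggested.
expected = ["urgent", "normal", "urgent", "normal", "urgent", "normal"]
predicted = ["urgent", "normal", "normal", "urgent", "urgent", "normal"]

# Placeholder bar; set it from the workflow consequence, not from this example.
MIN_PRECISION = 0.8
MIN_RECALL = 0.9

precision, recall = precision_recall(expected, predicted, positive="urgent")
meets_bar = precision >= MIN_PRECISION and recall >= MIN_RECALL
print(f"precision={precision:.2f} recall={recall:.2f} meets_bar={meets_bar}")
```

Writing the bar down as two numbers forces the conversation about which mistake is worse in the workflow: a false alarm or a missed urgent item.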

9. You Have an Evaluation Plan Before the MVP

Do not wait until after the MVP to learn how outputs will be judged. Build a small representative evaluation set first.

A lightweight process is enough for early validation: collect sample inputs, create expected outputs or acceptance criteria with buyer or SME review, test multiple prompts or models, record failure modes, and decide whether the result is acceptable. This is not a full production model evaluation harness. It is a pre-build feasibility screen.

NIST's AI Risk Management Framework and Generative AI Profile are useful primary sources for thinking about trustworthiness, evaluation, and AI risk management, but they do not replace product-specific testing.

Stop or narrow if the idea "requires building first" because no one knows what a correct output looks like.
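The lightweight process described in this section (sample inputs, expected outputs, a candidate prompt or model, recorded failure modes) can be sketched in a few lines. Everything here is illustrative: `EVAL_SET` is invented, and `stub_classifier` is a keyword stand-in for whatever prompt or model call is being tested, not a real provider API.

```python
from collections import Counter

# Hypothetical evaluation set: inputs with expected outputs agreed with a buyer or SME.
EVAL_SET = [
    {"input": "I was charged twice this month", "expected": "billing"},
    {"input": "App will not load after update", "expected": "bug"},
    {"input": "How do I export my orders?", "expected": "how-to"},
    {"input": "Cancel my plan and refund me", "expected": "billing"},
]


def stub_classifier(text):
    """Stand-in for a prompt or model call; swap in the candidate under test."""
    lowered = text.lower()
    if "charged" in lowered or "invoice" in lowered:
        return "billing"
    if "load" in lowered or "crash" in lowered:
        return "bug"
    return "how-to"


def run_eval(cases, classify):
    """Return the share of accepted outputs and a tally of failure categories."""
    failures = Counter()
    passed = 0
    for case in cases:
        got = classify(case["input"])
        if got == case["expected"]:
            passed += 1
        else:
            failures[f'{case["expected"]} -> {got}'] += 1
    return passed / len(cases), failures


acceptance_rate, failures = run_eval(EVAL_SET, stub_classifier)
print(acceptance_rate, dict(failures))
```

The failure tally is the more useful output at this stage. It shows which categories need human review or a narrower scope, which a single accuracy number hides.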

10. Failure Modes Have Human Review and Escalation

AI SaaS products need a plan for uncertainty, low-confidence outputs, harmful suggestions, buyer overrides, and fallback behavior.

Write down when a human reviews, approves, edits, or blocks an output. For some workflows, the first MVP should only suggest or draft. It should not auto-send, auto-delete, auto-approve, or make irreversible decisions.

Stop or narrow if the product promises full automation in a workflow where mistakes create unacceptable cost, liability, or customer harm.
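Review rules are easier to discuss with a buyer once they are written as explicit conditions. The sketch below shows one way to express them. The action names, the `IRREVERSIBLE` set, and the `MIN_CONFIDENCE` value are hypothetical policy choices, and the confidence score is assumed to come from whatever estimate the pilot uses.

```python
from dataclasses import dataclass


@dataclass
class Output:
    action: str        # e.g. "suggest_tag" or "send_reply" (hypothetical names)
    confidence: float  # 0.0 to 1.0, however the pilot estimates it
    sensitive: bool    # touches sensitive data or a high-impact decision


# Hypothetical policy values; derive them from the workflow consequence.
IRREVERSIBLE = {"send_reply", "delete_record", "approve_refund"}
MIN_CONFIDENCE = 0.8


def route(output: Output) -> str:
    """Decide how an AI output reaches the user in an early pilot."""
    if output.action in IRREVERSIBLE:
        return "human_approval"  # irreversible actions are never auto-run
    if output.sensitive or output.confidence < MIN_CONFIDENCE:
        return "human_review"    # uncertain or sensitive outputs get a reviewer
    return "suggest"             # shown as an editable, overridable suggestion


print(route(Output("suggest_tag", 0.95, False)))
print(route(Output("send_reply", 0.99, False)))
```

Note that high confidence does not bypass approval for irreversible actions. That ordering mirrors the rule that the first MVP should suggest or draft rather than auto-send or auto-approve.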

11. Buyers Can Trust the Workflow

Trust is not generic "AI transparency." It is what the buyer needs to feel safe using the workflow.

Potential trust signals include source traceability, citations, confidence flags, edit history, review state, permissions, access controls, data handling clarity, and explainable recommendations. For LLM applications, security risks such as prompt injection and insecure output handling are worth screening against the OWASP Top 10 for LLM Applications before you promise production readiness.

Stop or narrow if the buyer likes the demo but says they could not put it into production because they cannot verify, control, or audit the output.

12. Compliance and Security Boundaries Are Clear

Identify whether the idea touches personal data, sensitive business data, regulated decisions, employment, credit, healthcare, legal, finance, children, or security-critical workflows.

Define what the MVP will not do. For example, it may draft a recommendation but not make a final eligibility decision. It may summarize support tickets but not process sensitive health data. It may flag risk but not provide legal advice.

If the product may be used in the EU or in a regulated context, check the official EU AI Act text and the Council of the EU's AI Act explainer for risk categories and obligations. This article is not legal advice; use expert review for regulated or sensitive workflows.

Stop or narrow if the MVP depends on high-risk data or regulated decisions a solo founder cannot responsibly support.

13. Latency and Cost Fit the Buyer Experience

AI output speed must match the workflow. A batch research report can take minutes. An in-product copilot, routing assistant, or support workflow may need seconds.

Do the unit economics before building:

gross margin = price - model/inference cost - infrastructure cost - support cost - payment/platform fees

For model cost, estimate requests per account, input tokens, output tokens, retries, tool calls, embeddings, storage, batch usage, caching, and any premium routing or data residency requirements. Then check current provider pages such as AWS Bedrock pricing, OpenAI API pricing, and Claude API pricing. Do not hard-code old model prices into the business case.

Stop or narrow if expected model cost or latency makes the product impossible to price at a workable margin or to use in the workflow.
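The gross-margin formula in this section can be run as a quick per-account estimate. The sketch below assumes a simple token-based pricing model with separate input and output prices per million tokens. Every number in it is a placeholder, not a real provider price, so check current provider pages before relying on the result.

```python
def monthly_model_cost(requests, input_tokens, output_tokens,
                       input_price_per_mtok, output_price_per_mtok,
                       retry_rate=0.0):
    """Estimated monthly model cost for one account, in the pricing currency."""
    effective_requests = requests * (1 + retry_rate)  # retries add billable calls
    per_request = (input_tokens * input_price_per_mtok
                   + output_tokens * output_price_per_mtok) / 1_000_000
    return effective_requests * per_request


def gross_margin(price, model_cost, infrastructure, support, fees):
    """gross margin = price - model cost - infrastructure - support - fees."""
    return price - model_cost - infrastructure - support - fees


# Placeholder usage and prices for one account per month (all assumptions).
model_cost = monthly_model_cost(
    requests=2_000, input_tokens=3_000, output_tokens=500,
    input_price_per_mtok=2.0, output_price_per_mtok=8.0, retry_rate=0.1,
)
margin = gross_margin(price=99.0, model_cost=model_cost,
                      infrastructure=5.0, support=15.0, fees=4.0)
print(f"model cost: {model_cost:.2f}  margin: {margin:.2f}  "
      f"margin share: {margin / 99.0:.0%}")
```

The estimate leaves out tool calls, embeddings, storage, and caching, which the paragraph above also lists. Add them as extra cost terms once usage per account is clearer.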

14. The Idea Is Differentiated Beyond an API Wrapper

A wrapper can still be a business. It becomes stronger when it owns the workflow, vertical data, distribution, domain expertise, integrations, trust controls, collaboration, saved artifacts, or a buyer-specific evaluation loop.

Weak differentiation sounds like a generic chat UI, a prompt library, or "ChatGPT for [audience]" with no proprietary workflow. Stronger differentiation sounds like "ticket triage for Shopify app teams using helpdesk history, product docs, tags, resolution patterns, review states, and support lead approval."

Stop or narrow if a buyer could reproduce most of the value with ChatGPT, Claude, Gemini, or a competitor in one session.

15. The MVP Boundary Tests the Riskiest Assumption

The first version should not be a full AI platform. It should test the most uncertain high-impact assumption: buyer urgency, data access, output quality, workflow adoption, willingness to pay, or trust.

Concierge, manual, spreadsheet, no-code, and semi-automated pilots are valid if they test the business and AI risk faster than a full product build. This is especially important for founders using AI coding tools. Work through the questions to answer before vibe coding an app, and validate the business before turning the idea into a large build plan.

Stop or narrow if the MVP includes fine-tuning, many integrations, autonomous agents, team collaboration, admin controls, and analytics before proving the core workflow.

How to Score the Checklist: Validate, Narrow, Build a Pilot, or Stop

Do not turn the checklist into a fake precision score. A 73 out of 100 can hide a fatal data-rights problem. A strong demo can hide weak buyer demand. A buyer's enthusiasm can hide unacceptable output risk.

Use the checklist as a decision gate.

| Decision | When to choose it | Next action | What not to do yet |
|---|---|---|---|
| Validate further | Buyer demand is plausible, but evidence is thin or mostly desk research. | Run interviews, workflow walkthroughs, pricing conversations, and data-access checks. | Do not build a product from AI-generated confidence. |
| Narrow | Pain exists, but the buyer, workflow, data source, quality bar, or use case is too broad. | Pick one buyer, one workflow, one data source, and one acceptance criterion. | Do not add personas, features, or integrations. |
| Build a pilot | Buyer pain, data access, quality bar, human review, and willingness to pay are strong enough for a small test. | Run a manual or semi-automated paid pilot with review and explicit stop criteria. | Do not automate irreversible decisions or claim production readiness. |
| Stop | Core assumptions fail, especially buyer urgency, data rights, AI necessity, trust, or unit economics. | Save the artifact, capture what you learned, and compare against another idea. | Do not keep building because the demo looks impressive. |

Technical feasibility without buyer demand is not enough. Buyer interest without reliability and trust is not enough for AI SaaS.
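One way to see why a decision gate behaves differently from a numeric score is to write the decision table as ordered rules. This is only a sketch of that idea: the gate names, the grade labels (`pass`, `weak`, `broad`, `fail`), and the rule order are assumptions made for illustration, not a scoring system defined by this checklist.

```python
# Hypothetical gate names, loosely following the decision table in this section.
FATAL_GATES = {"buyer_urgency", "data_rights", "ai_necessity",
               "trust", "unit_economics"}
PILOT_GATES = {"buyer_urgency", "data_access", "quality_bar",
               "human_review", "willingness_to_pay"}


def decide(gates):
    """Map gate grades ('pass', 'weak', 'broad', 'fail') to a decision."""
    if any(gates.get(name) == "fail" for name in FATAL_GATES):
        return "stop"  # one failed core assumption outweighs every pass
    if any(grade == "broad" for grade in gates.values()):
        return "narrow"
    if all(gates.get(name) == "pass" for name in PILOT_GATES):
        return "build a pilot"
    return "validate further"


# Mostly strong answers, but the data-rights gate fails.
example = {
    "buyer_urgency": "pass", "data_access": "pass", "quality_bar": "pass",
    "human_review": "pass", "willingness_to_pay": "pass",
    "ai_necessity": "pass", "trust": "pass", "unit_economics": "pass",
    "data_rights": "fail",
}
print(decide(example))
```

An averaged score would rate this example highly. The ordered rules return a stop decision because a single failed core assumption is checked first.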

Most early AI SaaS ideas should land in validate further or narrow. That is useful. Narrowing is how you avoid building a broad AI product that cannot earn trust in any one workflow. If you need a separate decision framework, use the guide on when to kill or narrow a startup idea.

Example: Run the Checklist on an AI SaaS Idea

This is a hypothetical example, not a real customer claim, benchmark, or Genhone user result.

Idea: an AI support-ticket triage copilot for small Shopify app teams.

Buyer and workflow: founder-led or support-led Shopify app teams handling enough tickets that routing, tagging, and repeated answers slow product and support work, but not enough volume to justify a large support-ops team.

Current workaround: manual triage in helpdesk software, macros, tags, outsourced support, founder-led support, and ad-hoc Slack messages to product.

AI necessity: classification, summarization, routing, similar-ticket retrieval, and draft response suggestions. AI may be useful because support tickets are unstructured and context-heavy.

| Selected gate | Hypothetical answer | Evidence grade | Next action |
|---|---|---|---|
| Specific buyer | Shopify app teams with 2 to 10 people where founders or support leads still review tickets daily. | Narrow | Exclude large support teams and general ecommerce stores. |
| Workaround exists | Manual tagging, macros, outsourced first-line support, and founder escalation. | Pass | Observe two current triage workflows. |
| SaaS pricing fits | Plausible if it saves support lead time or reduces missed urgent tickets. | Weak | Ask about current helpdesk spend, outsourcing spend, and paid pilot interest. |
| AI necessity | Useful for classification, summarization, routing, and draft response suggestions. | Pass | Compare against deterministic tags and macros. |
| Data access | Needs helpdesk tickets, knowledge base, product docs, tags, historical resolutions, and permissions. | Weak | Ask whether teams can share anonymized exports or connect a sandbox account. |
| Quality threshold | Placeholder threshold: buyer accepts suggested tags and routes for most low-risk tickets after review. | Narrow | Define exact acceptance criteria with a support lead. |
| Human review | AI suggests tags, summaries, and drafts; humans approve customer-facing replies at first. | Pass | Write review and escalation rules for uncertain tickets. |
| Unit economics | Must account for ticket volume, context size, retries, support cost, and provider pricing. | Weak | Estimate usage per account and compare against price bands. |

Decision: likely pilot or narrow.

It becomes a pilot if a support lead has urgent pain, can share sample data with permission, agrees on a quality threshold, and will pay for a manual or semi-automated triage test. It stays narrow if data access is blocked, the buyer will not pay, or the team only wants a nice demo.

The first build should not be live integrations, admin roles, autonomous replies, fine-tuning, analytics, and multi-helpdesk support. A sharper pilot could start with a permissioned export, a small evaluation set, reviewed AI triage suggestions, and a paid manual workflow.

How Genhone Fits Into AI SaaS Idea Validation

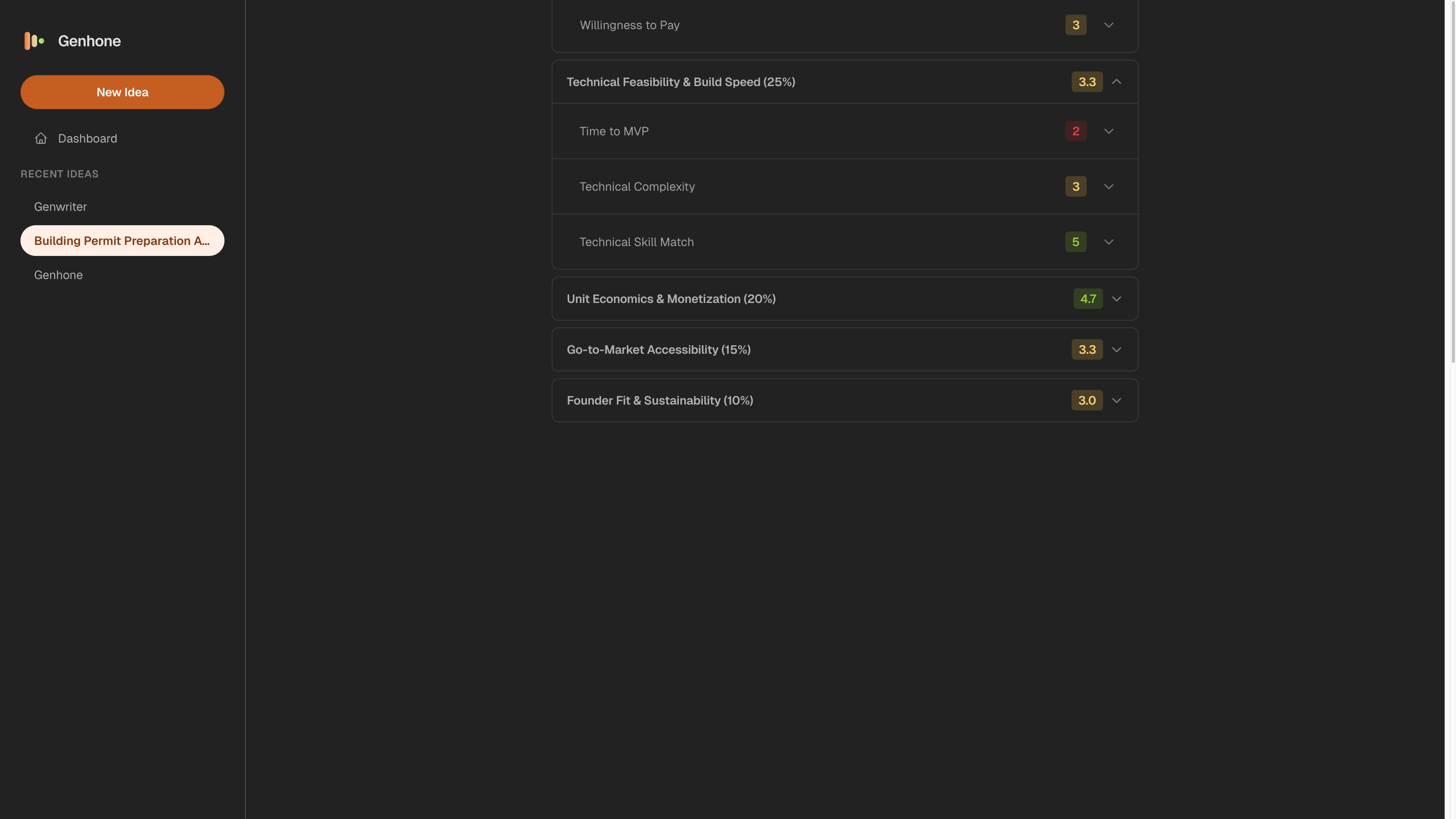

Genhone helps solo founders refine SaaS ideas through 12 guided sections and evaluate them across 18 criteria. It is built for the moment before a founder opens Cursor, Lovable, Bolt, Claude Code, or another AI-assisted build workflow.

For an AI SaaS idea, the relevant refinement sections include problem definition, solution mechanics, customer definition, business model, technical foundation, go-to-market, scope and boundaries, and solo founder execution. The relevant evaluation dimensions include problem validation and demand, technical feasibility and build speed, unit economics and monetization, go-to-market accessibility, and founder fit and sustainability.

Genhone does not prove market demand. It does not run model evaluations, legal reviews, security reviews, or compliance checks. It helps turn assumptions, evidence, risks, and next tests into structured refinement and scored decision artifacts so a founder can compare SaaS ideas before building.

That is useful when the checklist exposes weak or failing gates. AI-specific details can be captured in the technical foundation, scope, and evaluation conversation before the founder commits to a product build.

Use Genhone for structured SaaS idea refinement when you need a saved artifact instead of another chat transcript. For founders using AI coding tools, the related guide on vibe coding startup validation explains why the business decision should come before the build sprint.

FAQ

What is AI SaaS idea validation?

AI SaaS idea validation is the process of checking whether a SaaS idea that depends on AI has a real buyer problem, reachable demand, willingness to pay, necessary data, reliable outputs, acceptable costs, trust controls, and a narrow MVP path before building.

It is different from asking whether the idea sounds impressive. The goal is to decide whether the idea deserves more validation, a narrower pilot, an MVP, or a stop decision.

How is AI SaaS validation different from normal SaaS validation?

Normal SaaS validation checks buyer, pain, alternatives, pricing, distribution, and MVP scope. AI SaaS validation adds AI necessity, data rights, output quality thresholds, evaluation, human review, model cost, latency, trust, and compliance boundaries.

You still need the normal SaaS checks. AI adds the question of whether the product can produce usable, trustworthy outputs under real workflow constraints.

How do I know if my AI SaaS idea is worth building?

It is worth building only after evidence shows a specific buyer has urgent pain, existing alternatives are inadequate, the buyer will pay, AI is necessary, the needed data can be used, outputs meet a defined quality bar, and the first version can test the riskiest assumption cheaply.

If those signals are mixed, build a manual or semi-automated pilot before building a full MVP.

Should I build a demo before validating an AI SaaS idea?

A lightweight demo can help if buyers need to see the workflow. Do not treat the demo as validation.

Before turning the demo into an MVP, validate the problem, data access, quality threshold, trust requirement, and pricing logic. A demo proves that something can be shown. It does not prove that buyers will trust it, pay for it, or use it repeatedly.

Is an AI wrapper a bad SaaS idea?

Not automatically. A wrapper can become a real SaaS business if it owns a specific workflow, vertical data, integrations, distribution, evaluation loop, compliance controls, or buyer outcome.

A generic chat interface with no workflow advantage is weak. The test is whether the buyer gets durable value they could not easily reproduce with a general-purpose AI tool.

How do I validate AI accuracy before building?

Define the workflow consequence, create a small representative sample of inputs, write expected outputs or acceptance criteria, test prompts or models, record failure modes, and decide what requires human review.

Do not claim production accuracy from a few impressive demos. The early goal is to learn whether outputs can meet the buyer's minimum quality bar often enough to justify a pilot.

What are the biggest risks in AI SaaS idea validation?

The biggest risks are validating the demo instead of the buyer problem, lacking rights to the required data, failing to define output quality, ignoring human review, underestimating model cost and latency, overclaiming compliance, and building a generic wrapper buyers can replace with existing AI tools.

The checklist is meant to surface those risks before code, pilots, or positioning make the idea harder to change.