SaaS Idea Validation Checklist

A practical checklist for deciding whether to build, narrow, or kill a SaaS idea before writing code.

A SaaS idea validation checklist should confirm one specific buyer, a repeated painful workflow, current alternatives, willingness to pay, access to the first users, a narrow MVP, realistic distribution, founder fit, and clear stop criteria before you build.

Use this SaaS idea validation checklist before you open Cursor, Lovable, Bolt, Claude Code, or another AI-assisted building tool. The goal is to see which assumptions have evidence, which ones need a narrower test, and which ones should make you stop. For the fuller 12-decision framework behind this checklist, read how to validate a SaaS idea before building.

SaaS Idea Validation Checklist: Quick Version

Download the SaaS idea validation checklist worksheet (DOCX).

Use the worksheet to grade each row red/yellow/green, capture evidence notes, and choose the next action. Weak signals should lead to a narrower idea or a focused test, not more features.

| Check | Pass Condition | Weak Signal | Evidence to Collect |
|---|---|---|---|
| 1. First buyer | One role, context, and trigger. | Broad segment such as "startups" or "small businesses." | List of 20 reachable buyers. |
| 2. Painful workflow | Repeated workflow tied to money, time, risk, or revenue. | General annoyance. | Interview notes, community threads, support/review complaints. |
| 3. Urgency trigger | Clear moment that makes the buyer act now. | "It would be nice." | Recent stories of the pain happening. |
| 4. Current alternative | Buyer already uses software, spreadsheets, agencies, scripts, manual work, or deliberate inaction. | No workaround found. | Alternative list and observed workflow. |
| 5. Alternative gap | Repeated complaint with current options. | Competitors are dismissed without evidence. | Review mining and competitor teardown notes. |
| 6. First 20 users | Named channels where the founder can reach qualified buyers directly. | "Ads later" or vague community plan. | Outreach list, community map, founder network list. |
| 7. Willingness to pay | Current spend, paid pilot, preorder, procurement step, or strong value math. | Compliments or likes. | Pricing conversations, paid test, manual offer response. |
| 8. MVP boundary | One buyer, one workflow, one promised outcome. | Platform scope or many personas. | MVP in/out list. |
| 9. Market or timing signal | Search, community, platform, regulation, budget, or competitor momentum supports the wedge. | TAM claim only. | Search data, trend evidence, community activity, competitor movement. |
| 10. Distribution fit | Channel matches how the buyer discovers and buys tools. | Channel chosen because it sounds scalable. | Channel hypothesis and first campaign plan. |
| 11. Founder fit | Access, lived pain, domain credibility, technical edge, or distribution edge. | "I can build it." | Founder advantage statement. |
| 12. Stop criteria | Written conditions that would make the founder stop. | No kill rule. | Kill/narrow/build thresholds. |

How to Use This Checklist Without Fooling Yourself

The checklist is a decision tool, not a formality. Mark an item as passing only when the evidence comes from the target buyer, their current behavior, or a real market signal.

Use three grades:

| Grade | Meaning | Next move |
|---|---|---|
| Green | Real evidence from the target buyer supports the check. | Keep going or build only if the full checklist is strong. |
| Yellow | Some signal exists, but the buyer, evidence, or implication is fuzzy. | Narrow the audience or run one focused test. |
| Red | The answer is speculative, broad, or contradicted by evidence. | Do not build. Fix this assumption or kill the idea. |

Most early ideas should become narrower after this exercise. That gives you clearer interviews, pricing tests, and MVP scope.

Y Combinator's startup advice emphasizes talking to users and learning directly from customers. Paul Graham's "Do Things That Don't Scale" makes the same practical point: learn manually before scaling.

Problem and Buyer Checks

1. Who is the first buyer?

Name one role, one context, and one trigger. "SaaS founders," "small businesses," and "marketers" are too broad because they do not tell you who owns the pain.

A stronger answer is: "Solo Shopify operators with 300-2,000 monthly orders managing retention without a dedicated lifecycle marketer." That buyer is narrow enough to find and interview.

2. What painful workflow repeats?

Repeated workflow pain is stronger evidence than broad problem language. SaaS retention usually depends on a job the buyer has to do again and again.

Ask about the last time the workflow happened. What did the buyer do? Which tools or manual steps were involved? What breaks when it is done badly?

3. What makes the pain urgent now?

Urgency separates "useful" from "worth buying." Look for a trigger such as revenue loss, a missed deadline, churn risk, compliance exposure, a support backlog, or a manual reporting deadline.

If the buyer cannot describe a recent moment when the pain mattered, the idea may still be interesting, but it is not ready for product work.

Alternative and Market Checks

4. What do buyers use today?

Current behavior is stronger than stated interest. Buyers may use existing software, spreadsheets, agencies, internal scripts, manual processes, or deliberate inaction.

Doing nothing is still information. It can mean the pain is weak, the budget owner is unclear, or the current options are not trusted.

5. Why are current alternatives not good enough?

Competitors are demand evidence, not automatic bad news. The useful question is why a specific buyer keeps struggling despite available options.

Look for repeated complaints about complexity, price, missing workflow support, integrations, trust, onboarding, or fit. Review mining on G2, Capterra, Product Hunt, the Shopify App Store, Reddit, and Indie Hackers can reveal patterns, but one angry post is not a market.

6. What market or timing signal supports the idea?

A timing signal explains why this wedge can work now. Useful signals include search demand, community volume, platform shifts, regulation, budget changes, competitor momentum, or new tool behavior.

Do not rely on generic TAM claims. A large market does not prove urgency, budget, or a reason to switch.

Pricing and Distribution Checks

7. What would buyers pay for?

Tie pricing to avoided pain or measurable gain. Stronger signals include a paid pilot, preorder, existing spend, serious procurement step, or a value-based pricing conversation.

Weak signals include compliments, likes, and waitlist signups from people who are not the buyer. If the buyer cannot connect the idea to money, time, risk, or revenue, keep testing.

8. Can you reach the first 20 users?

Before scalable acquisition, prove direct access. List 20 named or reachable buyers and the channel you will use to reach them.

Relevant early channels can include Reddit, X, Indie Hackers, niche Discords, Slack groups, newsletters, founder networks, or direct outbound. The channel matters less than whether qualified buyers are there.

9. Does distribution match buyer behavior?

Search works when buyers actively look for answers. Community and outbound work when the buyer is specific and reachable. Marketplaces work when buyers already shop there.

A channel is not validated because it is popular. It is validated when it matches how this buyer discovers, trusts, and buys tools.

MVP and Founder-Fit Checks

10. Is the MVP narrow enough?

The first MVP should test one buyer, one workflow, and one promised outcome. Write an in-scope and out-of-scope list before you build.

Fast building still wastes time if the scope tests the wrong assumption. Avoid platform scope, multiple personas, dashboards, integrations, and automation layers unless they test the core workflow.

11. Why are you the right founder for this?

Founder fit can come from lived pain, access, domain knowledge, technical edge, distribution edge, or learning speed.

"I can build it" is not founder fit. The question is why you can learn this market, reach this buyer, or solve this workflow faster.

12. What evidence would make you stop?

Write kill criteria before product work. Examples: no repeated pain in five to ten interviews, unreachable buyers, no budget owner, no wedge against alternatives, or an MVP that cannot be narrowed.

Stop criteria protect you from sunk cost. If the stop rule is met, save the artifact and move to a stronger idea.
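Written kill criteria are easier to honor when they are checked mechanically. The sketch below is one way to encode the example rules above, not a prescribed method. The `Evidence` fields, the `stop_reasons` helper, and both thresholds are hypothetical: the five-interview minimum comes from the "five to ten interviews" example, and the 20-buyer minimum borrows the checklist's "20 reachable buyers" target as an assumption.

```python
from dataclasses import dataclass


@dataclass
class Evidence:
    """Hypothetical summary of what validation work has found so far."""
    interviews_done: int
    interviews_with_repeated_pain: int
    reachable_buyers: int
    budget_owner_found: bool
    wedge_found: bool


def stop_reasons(e: Evidence, min_interviews: int = 5,
                 min_reachable: int = 20) -> list[str]:
    """Return the kill rules the evidence has triggered (empty list = keep going).

    Thresholds are illustrative assumptions, not universal rules.
    """
    reasons = []
    # Only judge pain once enough interviews have actually been run.
    if e.interviews_done >= min_interviews and e.interviews_with_repeated_pain == 0:
        reasons.append("no repeated pain in interviews")
    if e.reachable_buyers < min_reachable:
        reasons.append("buyers not reachable")
    if not e.budget_owner_found:
        reasons.append("no budget owner")
    if not e.wedge_found:
        reasons.append("no wedge against alternatives")
    return reasons
```

Run a check like this after the interviews and outreach are done, not before. An empty list means no stop rule has fired; any entry is a reason to stop or narrow before product work.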

What Counts as Good Evidence?

Evidence should match the checklist item. Interviews validate pain. Paid tests validate willingness to pay. Channel tests validate distribution. Review mining validates alternative gaps when complaints repeat across the same buyer type.

Use thresholds as examples, not universal rules.

| Evidence strength | Examples | How to treat it |
|---|---|---|
| Strong | Paid pilot, preorder, repeated buyer interviews, current spend, manual pilot success, repeated review complaints from the same buyer type. | Can support a build or narrow decision. |
| Medium | Qualified waitlist, pricing-page engagement from relevant traffic, community posts, repeated problem language, competitor momentum. | Useful, but usually needs a follow-up test. |
| Weak | Friend feedback, social likes, broad survey interest, generic AI score, unqualified waitlist, TAM claims. | Do not build from this alone. |

The strongest evidence changes your next action. Evidence that only makes you feel better is not strong enough.
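If you track evidence in a worksheet or script, the tiers above can be applied as a simple lookup. This is a minimal sketch: the key names (`paid_pilot`, `tam_claim`, and so on) and the `strongest` helper are hypothetical labels for a subset of the table's examples, and treating unknown evidence as weak is an assumption.

```python
# Tier for each evidence kind, taken from the evidence-strength table.
STRENGTH = {
    "paid_pilot": "strong",
    "preorder": "strong",
    "current_spend": "strong",
    "repeated_buyer_interviews": "strong",
    "qualified_waitlist": "medium",
    "community_posts": "medium",
    "competitor_momentum": "medium",
    "friend_feedback": "weak",
    "social_likes": "weak",
    "unqualified_waitlist": "weak",
    "tam_claim": "weak",
}

RANK = {"weak": 0, "medium": 1, "strong": 2}


def strongest(evidence_kinds):
    """Return the highest tier among the given kinds.

    Assumption: unknown kinds and an empty list both count as weak.
    """
    tiers = [STRENGTH.get(kind, "weak") for kind in evidence_kinds]
    return max(tiers, key=RANK.__getitem__, default="weak")
```

A check whose strongest evidence is still "weak" should not be graded green, which matches the "do not build from this alone" rule in the table.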

Example: Run the Checklist on a SaaS Idea

Example idea:

An AI onboarding assistant for lean Shopify stores that turns first purchases into repeat customers.

The original idea sounds plausible, but it is too broad. Shopify stores vary by category, order volume, marketing maturity, and budget. The biggest risks are willingness to pay and direct access.

| Checklist item | Answer | Grade | Next action |
|---|---|---|---|
| First buyer | Solo Shopify operators with 300-2,000 monthly orders and no dedicated lifecycle marketer. | Yellow | Interview only stores in this range; exclude agencies and larger teams. |
| Painful workflow | Turning first-time buyers into repeat buyers through follow-up offers and lifecycle emails. | Yellow | Ask for the last three retention campaigns and where the work broke. |
| Urgency trigger | Repeat purchase rate drops after a paid acquisition push. | Yellow | Look for recent stories tied to wasted ad spend or weak second-order rate. |
| Current alternative | Klaviyo templates, spreadsheets, freelancer help, or no structured follow-up. | Green | Observe the current workflow and where it fails. |
| Alternative gap | Existing tools are powerful but require strategy the operator lacks. | Yellow | Mine Shopify App Store and community complaints for repeated setup or strategy gaps. |
| First 20 users | Ecommerce founder communities, Shopify Slack groups, and outbound to stores with visible repeat-purchase offers. | Yellow | Build a list of 50 qualified stores before product work. |
| Willingness to pay | Possible if framed around repeat revenue, but not proven. | Red | Offer a paid manual onboarding audit. |
| MVP boundary | One assistant that drafts the first repeat-purchase sequence from store and order context. | Yellow | Exclude full lifecycle automation, analytics, and campaign calendars. |

Verdict: narrow.

The next test is not a product sprint. Run eight to ten interviews with narrowly qualified Shopify operators, then offer a paid manual onboarding audit. If nobody wants the manual outcome, do not build.

Build, Narrow, or Kill: How to Read Your Checklist

Most early ideas should land in narrow. That is productive. It means the pain may be real, but the buyer, wedge, pricing, distribution, or MVP boundary still needs sharper evidence.

| Verdict | Use when | Next action |
|---|---|---|
| Build | Buyer, pain, alternative gap, willingness to pay, first-user access, MVP scope, and founder fit are all green or mostly green. | Build the narrowest version that tests one workflow. |
| Narrow | Pain exists, but buyer, wedge, pricing, distribution, or MVP boundary is yellow. | Tighten the audience or run one focused validation test. |
| Kill | Pain is weak, the buyer is unreachable, alternatives are good enough, or pricing cannot work. | Save the artifact and move to a stronger idea. |

Do not build when pricing or distribution is red. Those two checks decide whether a usable product can become a business.
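The verdict table and the pricing/distribution rule can be sketched as a small function. Treat it as an illustration under stated assumptions rather than a scoring standard: the check names, the `verdict` helper, and the `min_green` threshold for "mostly green" are all hypothetical, ungraded checks are counted as red, and a red pricing or distribution grade is mapped to "narrow" because the code cannot judge whether pricing can never work.

```python
from enum import Enum


class Grade(Enum):
    GREEN = "green"
    YELLOW = "yellow"
    RED = "red"


# Checks the verdict table lists as required for a build decision.
BUILD_CHECKS = (
    "first_buyer",
    "painful_workflow",
    "alternative_gap",
    "willingness_to_pay",
    "first_20_users",
    "mvp_boundary",
    "founder_fit",
)

# A red grade on either of these blocks a build outright.
BLOCKING_CHECKS = ("willingness_to_pay", "distribution_fit")


def verdict(grades: dict[str, Grade], min_green: int = 5) -> str:
    """Return 'build', 'narrow', or 'kill' for a graded checklist.

    Assumptions: missing checks count as red, a red painful-workflow
    grade means kill, and 'mostly green' means at least `min_green`
    build checks are green with none red.
    """
    def grade_of(name: str) -> Grade:
        return grades.get(name, Grade.RED)

    if grade_of("painful_workflow") is Grade.RED:
        return "kill"
    if any(grade_of(name) is Grade.RED for name in BLOCKING_CHECKS):
        return "narrow"
    build_grades = [grade_of(name) for name in BUILD_CHECKS]
    greens = sum(g is Grade.GREEN for g in build_grades)
    if Grade.RED not in build_grades and greens >= min_green:
        return "build"
    return "narrow"
```

Fed the grades from the Shopify example (willingness to pay red, most other rows yellow), this returns "narrow", the same verdict reached by hand.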

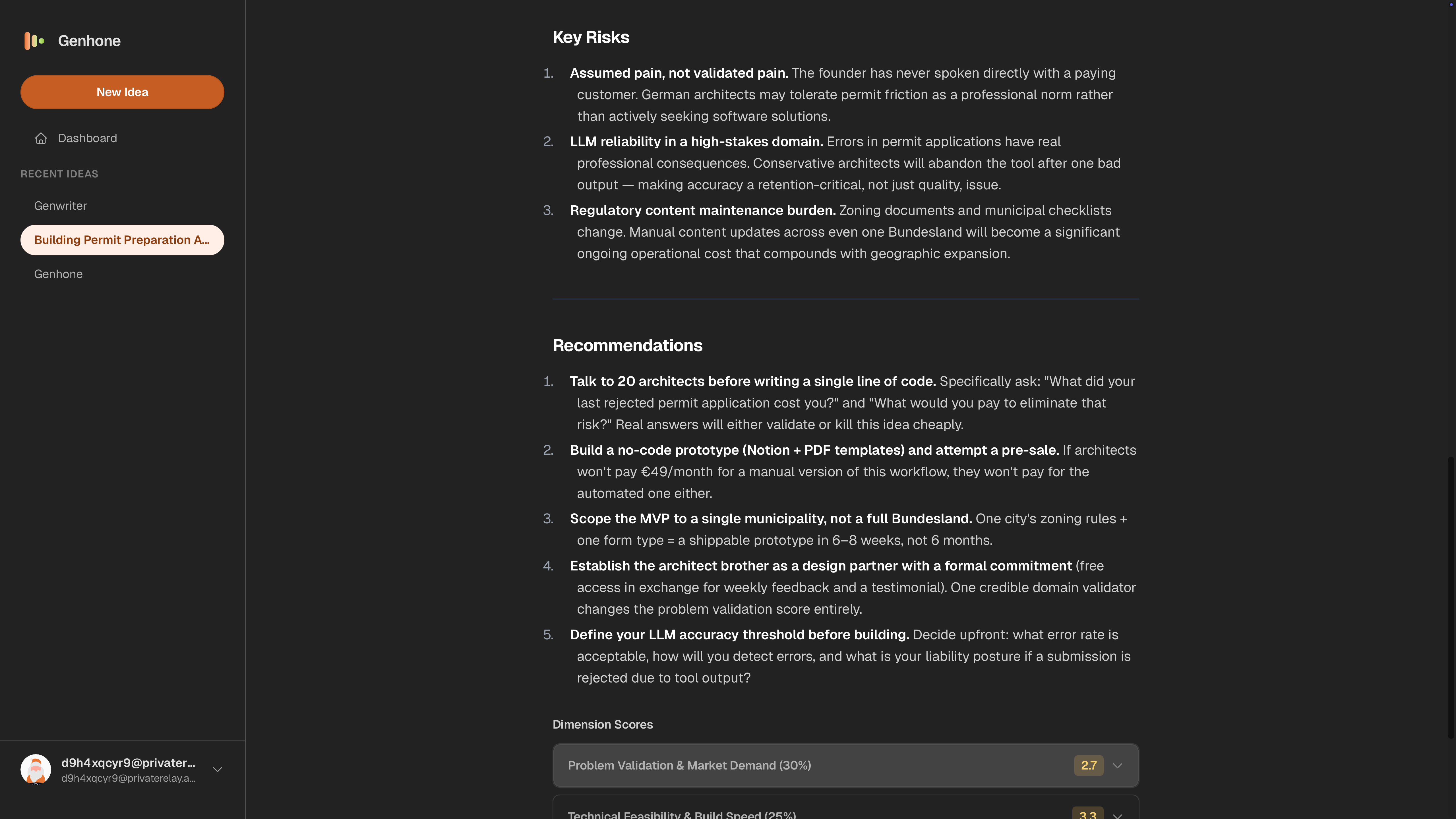

How Genhone Fits Into This Checklist

Genhone turns the checklist into a guided refinement process across 12 sections. It avoids the blank-chat problem by enforcing structure, then evaluates ideas across automated and founder-fit checks.

The output is a saved idea artifact you can compare against other plausible ideas. That matters when only one has a reachable buyer, real pain, and a narrow test path.

Use Genhone when the checklist exposes yellow or red areas and you need a structured refinement workflow before building. It is most useful before opening Cursor, Lovable, Bolt, Claude Code, or another AI-assisted building workflow.

Genhone does not validate an idea by itself. It turns assumptions, evidence, risks, and next tests into scored idea artifacts instead of leaving the thinking scattered across chat threads.

FAQ

What should be in a SaaS idea validation checklist?

It should cover the first buyer, painful workflow, urgency, current alternatives, alternative gaps, first-user access, willingness to pay, MVP boundary, timing signal, distribution fit, founder fit, and stop criteria.

How do I know if my SaaS idea is validated?

Interest alone does not validate an idea. It is stronger when a reachable buyer has repeated pain, weak alternatives, credible payment logic, and an MVP that tests one workflow.

How many interviews do I need to validate a SaaS idea?

Five to ten narrow buyer conversations can expose patterns, but the number matters less than repeated pain, workarounds, and buying logic.

Can I use ChatGPT to validate a SaaS idea?

ChatGPT can structure assumptions, draft questions, and summarize research. It cannot prove buyer urgency, willingness to pay, or distribution access.

Should I build an MVP before completing the checklist?

Not while major assumptions are still red. Use the checklist first to decide whether to build, narrow, manually test, or abandon the MVP.

What is the biggest validation mistake SaaS founders make?

The biggest mistake is treating a plausible product idea as evidence. Test buyer pain, alternatives, pricing, and distribution before product scope expands.