Validate Your App Idea Before Building With AI

A pre-prompt validation brief for founders using Cursor, Lovable, Bolt, v0, Codex, or Claude Code.

To validate an app idea before building with AI, define the first buyer, confirm a repeated painful workflow, identify current alternatives, test willingness to pay, choose a realistic first distribution channel, narrow the MVP to one outcome, and write stop criteria before prompting an AI coding tool. AI can help organize research, but real validation comes from buyer behavior, interviews, competitor evidence, and pricing signals.

After shipping multiple apps and using LLM-powered development workflows, we see a clear pattern: AI makes it easier to build the first version, but it also makes it easier to skip the hard buyer questions.

The useful output is not a prettier prompt. It is a pre-prompt validation brief: a short business-context artifact that tells you whether the idea has earned a build, what the first AI-assisted build should test, and what evidence would make you narrow or stop.

Why AI Makes App Idea Validation More Important

AI coding tools compress the time between idea and working prototype. That is useful for solo founders and technical builders. It also changes the validation problem: when a product can feel real in a weekend, weak assumptions can survive longer because visible progress feels like evidence.

A working app does not validate the buyer, pain, pricing, distribution, or willingness to pay. It validates that you can produce software. Those are different jobs.

That distinction matters before you open Cursor, Lovable, Bolt, v0, Codex, Claude Code, or any similar workflow. A detailed build prompt can still encode the wrong customer, the wrong pain, the wrong MVP, or a pricing assumption nobody has tested. The pre-build artifact should exist before the product requirements document (PRD), prompt, user stories, database schema, UI screens, or first generated code.

YC's startup advice still emphasizes launching, talking to users, and iterating based on real customer feedback, not scaling a guess too early. Paul Graham's "Do Things That Don't Scale" makes the same early-stage point from another angle: founders often need to recruit and learn from users manually before systems can scale.

For the broader SaaS version of this framework, see our guide to validating a SaaS idea before building.

What a Pre-Prompt Validation Brief Is

A pre-prompt validation brief is the business-context document you complete before asking an AI coding tool to generate an app. It captures the buyer, painful workflow, current workaround, pricing logic, distribution path, MVP boundary, and stop criteria so the first build prompt tests a real assumption instead of a vague product idea.

It is not a PRD. A validation brief answers whether the idea deserves a build and what the first build should test. A PRD answers what should be built after the idea is sharp enough.

That order matters. If you generate user stories, implementation tasks, database tables, and screen states before you understand the buyer and pain, the output can look professional while the business remains fragile. AI can help draft and refine the validation brief, but the founder must bring evidence from interviews, current workflows, competitor reviews, pricing conversations, manual pilots, or real distribution tests.

Use the distinction this way:

| Artifact | Best used for | Wrong use |
|---|---|---|
| Raw idea | Capturing initial excitement | Treating it as build-ready |
| Pre-prompt validation brief | Deciding whether and what to build | Pretending uncertainty is gone |
| PRD | Specifying the narrow validated version | Covering up weak buyer or pain assumptions |
| AI build prompt | Generating or modifying implementation | Asking AI to build a product nobody has validated |

The brief should be plain enough to fit on one page. If it gets long, that usually means the idea is still too broad or the evidence is not separated from speculation.

The 7 Questions to Answer Before You Build With AI

Use these seven fields as the core of the pre-prompt validation brief. The goal is not to remove all risk. The goal is to make the riskiest assumption visible before the build sprint starts.

| Brief field | Question to answer | Weak answer | Stronger answer | Evidence to collect before coding |
|---|---|---|---|---|
| First buyer | Who has the pain and when do they feel it? | "Consumers" or "founders" | A role, context, and trigger moment | 5-10 narrow interviews, community examples, named reachable users |
| Repeated painful workflow | What workflow breaks often enough to matter? | "This would be useful" | A repeated task tied to time, money, risk, or revenue | Last-time stories, screenshots, current workflow notes |
| Current workaround | What do they use today? | "Nothing exists" | Spreadsheet, manual work, tool stack, agency, script, or deliberate inaction | Competitor reviews, workflow walkthroughs, forum threads |
| Willingness to pay | Why would someone pay instead of just try it? | "They said they like it" | Existing spend, time cost, paid pilot, preorder, or budget owner | Pricing conversations, current spend proof, manual service test |
| First distribution path | Where will the first 20 users come from? | "Launch on Product Hunt" | A reachable list, community, search intent, outbound segment, or founder network | List of targets, community rules, outreach responses |
| MVP boundary | What is the smallest build that tests the riskiest assumption? | "Dashboard plus automations plus AI chat" | One buyer, one workflow, one outcome | Manual prototype, concierge test, mock flow, narrowed feature list |
| Stop criteria | What evidence would stop or narrow the build? | No explicit rule | Written invalidation thresholds | Interview patterns, no budget owner, no reachable channel, no repeated pain |
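
One way to keep the seven fields honest is to store the brief as a small structured record and list which fields still lack an answer or evidence. The sketch below is a minimal illustration in Python; `ValidationBrief`, `BriefField`, and `missing_evidence` are hypothetical names chosen for this example, not part of any tool named in this article.

```python
from dataclasses import dataclass, field


@dataclass
class BriefField:
    """One row of the brief: the founder's answer plus the evidence behind it."""
    answer: str = ""
    evidence: list[str] = field(default_factory=list)


@dataclass
class ValidationBrief:
    """Hypothetical container mirroring the seven brief fields in the table."""
    first_buyer: BriefField = field(default_factory=BriefField)
    repeated_workflow: BriefField = field(default_factory=BriefField)
    current_workaround: BriefField = field(default_factory=BriefField)
    willingness_to_pay: BriefField = field(default_factory=BriefField)
    distribution_path: BriefField = field(default_factory=BriefField)
    mvp_boundary: BriefField = field(default_factory=BriefField)
    stop_criteria: BriefField = field(default_factory=BriefField)

    def missing_evidence(self) -> list[str]:
        """Return the names of fields with no answer or no collected evidence."""
        return [
            name
            for name, value in vars(self).items()
            if not value.answer.strip() or not value.evidence
        ]


# Example: only the first buyer has an answer backed by evidence so far.
brief = ValidationBrief()
brief.first_buyer = BriefField(
    answer="Freelance designers with 3-10 active retainers",
    evidence=["notes from narrow interviews"],
)
print(brief.missing_evidence())
```

Separating `answer` from `evidence` is the point of the design: a field with a confident answer and an empty evidence list is still speculation.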

1. Who is the first buyer?

A useful AI prompt needs a specific user. Validation needs a specific buyer.

In B2B workflows, the buyer may not be the end user. The person who feels the daily workflow pain might not own the budget, approve software, or care enough to change tools. Your brief should name who has the pain, who can say yes, and what trigger makes the problem urgent.

A good answer has this format: role + context + trigger.

For example: "Solo Shopify operators with 300-2,000 monthly orders who see repeat purchase rate drop after a paid acquisition push."

That is much stronger than "ecommerce founders" because it tells you who to interview, where to look for evidence, what current workflows to inspect, and what a first product should ignore.

2. What painful workflow repeats?

AI can build features quickly, but repeated workflow pain creates retention. A one-time annoyance may produce curiosity. A recurring workflow tied to time, money, risk, or revenue can produce urgency.

Do not ask only, "Would this be useful?" Ask for last-time stories. What happened the last time the workflow broke? What did they do? Which tools were open? Who got involved? What did it cost in time, money, missed revenue, or stress?

Avoid vague pain like "people need productivity." A stronger answer sounds like a workflow: "Every Friday, the founder exports overdue invoices, checks email threads, and writes uncomfortable follow-up messages by hand."

3. What do they use today?

Current alternatives prove that the buyer already spends effort. Those alternatives can be spreadsheets, internal scripts, agencies, manual work, existing software, or deliberate inaction.

"No competitors" is usually not a positive signal. It is an investigation prompt. Maybe the market is overlooked. Maybe the pain is weak. Maybe buyers solve it through a workflow that does not look like software. Maybe the buyer has decided the problem is annoying but not worth paying to fix.

Look for current behavior before you ask AI to build anything. Interview the buyer through the workflow. Read recent competitor reviews. Search niche forums and communities. The best evidence is not that your idea sounds novel. It is that buyers already spend time or money because the status quo hurts.

4. What would make them pay?

Validation is weak if it only proves curiosity. People can like an idea, join a waitlist, or compliment a demo without having budget, urgency, or intent.

Payment logic should connect to avoided pain, revenue gained, risk reduced, or time saved. Stronger signals include current spend, a paid pilot, a preorder, a budget owner who can explain procurement, or a manual service test where someone pays for the outcome before the software exists.

For AI-built apps, the low cost of building makes this easier to skip. Do not skip it. Cheap implementation still costs attention, maintenance, support, positioning work, and opportunity cost. If nobody can explain why the problem is worth paying for, it is too early for a build prompt.

5. How will you reach the first 20 users?

Distribution is part of validation, not a post-launch task.

The first channel should match the buyer's behavior. If the buyer searches during the pain, search content might work. If the buyer trusts peers, communities and referrals may matter. If the buyer is a narrow B2B role, founder-led outbound may be the fastest learning path.

Before coding, name where the first 20 qualified conversations or users will come from. A reachable list, a specific community, a founder network, or a narrow outbound segment is stronger than "launch on Product Hunt." If you cannot name a realistic path to the first 20 users, the idea is not ready for an AI build prompt.

6. What should the AI build first?

The MVP should test the riskiest assumption, not demonstrate every imagined feature.

A narrow prompt usually beats a large PRD while the idea is still uncertain. The useful question is not "What could the app become?" It is "What is the smallest thing we can build or simulate to test whether this buyer cares about this workflow enough to change behavior?"

Sometimes the right answer is not software yet. If the workflow can be tested manually, use a concierge test, spreadsheet, mock flow, or manual prototype first. Build once the brief tells you which assumption the software must test.

7. What would make you stop?

Stop criteria must be written before momentum begins. Once a prototype exists, every weak signal can start to look like a reason to keep going.

Good stop criteria are concrete. Examples:

- No repeated pain appears in eight narrow interviews.

- The user feels the pain, but no buyer owns the budget.

- The current workaround is good enough.

- The founder cannot reach a credible first channel.

- The MVP cannot be narrowed to one buyer, one workflow, and one outcome.

The goal is not pessimism. The goal is avoiding sunk cost. A stopped idea that leaves behind a clear artifact is still useful, because it improves the next idea.
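
Writing the criteria as explicit checks makes them harder to reinterpret once a prototype exists. The following is a minimal sketch, assuming the founder tallies a first evidence pass by hand; the `EvidencePass` fields and the `triggered_stop_criteria` function are hypothetical, and the default of eight interviews simply mirrors the example above.

```python
from dataclasses import dataclass


@dataclass
class EvidencePass:
    """Hypothetical tallies a founder records after a first evidence pass."""
    interviews_done: int
    interviews_with_repeated_pain: int
    budget_owner_found: bool
    workaround_good_enough: bool
    credible_first_channel: bool
    mvp_fits_one_workflow: bool


def triggered_stop_criteria(e: EvidencePass, planned_interviews: int = 8) -> list[str]:
    """Return every written stop criterion that the evidence has triggered."""
    triggered: list[str] = []
    # Only judge "no repeated pain" once the planned interviews are complete.
    if e.interviews_done >= planned_interviews and e.interviews_with_repeated_pain == 0:
        triggered.append("no repeated pain in the planned interviews")
    if not e.budget_owner_found:
        triggered.append("no buyer owns the budget")
    if e.workaround_good_enough:
        triggered.append("current workaround is good enough")
    if not e.credible_first_channel:
        triggered.append("no credible first channel")
    if not e.mvp_fits_one_workflow:
        triggered.append("MVP cannot be narrowed to one buyer, one workflow, one outcome")
    return triggered
```

An empty result does not mean the idea is validated. It only means none of the pre-written reasons to stop has fired yet.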

What AI Can Help With, and What It Cannot Validate

AI is useful for structure, synthesis, ideation, and research organization. It can turn messy notes into clearer assumptions, draft interview questions, summarize competitor reviews, and help you compare possible ideal customer profiles (ICPs).

That does not make the output validation evidence. A chat answer, market-size estimate, or generated score can suggest what to investigate. It cannot prove buyer urgency, willingness to pay, or distribution access without real-world behavior.

| AI can help with | AI cannot prove |
|---|---|
| Drafting interview questions | Buyer urgency |
| Summarizing competitor reviews | Willingness to pay |
| Generating ICP hypotheses | Whether the ICP is reachable |
| Creating landing-page copy variants | Whether traffic is qualified |
| Turning notes into a sharper brief | Whether people will change behavior |
| Drafting a narrow PRD after validation | Whether the idea deserves the build |

Treat AI output as hypotheses until checked. If an AI tool says the market is growing, verify the claim. If it lists competitors, inspect the products yourself. If it suggests pricing, compare that suggestion with buyer conversations and current spend.

The point is not that ChatGPT or other AI tools cannot help. They can. The point is that a structured answer is not the same as evidence. Validation begins when a specific buyer behaves in a way that confirms the pain, budget, access, or workflow.

Bad Prompt vs Validated Prompt

Consider this rough idea:

An AI app that helps freelancers manage late client payments.

A weak AI build prompt might look like this:

Build me a SaaS app for freelancers to track invoices, send reminders, and show analytics. Make it modern and include Stripe.

The prompt is not weak because the app is impossible. It is weak because the business context is missing.

- "Freelancers" is too broad.

- The pain and trigger are unclear.

- Current alternatives are not named.

- Pricing and distribution assumptions are missing.

- The MVP has too many features.

A stronger first prompt after a validation pass should not ask AI to build everything at once. It should give the AI the validation context, ask it to preserve the narrow scope, and turn the brief into the next small build decision.

Use the validation brief below to plan the first AI-assisted MVP. Do not build the full product yet.

Validation brief:

- First buyer: freelance designers with 3-10 active retainers who manually chase late client payments.

- Trigger: an invoice is 7+ days overdue after project delivery or monthly retainer work.

- Current workaround: the freelancer tracks invoice status in a spreadsheet, checks accounting software, and writes awkward reminder emails manually. Accounting software records invoices, but it does not handle tone-safe follow-up.

- Wedge: focus on payment status and client follow-up sequences, not full accounting.

- Candidate MVP boundary: enter or import invoices, detect overdue status, draft three reminder emails in different tones, and track which reminders were sent.

- Distribution assumption: start with freelance designer communities and direct outreach to designers who sell monthly retainer work.

- Stop criteria: do not build if interviews show accounting tools already solve the follow-up pain, or if freelancers avoid paying for this workflow.

Task:

1. Identify the riskiest assumption this first build should test.

2. Propose the smallest product slice that tests that assumption.

3. List what should be mocked, handled manually, or left out.

4. Return a build plan with no more than five implementation steps.

5. Flag any missing information before writing code.

This is still not "fully validated." It is also not the only prompt you should use. It is a better starting prompt because the first validation pass has narrowed the buyer, trigger, workaround, wedge, MVP, channel, and stop criteria before asking AI to plan the build.

From there, break the work into smaller prompts: one to turn the plan into a PRD or issue list, one to implement the first slice, one to review edge cases and tests, and one to tighten copy or onboarding after the prototype exists.

The difference is practical. The bad prompt asks AI to invent product strategy while building software. The stronger prompt gives AI a validation-backed brief and asks it to protect scope before implementation starts.
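
If the brief lives in a structured form, the planning prompt can be assembled from it so no field is silently dropped. This is a sketch under stated assumptions: `render_planning_prompt` is a hypothetical helper, the brief is a plain dictionary of label to text, and the task list is copied from the example prompt above.

```python
# Task list taken from the example planning prompt in this section.
TASKS = [
    "Identify the riskiest assumption this first build should test.",
    "Propose the smallest product slice that tests that assumption.",
    "List what should be mocked, handled manually, or left out.",
    "Return a build plan with no more than five implementation steps.",
    "Flag any missing information before writing code.",
]


def render_planning_prompt(brief: dict[str, str]) -> str:
    """Turn a brief (label -> text) into a scope-preserving planning prompt."""
    # Refuse to render a prompt while any brief field is still blank.
    missing = [label for label, text in brief.items() if not text.strip()]
    if missing:
        raise ValueError(f"Brief fields still empty: {', '.join(missing)}")

    lines = [
        "Use the validation brief below to plan the first AI-assisted MVP. "
        "Do not build the full product yet.",
        "",
        "Validation brief:",
    ]
    lines += [f"- {label}: {text}" for label, text in brief.items()]
    lines += ["", "Task:"]
    lines += [f"{number}. {task}" for number, task in enumerate(TASKS, start=1)]
    return "\n".join(lines)


# Example with two of the fields from the freelancer brief.
print(render_planning_prompt({
    "First buyer": "freelance designers with 3-10 active retainers",
    "Trigger": "an invoice is 7+ days overdue",
}))
```

Raising on an empty field is a deliberate choice: a gap in the brief should block the prompt, not be left for the AI tool to fill in.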

The Build, Narrow, or Stop Decision

The validation brief should end with a decision before the AI build sprint starts. Use this matrix after the first evidence pass:

| Verdict | Use when | Next action before AI coding |
|---|---|---|
| Build | Buyer, pain, workaround, payment logic, first channel, and MVP boundary are credible. | Ask AI to build the narrowest version that tests one workflow. |
| Narrow | Pain seems real, but buyer, pricing, wedge, or distribution is still fuzzy. | Tighten the brief and run one more focused validation test. |
| Stop | Pain is weak, buyer is unreachable, alternatives are good enough, or nobody owns budget. | Save the artifact and move to a stronger idea. |

Most early ideas should land in Narrow. That is not failure. It is progress. Narrowing improves the eventual AI build prompt by removing broad audiences, vague workflows, and untested feature lists.

Write the verdict before implementation starts. Once a prototype exists, the question changes from "Should this be built?" to "How do I make this thing work?" That shift can be useful later. It is dangerous too early.
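
The matrix reduces to a simple precedence rule: any stop signal wins, then any fuzzy field forces a narrow, and only a clean brief earns a build. A minimal sketch of that rule follows; the `Verdict` enum and `decide` function are hypothetical names for illustration, and the two input lists are whatever the founder wrote down after the evidence pass.

```python
from enum import Enum


class Verdict(Enum):
    BUILD = "Build"
    NARROW = "Narrow"
    STOP = "Stop"


def decide(stop_signals: list[str], fuzzy_fields: list[str]) -> Verdict:
    """Apply the matrix in order of precedence: Stop, then Narrow, then Build."""
    if stop_signals:
        # e.g. weak pain, unreachable buyer, good-enough alternatives, no budget owner
        return Verdict.STOP
    if fuzzy_fields:
        # e.g. buyer, pricing, wedge, or distribution still unclear
        return Verdict.NARROW
    return Verdict.BUILD


# Example: pain looks real, but pricing and channel are still unclear.
print(decide(stop_signals=[], fuzzy_fields=["pricing", "distribution"]).value)
```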

How Genhone Fits Before the First AI Build Prompt

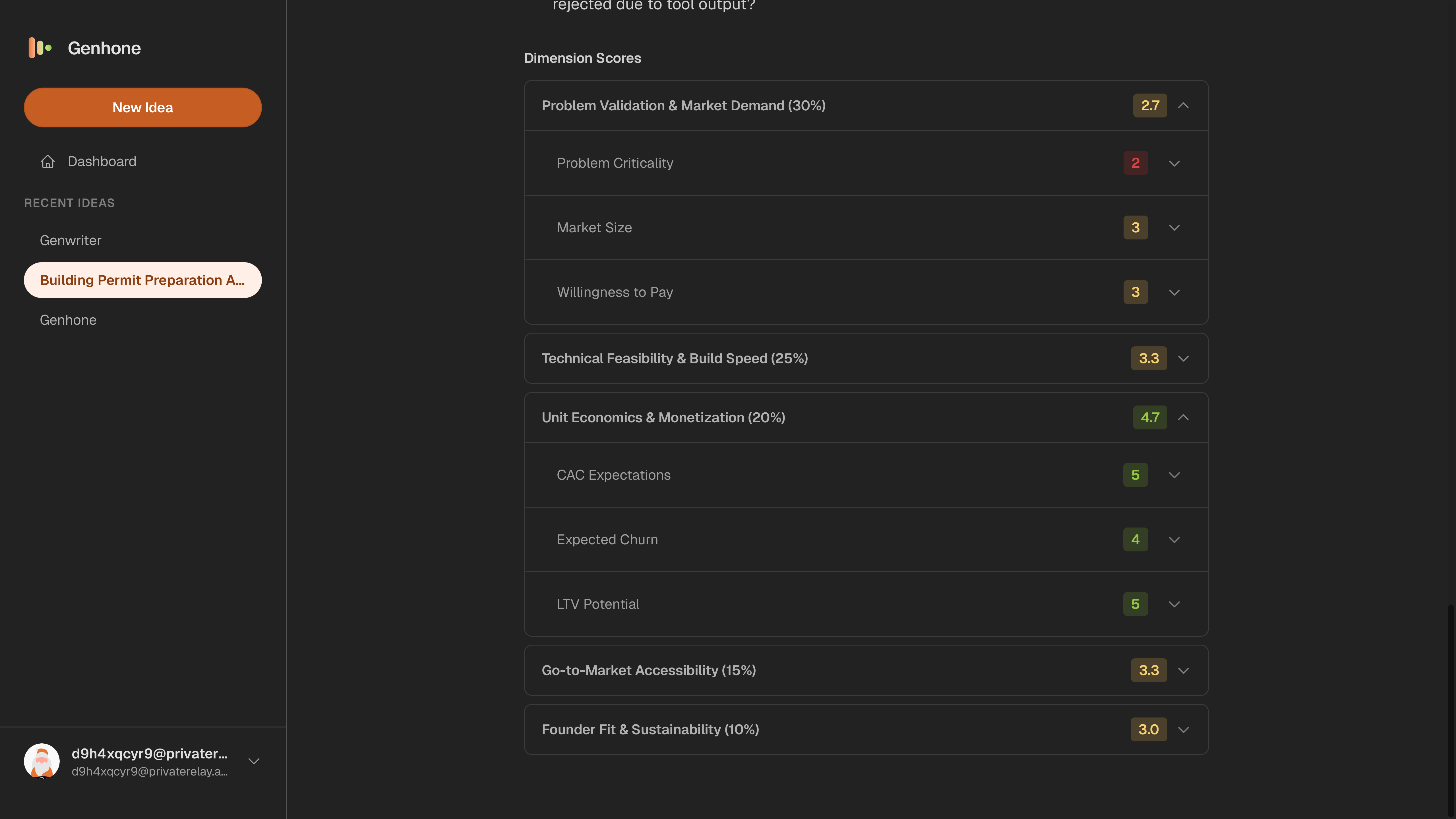

Genhone helps solo founders turn rough ideas into structured refinement artifacts before implementation starts. It gives founders a structured idea validation workflow for the decisions that should come before a PRD or AI coding prompt.

Instead of leaving the idea in a blank chat, Genhone guides the founder through refinement, evaluates ideas across automated criteria and founder-fit checks, and saves the artifacts so ideas can be compared, revisited, narrowed, or killed before build time is committed.

That makes Genhone useful before generating a PRD or prompting an AI coding tool. The goal is not to prove an idea will work. The goal is to make the first build prompt less likely to encode weak buyer, pain, pricing, distribution, or scope assumptions.

Turn your rough app idea into a structured validation artifact before the first build sprint.

FAQ

Can AI validate my app idea?

AI can help structure assumptions, summarize research, draft interview questions, and compare alternatives. It cannot prove buyer urgency, willingness to pay, or distribution access without real-world evidence.

Should I build a prototype before validating the app idea?

Build only when a prototype is the cheapest way to test the riskiest assumption. If interviews, review mining, a manual pilot, or a landing-page test can answer the question first, do those before coding.

What should I do before opening Cursor, Lovable, Bolt, v0, Codex, or Claude Code?

Write a pre-prompt validation brief covering the first buyer, painful workflow, current workaround, payment logic, first channel, MVP boundary, and stop criteria.

Is a PRD enough to validate an app idea?

No. A PRD specifies what to build. Validation decides whether the idea and scope deserve a build. A PRD without buyer and pain evidence can make weak assumptions look organized.

How many users should I talk to before building with AI?

Five to ten narrow conversations can reveal useful patterns if the buyer group is specific. If answers vary widely, narrow the audience before building.

What is the biggest mistake founders make when using AI to build apps?

They treat faster implementation as validation. AI can reduce build time, but it does not prove that a reachable buyer has an urgent problem or will pay for the result.