How to Validate a SaaS Idea Before Building

A 12-decision framework for solo founders before they commit engineering time.

To validate a SaaS idea before building, define one specific buyer, confirm a painful recurring problem, identify current alternatives, test willingness to pay, check distribution access, narrow the MVP, and decide what evidence would make you build, narrow, or stop. The goal is not certainty. It is enough evidence to avoid building a product nobody wants.

What SaaS Idea Validation Means Before You Build

SaaS idea validation is the process of turning excitement into evidence before you spend serious engineering time. The question is not "Can I build this?" It is "Does a reachable buyer have a painful, repeated problem that makes this worth buying?"

There are two levels to separate early:

- Problem validation: real people have the pain, experience it often, and already spend time, money, or attention trying to solve it.

- Solution validation: your proposed product is a believable way to solve that pain better than the current workaround.

Before building, problem validation matters most. A clickable prototype or polished landing page can make a weak idea look convincing. A better signal is when the buyer describes the workflow without being prompted, complains about current alternatives, and can explain what the problem costs.

Validation reduces risk. It does not guarantee success. The useful output is a build, narrow, or kill decision you can defend with evidence.

Why Fast Building Makes Validation More Important

AI-assisted coding tools, reusable starter stacks, hosted databases, payments APIs, and component libraries have compressed the time between "idea" and "working product." For technical solo founders, that is useful. It also creates a trap: when building feels cheap, weak assumptions survive longer than they should.

Fast building does not validate the buyer, the pain, the pricing, or the distribution channel. It only proves that implementation is possible.

Y Combinator's startup advice still pairs building with talking to users and learning directly from them. Paul Graham's "Do Things That Don't Scale" makes the same early-stage point: founders often need to recruit and learn from users manually before scaling acquisition. That matters more when software gets easier to ship.

That is why this guide uses a decision framework instead of a generic validation checklist. A checklist can make you feel productive. A decision framework forces you to say which assumptions are strong, which are weak, and what evidence would change your mind.

The 12 Decisions to Make Before Building a SaaS Idea

Use these 12 decisions as the artifact you complete before writing production code. The goal is not to make every row perfect. The goal is to reveal where the idea is strong enough to build, where it needs narrowing, and where it should stop.

| Decision | What Good Looks Like | Weak Signal | Build / Narrow / Kill Implication |
|---|---|---|---|
| 1. First buyer | A specific role in a specific context with a trigger moment. | "Small businesses," "founders," or "marketers." | Build only when one buyer clearly owns the pain; narrow broad audiences. |
| 2. Repeated workflow | A weekly or monthly workflow tied to retention, revenue, risk, or wasted time. | A vague annoyance or one-off task. | Build repeated pain; narrow occasional pain; kill novelty-only ideas. |
| 3. Urgency trigger | A moment that makes the buyer actively seek change. | "They would benefit from it." | Build when urgency is visible; narrow until the trigger is clear. |
| 4. Current alternative | Existing software, spreadsheets, agencies, scripts, manual work, or doing nothing for a reason. | No known workaround. | Build when workarounds are painful; investigate if nobody is trying. |
| 5. Alternative gap | Repeated complaints about price, complexity, missing workflow, integrations, or fit. | "Competitors are old" without proof. | Build a wedge; narrow if the complaint is generic; kill if alternatives are good enough. |
| 6. First 20 users | Named communities, outbound lists, founder network, or niche channels. | "We'll run ads later." | Build when access is plausible; narrow until users are reachable. |
| 7. Willingness to pay | Current spend, time cost, paid pilot, preorder, or serious pricing engagement. | Compliments, likes, or "I'd use this." | Build with payment logic; narrow if value is unclear; kill if nobody owns budget. |
| 8. MVP boundary | One buyer, one painful workflow, one outcome. | Platform, marketplace, or multi-person workflow on day one. | Build narrow; narrow scope aggressively before coding. |
| 9. Market or timing signal | Search demand, community pain, platform shift, regulatory change, or competitor momentum. | TAM slides and broad trend claims. | Build when timing supports the wedge; narrow unsupported trends. |
| 10. Realistic distribution | A channel that matches how the buyer discovers tools. | Channel chosen because it sounds scalable. | Build when channel and buyer match; narrow if distribution is wishful. |
| 11. Founder fit | Access, lived pain, technical edge, distribution edge, or domain credibility. | "I can build it." | Build when you have an unfair learning advantage; narrow around your access. |
| 12. Stop criteria | Written conditions that would make you stop before sunk cost grows. | No kill rule. | Build only with clear invalidation rules; kill when those rules are met. |

1. Who is the first buyer?

Start with one buyer, not a market segment. "Small businesses" is not a buyer. "Founders" is not a buyer. A good answer includes role, context, and trigger, such as: "Solo Shopify operators handling first-purchase retention manually."

The first buyer should be narrow enough that you can find 20 of them and predict where they spend attention.

2. What painful workflow do they repeat?

SaaS retention usually comes from repeated workflow pain, not broad interest. "Customer retention is hard" is too broad. "Every Monday, the operator exports first-purchase customers, segments them manually, and writes follow-up offers in Klaviyo" is a workflow.

3. What trigger makes the pain urgent?

Urgency usually has a trigger: revenue loss, support backlog, compliance risk, manual reporting deadline, churn spike, or a failed campaign. Without a trigger, the buyer may agree the idea is useful and still never act. Ask what happened the last time the problem became painful.

4. What do they use today?

Current behavior is stronger than stated interest. Look for existing software, spreadsheets, agencies, internal scripts, manual work, or deliberate inaction. Doing nothing can still be evidence, but only if you know why: weak pain, no budget, or tools that feel too heavy.

5. Why are current alternatives not good enough?

Competitors are not automatically bad news. They often prove demand. Your job is to identify a repeated gap: price, complexity, missing workflow, weak integrations, poor onboarding, or poor fit for the first buyer.

Review mining helps here. Read recent complaints on places like G2, Capterra, Product Hunt, the Shopify App Store, Reddit, or niche forums. One angry review is noise. A repeated complaint across buyer contexts is a possible wedge.
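The "one review is noise, a repeated complaint is a wedge" rule can be sketched as a small tally. This is a minimal sketch, assuming you have already read the reviews and hand-tagged each one with a complaint theme and a buyer context. The function name, the sample tags, and the `min_contexts` threshold are illustrative assumptions, not a standard.

```python
from collections import defaultdict


def repeated_complaints(tagged_reviews, min_contexts=2):
    """Return complaint themes seen in at least `min_contexts` distinct buyer contexts."""
    contexts_by_theme = defaultdict(set)
    for theme, buyer_context in tagged_reviews:
        contexts_by_theme[theme].add(buyer_context)
    return {
        theme: sorted(contexts)
        for theme, contexts in contexts_by_theme.items()
        if len(contexts) >= min_contexts
    }


# Hypothetical hand-tagged reviews: (complaint theme, buyer context).
sample_reviews = [
    ("setup too complex", "solo store owner"),
    ("setup too complex", "two-person agency"),
    ("too expensive", "solo store owner"),
    ("setup too complex", "solo store owner"),
]

wedges = repeated_complaints(sample_reviews)
print(wedges)
```

Counting distinct buyer contexts rather than raw review volume keeps one loud customer from looking like a pattern. A theme that survives the filter is still only a candidate wedge to check in interviews.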

6. Can you reach the first 20 users?

Before thinking about scalable acquisition, prove you can reach the first 20 users directly. That might mean founder network, targeted outbound, Reddit, X, Indie Hackers, Discord communities, Slack groups, or industry newsletters.

If you cannot name where the first users are, validation is incomplete. A product concept without distribution is still an assumption.

7. What would they pay for?

Willingness to pay should connect to avoided pain or measurable gain. Stronger signals include current spend, time cost, paid pilots, preorders, or repeated pricing-page engagement from qualified traffic. "Sounds useful" is not a pricing signal.
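One way to connect price to avoided pain is a rough time-cost estimate. The sketch below assumes roughly four working weeks per month and uses made-up inputs; the function names and every number are hypothetical, and the result is a conversation starter for pricing interviews, not a pricing model.

```python
def monthly_pain_cost(hours_per_week, hourly_value, weeks_per_month=4):
    """Rough monthly cost of a manual workflow, in the currency of `hourly_value`."""
    return hours_per_week * hourly_value * weeks_per_month


def price_to_pain_ratio(monthly_price, hours_per_week, hourly_value):
    """Share of the monthly pain cost that the proposed price would consume."""
    cost = monthly_pain_cost(hours_per_week, hourly_value)
    if cost <= 0:
        raise ValueError("pain cost must be positive to compare against a price")
    return monthly_price / cost


# Hypothetical inputs: 3 hours per week of manual work, valued at 50 per hour,
# compared against a proposed price of 60 per month.
cost = monthly_pain_cost(hours_per_week=3, hourly_value=50)
ratio = price_to_pain_ratio(monthly_price=60, hours_per_week=3, hourly_value=50)
print(cost)   # 600
print(ratio)  # 0.1
```

If the buyer cannot supply the hours or the hourly value, that gap is itself a signal that the pain has not been costed yet.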

8. Is the MVP narrow enough?

The first MVP should serve one buyer, one painful workflow, and one outcome. Avoid platform scope unless a wider feature is required to test the core workflow. A quick MVP is still too expensive if it tests the wrong scope.

9. What market or timing signal supports the idea?

Look for signals that the pain is becoming more visible now: community discussions, search demand, regulatory or platform changes, new tooling behavior, competitor momentum, or new budget owners. Do not over-rely on TAM claims.

10. What distribution channel is realistic?

Early distribution should match buyer behavior. If the buyer searches when the pain occurs, search content may matter. If the buyer asks peers, communities and founder-led outreach may matter. Good validation includes a way to reach people, not only a product concept.

11. Why are you the right founder for this?

Founder-market fit is practical. It can come from lived pain, unusual access, technical advantage, distribution advantage, or domain credibility. "I can build it" is not enough.

12. What evidence would make you stop?

Write kill criteria before you invest heavily. Examples: five interviews produce no repeated pain, the buyer is not reachable, nobody owns budget, the current workaround is good enough, or the MVP cannot be narrowed to one workflow. Kill criteria protect you from negotiating with your own sunk cost.
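Writing the criteria down as explicit checks makes them harder to renegotiate later. The following is a minimal sketch that encodes the example criteria above; the `Evidence` fields, the `min_interviews` default of five, and the wording of each message are assumptions to adapt to your own rules.

```python
from dataclasses import dataclass


@dataclass
class Evidence:
    interviews_done: int
    interviews_with_repeated_pain: int
    buyer_reachable: bool
    budget_owner_identified: bool
    workaround_good_enough: bool
    mvp_fits_one_workflow: bool


def triggered_kill_criteria(e, min_interviews=5):
    """Return the list of pre-written stop rules that the evidence has met."""
    triggered = []
    if e.interviews_done >= min_interviews and e.interviews_with_repeated_pain == 0:
        triggered.append("interviews produced no repeated pain")
    if not e.buyer_reachable:
        triggered.append("buyer is not reachable")
    if not e.budget_owner_identified:
        triggered.append("nobody owns budget")
    if e.workaround_good_enough:
        triggered.append("current workaround is good enough")
    if not e.mvp_fits_one_workflow:
        triggered.append("MVP cannot be narrowed to one workflow")
    return triggered


# Hypothetical evidence after a first validation pass.
evidence = Evidence(
    interviews_done=5,
    interviews_with_repeated_pain=0,
    buyer_reachable=True,
    budget_owner_identified=False,
    workaround_good_enough=False,
    mvp_fits_one_workflow=True,
)
print(triggered_kill_criteria(evidence))
```

An empty list does not mean "build". It only means none of the stop rules you wrote in advance has fired yet.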

A Simple SaaS Validation Workflow

Turn the 12 decisions into a short validation sequence:

    flowchart LR
        A[Idea] --> B[12 decisions]
        B --> C[Evidence gaps]
        C --> D[Interviews / research / pricing test]
        D --> E[Build / narrow / kill]

Start by writing the assumptions behind the idea. Then score the weakest decisions honestly. If the buyer is fuzzy, do not run a pricing test yet. If the buyer is clear but willingness to pay is weak, do not spend a week polishing a prototype. Run the test that reduces the biggest uncertainty.

Useful tests include problem interviews, review mining, community research, landing-page message tests, pricing-page clicks, preorders, paid pilots, and manual pilots. Manual pilots are especially useful because they can prove the outcome before you automate the workflow. This is the same logic behind early unscalable work: learn directly before you build systems around weak assumptions.
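The "run the test that reduces the biggest uncertainty" rule can be sketched as a lookup over self-assigned confidence scores. The 1-to-5 scale, the decision keys, and the mapping from decision to test are all assumptions made for illustration; only the test names come from the list above.

```python
# Listed in framework order. On a tie, the earlier decision wins,
# so a fuzzy buyer is resolved before a pricing test is run.
SUGGESTED_TEST = {
    "first_buyer": "problem interviews",
    "repeated_workflow": "problem interviews",
    "current_alternative": "review mining and community research",
    "first_20_users": "community research",
    "willingness_to_pay": "paid pilot, preorder, or pricing-page test",
    "mvp_boundary": "manual pilot",
}


def next_test(scores):
    """Pick the weakest scored decision and the test suggested for it.

    `scores` maps a decision key to a confidence from 1 (weak) to 5 (strong).
    """
    if not scores:
        raise ValueError("score at least one decision first")
    unknown = set(scores) - set(SUGGESTED_TEST)
    if unknown:
        raise ValueError(f"unknown decisions: {sorted(unknown)}")
    ordered = [d for d in SUGGESTED_TEST if d in scores]
    # min() returns the first minimal item, which preserves framework order on ties.
    weakest = min(ordered, key=lambda d: scores[d])
    return weakest, SUGGESTED_TEST[weakest]


# Hypothetical scores: buyer and pricing are equally weak.
print(next_test({"willingness_to_pay": 2, "first_buyer": 2, "mvp_boundary": 4}))
```

Breaking ties in framework order is a deliberate design choice here: it mirrors the advice not to run a pricing test while the buyer is still fuzzy.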

The output should be a decision artifact: what you believe, what evidence supports it, what remains risky, and whether the next move is build, narrow, or kill.
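If you want the artifact in a form you can save and compare later, a small record type is enough. This is a minimal sketch under assumed names: `DecisionEntry`, `IdeaArtifact`, and their fields are hypothetical, and the sample entries are paraphrased from the Shopify example used in this guide.

```python
import json
from dataclasses import asdict, dataclass, field

VERDICTS = {"build", "narrow", "kill"}


@dataclass
class DecisionEntry:
    decision: str                                  # e.g. "Willingness to pay"
    belief: str                                    # what you currently believe
    evidence: list = field(default_factory=list)   # observations behind the belief
    open_risk: str = ""                            # what remains risky


@dataclass
class IdeaArtifact:
    idea: str
    verdict: str
    entries: list = field(default_factory=list)

    def __post_init__(self):
        if self.verdict not in VERDICTS:
            raise ValueError(f"verdict must be one of {sorted(VERDICTS)}")

    def unsupported(self):
        """Decisions recorded as beliefs with no evidence behind them yet."""
        return [e.decision for e in self.entries if not e.evidence]

    def to_json(self):
        return json.dumps(asdict(self), indent=2)


artifact = IdeaArtifact(
    idea="AI onboarding assistant for lean Shopify stores",
    verdict="narrow",
    entries=[
        DecisionEntry(
            decision="Current alternative",
            belief="Operators use templates, spreadsheets, or nothing",
            evidence=["community threads describing manual exports"],
        ),
        DecisionEntry(
            decision="Willingness to pay",
            belief="Possible if framed around repeat revenue",
            open_risk="No paid pilot yet",
        ),
    ],
)
print(artifact.unsupported())
```

Listing beliefs that have no evidence attached is the useful part: it shows which rows are still assumptions before you pick the next test.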

What Evidence Is Strong Enough?

Evidence strength depends on the decision you are testing. Treat the numbers in this guide, such as five interviews or 20 first users, as examples, not universal laws.

Strong validation signals:

- People describe the problem without being prompted.

- Multiple buyers tell similar stories about the same workflow.

- Buyers already use spreadsheets, agencies, scripts, software, or manual labor to handle the pain.

- Competitor reviews show repeated complaints from the same buyer type.

- A buyer agrees to a paid pilot, preorder, or serious procurement next step.

- A manual pilot produces the desired result without custom software.

- A focused landing page gets engagement from a known, relevant traffic source.

Weak validation signals:

- Friends say the idea is interesting.

- A general audience joins a waitlist without clear intent.

- Survey respondents say they "would use" the product.

- ChatGPT gives the idea a high score without real-world evidence.

- A broad trend makes the market sound inevitable.

- You can build the product quickly.

Waitlists are not useless. They are just easy to overrate. A waitlist from targeted founder outreach or high-intent search traffic means more than a waitlist from a viral post that attracted curious people outside the buyer group.

Example: How to Validate a SaaS Idea Before Building

Imagine this idea:

An AI onboarding assistant for lean Shopify stores that turns first purchases into repeat customers.

That sounds plausible, but it is still too broad. Shopify stores vary wildly by category, order volume, retention motion, technical maturity, and budget. The validation job is to narrow the buyer and expose the weak assumptions before building.

| Decision area | Example answer | Risk level | Next action |
|---|---|---|---|
| First buyer | Solo Shopify operators with 300-2,000 monthly orders who manage retention without a full CRM owner. | Medium | Interview operators in this order range; exclude agencies and larger teams. |
| Painful workflow | Exporting first-purchase customers, segmenting them, and writing follow-up offers manually. | Medium | Ask for the last three retention campaigns and where the work broke down. |
| Urgency trigger | Repeat purchase rate drops after a paid acquisition push. | Medium | Look for stories tied to wasted ad spend or low second-order rate. |
| Current alternative | Klaviyo templates, spreadsheets, freelancer help, or doing nothing after the first purchase. | Low | Compare where the manual process fails against existing tools. |
| Alternative gap | Existing tools feel powerful but require campaign strategy the solo operator lacks. | Medium | Mine Shopify App Store and community complaints for repeated setup or strategy gaps. |
| First 20 users | Shopify founder communities, ecommerce Slack groups, targeted outbound to stores with visible repeat-purchase offers. | Medium | Build a list of 50 qualified stores before any product work. |
| Willingness to pay | Possible if framed around repeat revenue, but not proven. | High | Offer a paid manual onboarding audit before software. |
| MVP boundary | One assistant that drafts the first repeat-purchase sequence from store and order context. | Medium | Avoid full lifecycle automation, analytics, and campaign calendar scope. |

The verdict is narrow, not build.

The buyer is becoming clearer, and the pain could be real, but willingness to pay and the founder's access to these operators are still unproven. The next test should not be a product sprint. It should be eight to ten interviews with narrowly qualified Shopify operators plus a paid manual pilot: "I'll review your first-purchase follow-up flow and produce three repeat-purchase campaigns for $X."

If nobody wants the manual outcome, the software should not be built. If several operators pay or seriously engage, the first MVP becomes much sharper.

Common Mistakes Founders Make When Validating SaaS Ideas

Validating the solution instead of the problem. Asking "Would you use this?" usually gets politeness. Ask about the last time the workflow happened, what they did, and what it cost.

Calling a broad audience an ICP. "B2B SaaS companies" is not an ICP. A narrow first buyer makes interviews, messaging, pricing, and distribution easier to test.

Treating compliments as willingness to pay. Compliments are cheap. Payment, current spend, a paid pilot, or a real procurement step means more.

Asking ChatGPT for a verdict and calling it validation. AI can structure assumptions, generate interview questions, and summarize research. It cannot prove buyer urgency or willingness to pay.

Building a full MVP before proving the core workflow. If the workflow can be tested manually, test it manually first.

Ignoring founder fit and distribution. A good idea can still be a bad idea for you if you cannot reach buyers or learn the market faster than others.

Failing to define kill criteria. Without stop rules, every weak signal becomes a reason to build one more feature.

When to Build, Narrow, or Kill the Idea

Use this decision matrix after the first validation pass.

| Verdict | Use when | Next action |
|---|---|---|
| Build | Buyer, pain, current alternative, reach, pricing, and MVP boundary are all credible. | Build the narrowest version that tests one workflow. |
| Narrow | Pain exists, but buyer, wedge, pricing, or distribution is still fuzzy. | Tighten the audience or run one more focused validation test. |
| Kill | Pain is weak, buyer is unreachable, alternatives are good enough, or pricing cannot work. | Save the idea artifact and move to a stronger candidate. |

Most early SaaS ideas should land in narrow. That is not failure. It is the point of validation. Narrowing preserves momentum while reducing the odds that you build the wrong thing well.
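The matrix can be read as a small rule: any kill condition wins, build requires every area to be credible, and everything else is narrow. The sketch below is one possible encoding, assuming each area is rated `"credible"`, `"fuzzy"`, or `"failed"`; the rating labels and area keys are hypothetical shorthand for the rows above.

```python
BUILD_AREAS = ("buyer", "pain", "current_alternative", "reach", "pricing", "mvp_boundary")

# Areas where a "failed" rating maps to a kill row in the matrix:
# weak pain, unreachable buyer, good-enough alternatives, unworkable pricing.
KILL_AREAS = ("pain", "reach", "current_alternative", "pricing")


def verdict(ratings):
    """Map per-area ratings to "build", "narrow", or "kill"."""
    missing = [area for area in BUILD_AREAS if area not in ratings]
    if missing:
        raise ValueError(f"missing ratings for: {missing}")
    if any(ratings[area] == "failed" for area in KILL_AREAS):
        return "kill"
    if all(ratings[area] == "credible" for area in BUILD_AREAS):
        return "build"
    return "narrow"


# Hypothetical first pass: everything looks credible except pricing.
first_pass = {
    "buyer": "credible",
    "pain": "credible",
    "current_alternative": "credible",
    "reach": "credible",
    "pricing": "fuzzy",
    "mvp_boundary": "credible",
}
print(verdict(first_pass))  # narrow
```

Because build needs every area to pass, a single fuzzy rating is enough to return narrow, which matches the expectation that most early ideas land there.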

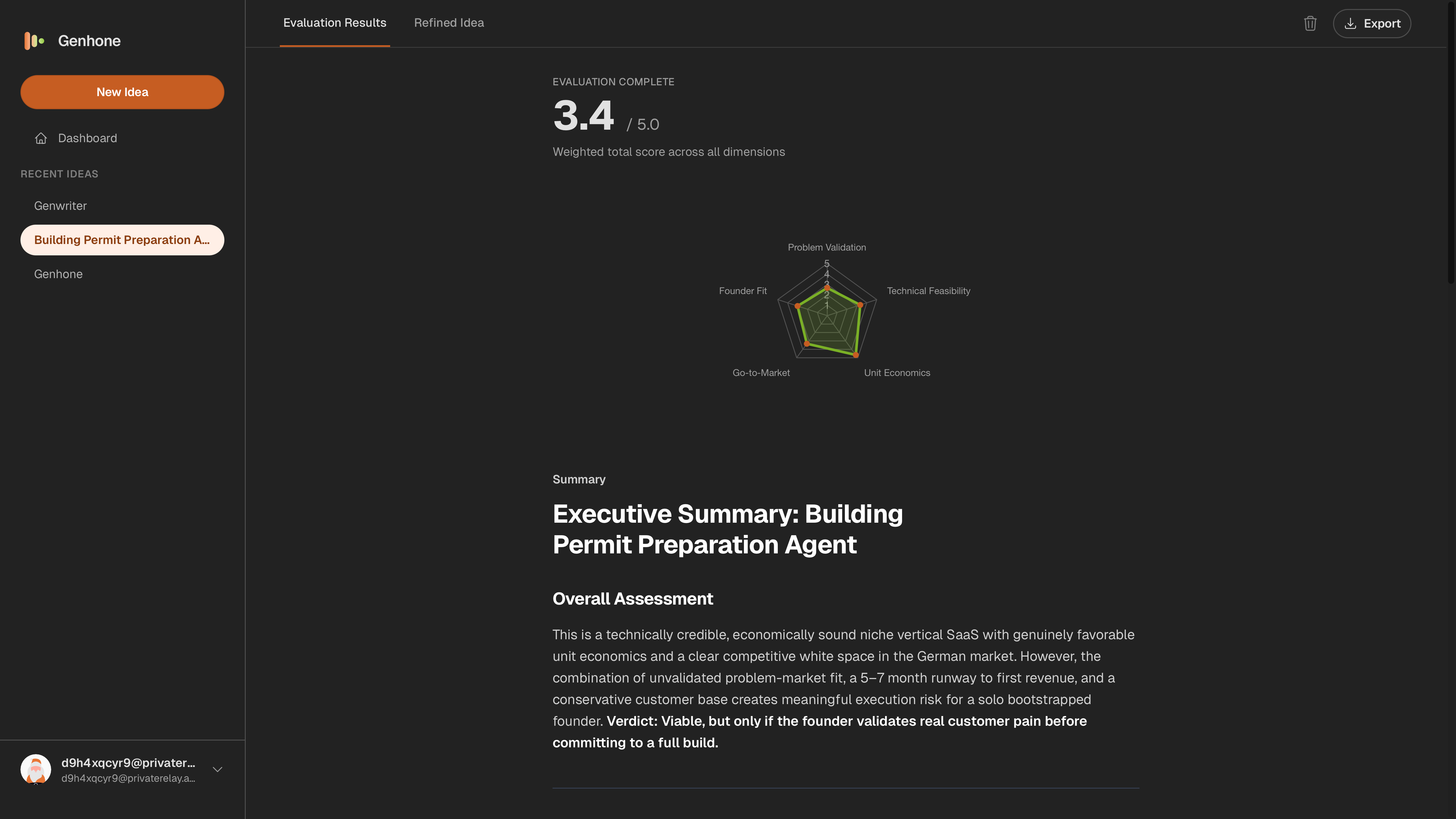

How Genhone Fits Into This Workflow

Genhone gives solo founders a structured refinement workflow for scored idea artifacts before they commit engineering time.

Instead of leaving you in a blank chat, Genhone guides the idea through 12 refinement sections, then evaluates it across automated criteria and founder-fit checks. The output is not just a score. It is a saved artifact you can compare against other ideas, revisit after new evidence, and use to decide whether the next move is build, narrow, or kill.

That makes Genhone most useful before the product sprint begins: when the idea still needs sharper buyer definition, stronger pain evidence, pricing logic, MVP boundaries, and distribution realism.

FAQ

How long does it take to validate a SaaS idea?

A focused first pass can take a few days. Deeper validation often takes two to four weeks depending on interview access, pricing tests, traffic quality, and how narrow the buyer is.

How many interviews do I need?

Five to ten conversations can reveal repeated patterns if the audience is narrow. More interviews may be needed if the answers are inconsistent or if you are still mixing buyer types.

Can I validate a SaaS idea without a landing page?

Yes. Interviews, community research, competitor reviews, and manual pilots can validate core pain before a landing page. A landing page is more useful for testing messaging, pricing intent, and traffic quality.

Is a competitor a bad sign?

No. Competitors often prove demand. The question is whether users have a repeated complaint or underserved segment you can serve better.

Can ChatGPT validate my SaaS idea?

ChatGPT can help structure assumptions, draft interview questions, and organize research. It cannot prove willingness to pay or buyer urgency. Treat it as a thinking aid, not validation.

Should I validate before writing code?

Yes. Faster development makes validation more important, not less. Validate the buyer, pain, pricing, distribution, and MVP boundary before you commit engineering time.

Sources and Examples

- YC's Essential Startup Advice

- Do Things That Don't Scale

- Community and review examples: r/SaaS, Indie Hackers, Product Hunt, G2, Capterra, and the Shopify App Store