SaaS Competitor Analysis Before MVP

A pre-build framework for finding the market gap, pricing anchor, and MVP boundary before you write code.

To run SaaS competitor analysis before an MVP, identify direct competitors, indirect alternatives, and the status quo; study who they serve, what buyers complain about, how they price and package the product, where they acquire customers, and which features appear in every solution. Use the research to define one underserved buyer, one painful workflow, one defensible wedge, and the smallest MVP that tests that wedge. Do not copy the competitor feature list.

SaaS competitor analysis before MVP is not a spreadsheet ritual. It is a decision tool for founders who need to know whether a market has real alternatives, where buyers are still dissatisfied, and what the first product should exclude.

Shipping multiple apps and using AI-assisted development early makes one painful pattern clear: it is now easy to build a polished MVP before you understand the alternatives buyers already tolerate.

What SaaS Competitor Analysis Means Before MVP

SaaS competitor analysis before MVP is the process of studying current alternatives, direct competitors, indirect substitutes, pricing, positioning, buyer complaints, distribution, and product boundaries before building. The goal is not to prove the idea is unique. The goal is to decide whether demand exists, where the opening is, and what the smallest credible MVP should test.

Mature SaaS competitive analysis asks how to win a market you are already in. Pre-MVP competitor analysis asks whether there is a narrow enough opening worth building into.

That distinction matters. Mature SaaS teams use competitive analysis for sales enablement, roadmap planning, market positioning, win/loss work, and campaign strategy. A pre-MVP founder uses it to choose the buyer, wedge, package, first channel, and build boundary.

A competitor can be evidence of demand. If people already pay, complain, switch, or tolerate workarounds, the problem may have weight. Zero competitors can mean hidden opportunity, but it can also mean weak pain, no budget, poor distribution, or a non-software workaround that is good enough.

If you are testing the whole business case, use the broader guide to validate a SaaS idea before building. Here, the job is narrower: use competitor evidence to avoid building the wrong first version.

Do Not Build a Feature Spreadsheet and Call It Strategy

A feature matrix is useful only when it changes a decision. If the output is a long list of competitor features, the usual next step is a me-too MVP that tries to look complete instead of proving a wedge.

The better question is why competitors win, where buyers remain dissatisfied, what buyers currently tolerate, and what the first product must not include. Before an MVP, competitor analysis should answer one question at a time:

- Is there real demand?

- Which buyer is underserved?

- Which workflow is painful?

- Which alternative is good enough?

- Which price and package are plausible?

- Which first channel is realistic?

- What should the MVP exclude?

AI-generated competitor reports can organize hypotheses, summarize public pages, and create first-pass matrices. They cannot verify buyer urgency, review authenticity, willingness to pay, or switching behavior by themselves. Founders still need to inspect public evidence and buyer language directly.

| Weak competitor analysis | Better pre-MVP question | Decision it should affect |
|---|---|---|
| List every competitor feature. | Which features are table stakes for the first buyer, and which are distractions? | MVP scope. |
| Count competitors and panic. | Are there 3-10 relevant alternatives with visible weaknesses? | Build, narrow, or stop. |
| Copy competitor pricing. | What price anchors and current spend does the buyer already understand? | Pricing hypothesis. |
| Save screenshots of homepages. | Which buyer, outcome, and promise does each competitor emphasize? | Positioning. |
| Read one negative review. | Which complaints repeat across the same ICP or workflow? | Differentiation wedge. |
| Ask AI if the market is saturated. | Which competitors prove demand, and which make the wedge too crowded? | Idea selection. |

The rule is simple: if the research does not change buyer, scope, price, channel, or stop criteria, it is probably documentation, not strategy.

The Pre-MVP Competitor Evidence Map

Use this map to turn competitor research into a build, narrow, retest, or stop decision. It is not linear homework. Fill each layer with enough evidence to decide what to do next.

| Evidence layer | Question to answer | Where to look | Strong signal | Weak signal | Decision if weak |
|---|---|---|---|---|---|
| 1. Buyer match | Do competitors serve the buyer you want to serve? | Homepages, case studies, reviews, testimonials, LinkedIn, app stores. | Several competitors sell to a similar buyer, but none owns your narrow segment. | Competitors serve totally different buyers, or your buyer never appears. | Redefine ICP before MVP. |
| 2. Current alternative | What does the buyer use today? | Interviews, reviews, Reddit, G2, Capterra, Product Hunt, niche marketplaces, YouTube walkthroughs. | Buyer already pays, works around, or tolerates a known cost. | No workaround, spend, or repeated complaint is visible. | Retest pain or stop. |
| 3. Complaint pattern | What repeated frustration creates the opening? | 1-3 star reviews, Reddit threads, support forums, X posts, customer comments. | Same ICP repeats the same complaint across multiple sources. | Isolated complaint or vague "too expensive" feedback. | Narrow the wedge or interview buyers. |
| 4. Table-stakes feature | What must exist for credibility? | Competitor onboarding, docs, public demos, pricing pages. | A small set of features appears in every serious product and supports the core workflow. | Feature list grows into platform scope. | Shrink MVP to the first workflow. |
| 5. Differentiator | What can you do meaningfully differently? | Positioning pages, review gaps, founder expertise, workflow teardowns. | Clear underserved segment, workflow, simplicity, speed, trust, integration, or pricing angle. | "Better UX" or "AI-powered" without a buyer-specific reason. | Rework positioning. |
| 6. Pricing anchor | What does the buyer already compare this to? | Pricing pages, review comments, procurement pages, public plans, current-spend interviews. | Price range maps to existing spend, saved time, avoided risk, or revenue impact. | Pricing copied from competitors without value logic. | Validate pricing separately. |
| 7. Distribution signal | Where do competitors acquire attention? | Organic keywords, communities, ads, integrations, marketplaces, comparison pages. | A reachable channel exists for the first 20 qualified users. | Competitors rely on channels the founder cannot access yet. | Pick a narrower channel or buyer. |
| 8. Saturation risk | Is the wedge defensible for a solo founder? | Search results, ad density, funding, team size, review volume, launch frequency. | There is demand plus a specific gap incumbents ignore. | Market has no demand or is dominated by many funded, fast-moving products. | Choose a subsegment or another idea. |
| 9. MVP boundary | What is the smallest version that tests the gap? | Evidence map synthesis, interviews, competitor workflows. | MVP covers one buyer, one workflow, one outcome, and one differentiator. | MVP must match broad competitor platforms to be credible. | Narrow or stop. |

Each weak signal should change the idea, not add more features. If buyer match is weak, define the ICP for a SaaS idea before building. If pricing anchors are weak, pricing needs a separate test. If distribution is weak, the problem may be real but unreachable for the founder right now.
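One way to keep the map actionable is to store the "Decision if weak" column as data and list the follow-up for every layer that is not yet strong. The sketch below is only an illustration: the layer keys, the `next_actions` helper, and the example signals are hypothetical names, not part of any tool.

```python
# "Decision if weak" column of the evidence map, keyed by a short layer name.
# The keys and the helper below are illustrative assumptions.
DECISION_IF_WEAK = {
    "buyer_match": "Redefine ICP before MVP.",
    "current_alternative": "Retest pain or stop.",
    "complaint_pattern": "Narrow the wedge or interview buyers.",
    "table_stakes": "Shrink MVP to the first workflow.",
    "differentiator": "Rework positioning.",
    "pricing_anchor": "Validate pricing separately.",
    "distribution": "Pick a narrower channel or buyer.",
    "saturation_risk": "Choose a subsegment or another idea.",
    "mvp_boundary": "Narrow or stop.",
}


def next_actions(signals: dict[str, str]) -> list[str]:
    """Return the follow-up action for every layer not marked 'strong'.

    A layer missing from `signals` counts as weak, because no evidence
    has been collected for it yet.
    """
    return [
        f"{layer}: {action}"
        for layer, action in DECISION_IF_WEAK.items()
        if signals.get(layer, "weak") != "strong"
    ]


# Hypothetical example: every layer is strong except pricing and distribution.
signals = {layer: "strong" for layer in DECISION_IF_WEAK}
signals["pricing_anchor"] = "weak"
signals["distribution"] = "weak"

for line in next_actions(signals):
    print(line)
```

The output is a short to-do list of idea changes rather than a list of features, which matches the intent of the map.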

Keep review mining and competitor teardowns tied to the ICP. Complaints from enterprise buyers may not matter for an SMB wedge. SMB complaints may not matter for a compliance-heavy enterprise product. The strongest output is a written decision: build, narrow, retest, or stop.

Start With Competitor Types, Not Competitor Names

Founders often search only for products that match their idea wording. That misses the real current alternative. A buyer may already solve the problem with a spreadsheet, agency, manual process, internal script, marketplace service, or deliberate inaction.

"No direct competitors" should trigger a broader alternatives search, not immediate confidence.

| Competitor type | What it means | Example for a Shopify retention SaaS | Why it matters before MVP |
|---|---|---|---|
| Direct competitor | Same buyer, similar product, similar job. | Shopify retention or lifecycle marketing apps. | Shows table-stakes workflows and buyer expectations. |
| Indirect competitor | Same problem, different product category. | Email platforms, loyalty tools, customer analytics tools. | Reveals alternative budgets and positioning anchors. |
| Substitute | Non-software or service-based way to solve the problem. | Freelancer, agency, manual CSV analysis, spreadsheets. | Proves current spend or manual cost. |
| Status quo | The buyer lives with the pain or uses a rough workaround. | Doing no retention analysis beyond default email flows. | Shows whether pain is urgent enough to change. |
| Adjacent competitor | Similar buyer, adjacent workflow. | Upsell, review, segmentation, or customer support apps. | Shows channels, packaging norms, and cross-sell expectations. |
| Aspirational competitor | Larger player whose strategy reveals market direction. | Klaviyo or Shopify-native ecosystem leaders. | Useful for context, but dangerous to copy for MVP scope. |

Aspirational competitors are especially dangerous. They can explain category direction, but they should not define the first product. A solo founder does not need to copy the roadmap of a market leader to test one buyer, one workflow, and one outcome.

What to Analyze Before You Build

Scope competitor research to one buyer and one product hypothesis. A category-wide audit creates too much noise. A narrow audit can reveal whether the first buyer already pays, complains, searches, switches, or works around the pain.

| Dimension | What to collect | Useful free or low-cost sources | Decision it informs | Trap to avoid |
|---|---|---|---|---|
| Buyer and segment | Who competitors speak to, case studies, testimonials, review authors. | Homepages, customer pages, LinkedIn, G2, Capterra, Product Hunt, app marketplaces. | ICP and first buyer. | Treating the whole category as one audience. |
| Positioning | H1s, subheads, CTAs, category labels, comparison claims, "for X" language. | Competitor sites, ads, comparison pages, launch pages. | Differentiation and value proposition. | Copying phrases without understanding the buyer. |
| Product workflow | Onboarding, core user journey, required inputs, output, integrations. | Free trials, demos, docs, YouTube, help centers. | MVP scope and solution mechanics. | Mistaking every visible feature for table stakes. |
| Pricing and packaging | Price points, billing model, tiers, free trial, freemium, usage limits, upgrade triggers. | Pricing pages, terms, reviews, archived pages. | Business model and pricing hypothesis. | Copying price before validating value. |
| Complaints and praise | Repeated negative and positive review themes. | G2, Capterra, Product Hunt, Shopify App Store, Chrome Web Store, Reddit, X. | Alternative gap and wedge. | Overweighting one loud complaint. |
| Distribution | SEO pages, community presence, marketplaces, integrations, ads, partnerships, content. | Google results, Similarweb free views, Meta Ad Library, BuiltWith, newsletters, communities. | Go-to-market channel and first-user access. | Choosing a channel because competitors use it at a later stage. |
| Traction and saturation | Review volume, update cadence, funding, hiring, ad activity, community mentions. | Product Hunt, review sites, Crunchbase pages, LinkedIn, Meta Ad Library, search results, Google Trends. | Market timing and saturation risk. | Treating funding as proof that a bootstrapped wedge is easy. |
| Trust requirements | Security pages, SOC 2/GDPR claims, onboarding promises, implementation support, support complaints. | Security pages, docs, reviews, sales pages, Wappalyzer. | MVP credibility and sales-cycle complexity. | Building a product that needs enterprise trust before the founder can support it. |

Use public free trials ethically. Stick to public onboarding, public docs, published demos, review sites, and your own buyer conversations. Do not misrepresent yourself to sales teams or seek non-public information.

Save screenshots and URLs only when they support a decision. A notes doc or small spreadsheet is enough. The artifact that matters is the decision map, not the research pile.

Mine Reviews for the Wedge, Not for a Complaint Wall

Reviews are strongest when filtered by buyer type, use case, company size, plan, and workflow. Negative reviews reveal friction. Positive reviews reveal what buyers refuse to lose.

Look for patterns, not isolated gripes. "Too complex" can imply a simplicity wedge. "Too expensive" can imply the wrong segment, a packaging gap, or a value mismatch. "Missing integration" can imply a workflow gap. "Support is slow" can imply unmet trust or service expectations. "Hard to set up" can imply an onboarding or done-for-you opportunity.

Do not turn every complaint into a feature request. The wedge should be specific enough to write as an "Unlike [alternative]..." sentence.

| Review pattern | What it might mean | Pre-MVP action |
|---|---|---|
| Same buyer says setup is too hard. | Simplicity or guided onboarding wedge. | Test a manual onboarding or narrower workflow. |
| Many users complain about missing one integration. | Workflow gap for a specific tool ecosystem. | Validate whether that ecosystem is the first ICP. |
| Users love the product but hate the price jump. | Packaging or SMB segment gap. | Test a narrower self-serve package. |
| Reviews praise one core feature repeatedly. | Table-stakes capability or value anchor. | Include the minimum credible version if it supports the wedge. |
| Buyers complain about reporting or visibility. | Output or proof gap. | Validate whether a report/audit MVP can test demand. |
| Complaints come only from enterprise users. | May not matter for a solo-founder SMB wedge. | Keep segment boundaries clear. |

If you cannot tie the complaint to the first buyer, do not put it in the MVP.
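If you tag review notes by hand, a few lines of Python can separate repeated complaints from isolated ones for a single ICP. This is a minimal sketch under stated assumptions: the `repeated_complaints` helper, the record fields, the `min_sources=2` cutoff for "repeated," and the sample notes are all hypothetical, not real review data.

```python
from collections import defaultdict


def repeated_complaints(reviews, icp, min_sources=2):
    """Return complaint themes the given ICP repeats across several sources.

    `reviews` is a list of dicts with 'icp', 'source', and 'theme' keys.
    `min_sources=2` is an assumed cutoff for "repeated"; tune it to your data.
    """
    sources_by_theme = defaultdict(set)
    for review in reviews:
        # Ignore complaints from buyers outside the target ICP.
        if review["icp"] == icp:
            sources_by_theme[review["theme"]].add(review["source"])
    return sorted(
        theme
        for theme, sources in sources_by_theme.items()
        if len(sources) >= min_sources
    )


# Hypothetical hand-tagged notes, not real review data.
reviews = [
    {"icp": "solo_operator", "source": "app_store", "theme": "setup too hard"},
    {"icp": "solo_operator", "source": "reddit", "theme": "setup too hard"},
    {"icp": "solo_operator", "source": "app_store", "theme": "too expensive"},
    {"icp": "enterprise", "source": "g2", "theme": "missing SSO"},
    {"icp": "enterprise", "source": "capterra", "theme": "missing SSO"},
]

print(repeated_complaints(reviews, "solo_operator"))  # ['setup too hard']
```

Counting distinct sources rather than raw mentions keeps one loud thread from looking like a pattern, and the ICP filter keeps enterprise complaints out of an SMB wedge.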

Separate Table Stakes From MVP Scope

Table stakes are credibility requirements for the target buyer and workflow. MVP scope is the smallest version that tests the wedge. Those are not the same thing.

Differentiators are not always features. They can be segment, workflow, onboarding, pricing, integration, trust, speed, support, or packaging. Classify each finding before it becomes scope:

- must-have to test the workflow

- must-have for credibility

- differentiator

- later feature

- distraction

| Feature finding | Classification question | MVP implication |
|---|---|---|
| Every competitor has it and buyers mention it as basic. | Is this required for the first workflow to be credible? | Include the smallest viable version. |
| One competitor has it and markets it heavily. | Does your first buyer care? | Usually defer unless interviews confirm. |
| Reviewers complain it is missing. | Are those reviewers your ICP? | Include only if it supports the wedge. |
| It enables the differentiator. | Can the MVP prove the unique promise without it? | Include or test manually. |
| It exists only in enterprise plans. | Does the first buyer need enterprise-grade capability? | Defer for a self-serve MVP. |
| It makes the product look complete. | Does it change the validation result? | Cut it. |

If the feature list is already growing, use the broader SaaS idea validation checklist to force a build, narrow, or stop decision.
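The classification step above can be sketched as a small decision function that assigns each finding one of the five labels. The field names and the order of the checks are assumptions made for illustration; the article does not prescribe a precedence, so adjust it to your own judgment.

```python
from dataclasses import dataclass


@dataclass
class FeatureFinding:
    name: str
    needed_for_first_workflow: bool = False  # the workflow cannot be tested without it
    enables_differentiator: bool = False     # the unique promise depends on it
    needed_for_credibility: bool = False     # the first buyer treats it as basic
    icp_cares: bool = False                  # confirmed by first-buyer reviews or interviews


def classify(finding: FeatureFinding) -> str:
    """Map a feature finding to one of the five labels (assumed precedence)."""
    if finding.needed_for_first_workflow:
        return "must-have to test the workflow"
    if finding.enables_differentiator:
        return "differentiator"
    if finding.needed_for_credibility:
        return "must-have for credibility"
    if finding.icp_cares:
        return "later feature"
    return "distraction"


# Hypothetical findings for illustration only.
findings = [
    FeatureFinding("opportunity report", needed_for_first_workflow=True),
    FeatureFinding("email sending", icp_cares=True),
    FeatureFinding("enterprise SSO"),
]

for finding in findings:
    print(f"{finding.name}: {classify(finding)}")
```

Anything that lands in "later feature" or "distraction" stays out of the first build, however common it is among competitors.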

Use Competitor Pricing as an Anchor, Not an Answer

Competitor pricing shows what the market has already been taught to expect. It does not prove your price, because your buyer, package, trust, value metric, support burden, and segment may differ.

Collect the pricing model, starting price, tiers, free trial or freemium offer, value metric, upgrade trigger, annual discount, usage limits, and implementation requirements. Then compare those findings against the current alternative, not only direct competitors.

If competitors are expensive and buyers tolerate them, the pain may be valuable. If competitors are cheap and reviews still complain, the problem may be low-value, the package may be confusing, or buyers may be in the wrong segment.

For a pre-MVP founder, the useful question is not "What do competitors charge?" It is "What does this buyer already compare the problem to?" That comparison may be another SaaS product, contractor time, agency fees, internal labor, lost revenue, or risk.

Competitor pricing is only one input. Before treating a price point as validated, use the pricing validation guide to validate SaaS pricing before launch.

Use Competitor Distribution to Find Your First 20 Users

The question is not "What channel is scalable?" The question is "Where can this founder reach the first 20 qualified buyers?"

Competitor distribution signals include SEO pages, target keywords, marketplace listings, integrations, communities, comparison pages, Product Hunt launches, paid ads, affiliate or partner pages, founder-led social, public templates, and calculators. A channel can be proven but unusable for a solo founder right now.

| Competitor channel signal | What it suggests | Founder test |
|---|---|---|
| Competitors rank for long-tail problem keywords. | Search intent exists. | Publish one focused problem page or manually contact search communities. |
| Competitors depend on paid ads. | Commercial intent may exist, but CAC may be high. | Use ads only as small-message tests or avoid until economics are clearer. |
| Competitors sell through a marketplace. | Buyer searches inside an ecosystem. | Study marketplace reviews and test a listing or ecosystem community. |
| Competitors have many integrations. | Workflow depends on existing tools. | Pick one integration ecosystem as the wedge. |
| Competitors are active in niche communities. | Buyer can be reached directly. | Join, observe language, and conduct problem interviews. |
| Competitors have comparison pages. | Alternative-aware traffic exists. | Save for later unless the MVP has a credible switching story. |

Use competitor distribution to identify reachable early channels, not to copy a scaled growth engine. If the only visible channels are enterprise sales, high-budget paid acquisition, or established partner networks, the first buyer or wedge may need to narrow.

For Genhone's own audience, that often means community and founder channels such as Reddit r/SaaS and Indie Hackers before paid acquisition or scaled SEO.

Decide Whether to Build, Narrow, or Stop

Competitor analysis should end in a written decision, not a folder of screenshots. The best early outcome is often "narrow," not "build." A crowded market can still be attractive if the wedge is specific. A novel idea can still be weak if no buyer uses an alternative or feels urgency.

Write decision criteria before opening Cursor, Lovable, Bolt, Claude Code, or a starter template.

| Outcome | Evidence pattern | Next move |
|---|---|---|
| Build | The buyer is specific, current alternatives prove demand, repeated complaints expose a gap, pricing anchors make sense, first channel is reachable, and MVP can test one workflow. | Build the narrowest MVP that tests the wedge. |
| Narrow | Demand exists, but the buyer, workflow, feature boundary, package, price, or channel is too broad. | Rewrite the idea around one segment, one alternative, and one painful workflow. |
| Retest | Evidence is mixed or comes from the wrong buyer. | Run 5-10 targeted interviews, a manual pilot, pricing conversation, or channel test. |
| Stop | No current alternative, no repeated pain, no reachable buyer, no plausible pricing, or the wedge requires matching mature platforms. | Save the artifact and move to a stronger idea. |

This is where competitor analysis connects to customer validation. Competitor research can suggest the wedge. Interviews, pricing tests, manual pilots, and buyer behavior decide whether the wedge deserves an MVP.
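Writing the criteria down can be as simple as encoding the outcome table as a rule. The following is a rough sketch, not a scoring model: the layer names, the four state labels, and the precedence (stop, then retest, then narrow, then build) are assumptions chosen for illustration.

```python
# Evidence layers named in the "Build" row of the outcome table (assumed keys).
LAYERS = (
    "buyer",
    "current_alternative",
    "complaint_pattern",
    "pricing_anchor",
    "channel",
    "mvp_boundary",
)
VALID_STATES = {"strong", "too_broad", "mixed", "absent"}


def decide(evidence: dict[str, str]) -> str:
    """Return 'build', 'narrow', 'retest', or 'stop' from per-layer states.

    A layer with no entry is treated as 'mixed', so missing evidence
    triggers a retest rather than a stop.
    """
    states = []
    for layer in LAYERS:
        state = evidence.get(layer, "mixed")
        if state not in VALID_STATES:
            raise ValueError(f"unknown state for {layer}: {state!r}")
        states.append(state)

    if "absent" in states:     # e.g. no current alternative, no reachable buyer
        return "stop"
    if "mixed" in states:      # mixed evidence or evidence from the wrong buyer
        return "retest"
    if "too_broad" in states:  # demand exists but the idea is not specific enough
        return "narrow"
    return "build"


# Hypothetical evidence: demand looks real, but the buyer is still too broad.
evidence = {layer: "strong" for layer in LAYERS}
evidence["buyer"] = "too_broad"
print(decide(evidence))  # narrow
```

Fixing the rule before the research is finished makes it harder to rationalize a "build" decision after the fact.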

Worked Example: Shopify Retention SaaS

Hypothetical idea: a SaaS tool that helps solo Shopify operators find missed repeat-purchase opportunities and send simple retention actions without hiring a lifecycle marketer.

| Evidence layer | Weak version | Stronger pre-MVP finding | Decision |
|---|---|---|---|
| Buyer | Shopify stores. | Solo Shopify operators with 300-2,000 monthly orders and no lifecycle marketer. | Narrow ICP. |
| Current alternatives | Email tools. | Klaviyo templates, manual CSV exports, spreadsheets, freelancer audits, or doing nothing. | Study current workflow before features. |
| Competitors | "Klaviyo exists, so this is impossible." | Larger tools prove demand but may be too broad for operators who need a lightweight retention audit. | Look for simplicity or workflow wedge. |
| Complaint pattern | "Too expensive." | Repeated complaints about setup complexity, knowing what to send, or reporting missed repeat revenue. | Wedge around guided retention opportunities, not full email automation. |
| Table stakes | Dashboard, email sending, analytics, templates, segmentation. | For MVP, maybe a manual retention audit plus opportunity report is enough to test payment. | Do not build full email platform. |
| Pricing anchor | $29/month because cheap feels safer. | Compare against current email tool spend, freelancer audit cost, and value of recovered repeat purchases. | Run pricing validation. |
| Distribution | SEO later. | Shopify communities, app store review mining, niche ecommerce operator groups, direct outreach to stores. | Build first-user list. |
| MVP boundary | AI retention platform. | Manual or semi-automated retention opportunity report for one store segment. | Test one workflow. |
| Decision | Build the whole app. | Narrow and run 5-10 interviews plus 3 paid manual audits before building software. | Retest before MVP. |

The competitor analysis does not validate this idea by itself. It reveals what to investigate next: the first ICP, the retention workflow, pricing logic, and whether a manual offer can create payment behavior before software exists.

The stronger MVP may be a manual audit or report. The founder could interview 5-10 matching operators, inspect current retention workflows, compare current email-tool spend, and offer 3 paid manual audits. If operators do not recognize missed repeat purchases, cannot explain current workarounds, or will not pay for a manual version, building a polished retention app is premature.

The same example depends on the ICP and price. Before turning the idea into an MVP spec, use the ICP guide to define the ICP for a SaaS idea, then use the pricing guide to validate SaaS pricing before launch.

How Genhone Fits Into Competitor Analysis

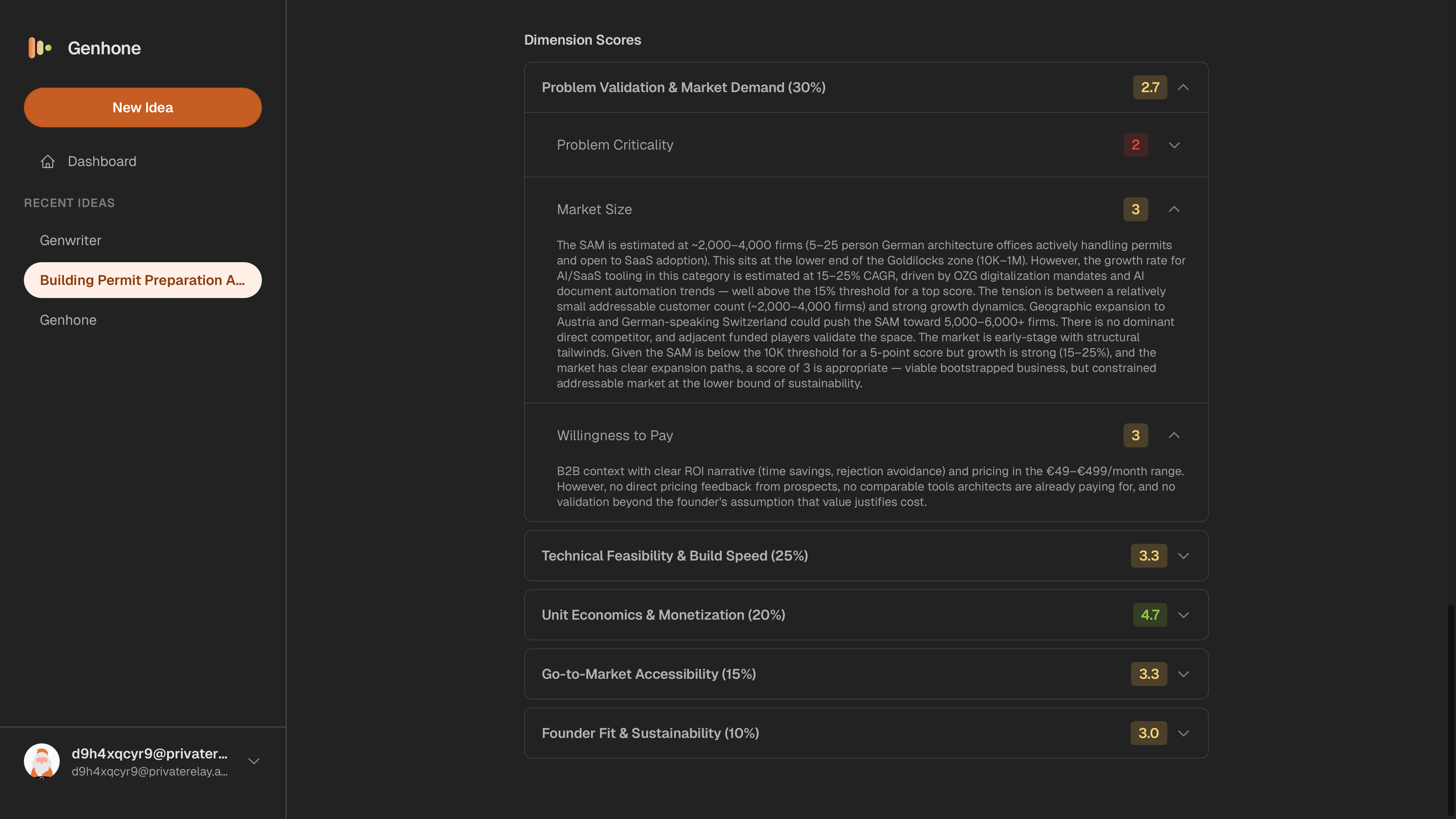

Genhone is not a standalone competitor intelligence platform. It helps founders structure the assumptions competitor analysis should inform: Customer Definition, Problem Definition, Solution Mechanics, Value Proposition, Business Model, Go-to-Market Approach, Scope and Boundaries, and Solo Founder Execution.

The refinement workflow forces the founder to name current alternatives and articulate positioning against them. Genhone's evaluation model also includes competitive landscape assessment as one scored criterion, including competitor count, competitor weaknesses, saturation, and funded-competitor risk.

Saved idea artifacts and idea comparison make competitor evidence useful across multiple possible SaaS ideas. That matters when one idea has a crowded but reachable wedge and another looks novel but has no visible alternatives or buying behavior.

For AI-assisted founders, the competitor evidence map should exist before writing a build prompt or PRD. If AI is part of your build process, read how to validate your app idea before building with AI, then use a structured SaaS idea refinement workflow to turn competitor evidence into saved assumptions.

Turn competitor research into a structured SaaS idea artifact before you build.

Common SaaS Competitor Analysis Mistakes Before MVP

The common mistakes are predictable:

- Treating no competitors as automatic validation.

- Copying features from incumbents.

- Confusing competitor research with customer validation.

- Researching the whole category instead of one ICP.

- Treating AI-generated market scans as verified evidence.

- Ignoring indirect alternatives and the status quo.

- Looking only at homepages.

- Overweighting enterprise competitor needs for a self-serve MVP.

- Mistaking "gap" for "buyers will pay."

- Building the MVP before writing stop criteria.

Competitor research can prove that a market exists. It cannot prove that your buyer will switch, pay, or trust you. Those require interviews, pricing tests, manual pilots, or other buyer behavior.

For AI-assisted builders, this should happen before the first build session. Use the questions before vibe coding an app if competitor analysis has created a tempting but still fuzzy product scope.

FAQ

How do you do competitor analysis for a SaaS MVP?

Identify direct competitors, indirect alternatives, substitutes, and the status quo. Analyze buyer segment, positioning, product workflow, pricing, complaints, distribution, and saturation. Then decide the underserved buyer, wedge, and smallest MVP that tests the opportunity.

Should I build a SaaS if competitors already exist?

Competitors can be a positive sign because they show demand and budget. Build only if you can identify a specific buyer, repeated complaint, reachable channel, pricing logic, and narrow wedge. Do not build a weaker copy of an established product.

Is no competition good for a SaaS idea?

Not automatically. No visible competitors can mean hidden opportunity, but it can also mean weak pain, no budget, poor distribution, or a non-software workaround. Search for substitutes and status quo behavior before treating novelty as validation.

What should I include in a SaaS competitor analysis?

Include direct and indirect competitors, current alternatives, target buyers, positioning, feature table stakes, pricing and packaging, review complaints, distribution channels, trust requirements, market saturation signals, and MVP implications.

How many competitors should I analyze before MVP?

Start with roughly 5-10 relevant alternatives. A typical mix is 2-4 direct competitors, 2-3 indirect alternatives, 1-2 substitutes or status quo workflows, and 1-2 aspirational competitors for market context. Depth matters more than a giant list.

Can AI do SaaS competitor analysis for me?

AI can speed up discovery, summarization, matrix creation, and question generation. It cannot verify buyer urgency, review authenticity, willingness to pay, or switching behavior by itself. Treat AI output as hypotheses and inspect sources directly.

Should competitor analysis happen before or after customer interviews?

Do a quick scan before interviews so you understand alternatives and can ask better questions. Then use interviews to test whether those alternatives matter to the specific buyer. After interviews, refine the competitor map around the real workflow and buyer language.