Questions to Answer Before Vibe Coding an App

A build-readiness question gate for founders using Cursor, Lovable, Bolt, v0, Replit, Claude Code, or Codex.

Before vibe coding an app, answer ten things: who the first buyer is, what painful workflow repeats, what they use today, what evidence proves they care, why they would pay or switch, where the first users will come from, what the smallest useful version should do, what AI should not build yet, what evidence would make you narrow or stop, and what the first AI prompt should do.

Use these questions before the first AI build prompt so the first build tests a real assumption instead of turning a vague idea into polished screens.

After shipping multiple LLM-assisted apps, I treat the first prompt as a commitment point: fuzzy assumptions usually become polished fuzziness.

Quick Answer: 10 Questions Before You Vibe Code an App

This is a question gate, not a guarantee. A weak answer does not mean the idea is bad. It means it is too early for the first build prompt.

| Question | Weak answer | Stronger answer | If your answer is weak |
|---|---|---|---|
| Who is the first buyer? | "Founders," "busy people," or "small businesses." | A role, context, and trigger moment. | Narrow the audience before prompting AI. |
| What painful workflow repeats? | "It would be useful." | A recurring task tied to time, money, risk, stress, or revenue. | Ask for last-time stories before building. |
| What do they use today? | "Nothing exists." | A current workaround, tool, spreadsheet, agency, manual process, or deliberate inaction. | Research current behavior and alternatives. |
| What evidence proves they care? | Friends like the idea. | Interviews, current spend, repeated complaints, manual work, or qualified demand. | Collect evidence from the target buyer. |
| Why would they pay or switch? | "It saves time." | A clear cost, revenue gain, risk reduction, or switching reason. | Run a pricing or manual service test. |
| Where will the first 20 users come from? | "Product Hunt" or "social media." | A reachable list, niche community, search query, marketplace, or outbound segment. | Build the user list before the app. |
| What is the smallest useful version? | A full dashboard, AI chat, integrations, and analytics. | One buyer, one workflow, one outcome. | Cut scope until the first version tests one assumption. |
| What should AI not build yet? | No non-goals. | Explicit exclusions for features, personas, integrations, and edge cases. | Write non-goals before the first prompt. |
| What would make you narrow or stop? | No stop rule. | Written conditions that invalidate buyer, pain, pricing, channel, or scope. | Define stop criteria before momentum starts. |
| What should the first AI prompt do? | "Build the app." | Ask AI to plan or implement the smallest testable slice from the validated answers. | Start with planning and missing-question detection, not implementation. |

For the fuller version, use our guide to validating an app idea before building with AI.

Turn your rough app idea into a structured validation artifact before your first AI build prompt.

Why These Questions Matter More When You Can Build Fast

Vibe coding compresses the gap between idea and working prototype. You can generate screens, flows, and code before the buyer, pain, pricing, or distribution question is clear.

That speed is useful, but visual progress can make weak assumptions feel real. A working dashboard does not prove a buyer exists. A finished integration does not prove anyone will pay or switch.

The question is not "Can I build this?" It is: "What assumption should this build test?"

If the app is meant to become a SaaS business, use the broader framework for validating a SaaS idea before building. Here, the question is what must be true before your first AI-assisted build session deserves the next hour or weekend.

Y Combinator's startup advice still centers on talking to users and learning before scaling.

Business Questions Are Not the Same as Prompt Questions

Business questions decide whether the app deserves a build and what the build should test. Prompt questions decide how to instruct AI once the business question is clear.

Vibe-coding advice often combines business readiness, PRDs, prompts, setup, deployment, and security. Those all matter, but they answer different questions. A better prompt can still encode the wrong buyer.

| Question type | Decides | Example | Wrong use |
|---|---|---|---|
| Business question | Whether and what to build. | "Who pays for this and why now?" | Treating a nice prompt as validation. |
| Scope question | What the first version should include or exclude. | "What is the one workflow the MVP must solve?" | Building every plausible feature. |
| Prompt question | How to instruct the AI tool. | "What context should Cursor, Lovable, or Codex receive first?" | Asking AI to invent the strategy. |
| Setup question | Where and how the app will run. | "What stack, hosting, and accounts are required?" | Solving infrastructure before buyer clarity. |
| Security question | Whether the app is safe to show users. | "Are secrets, auth, payments, and data access handled safely?" | Treating launch readiness as market validation. |

Start with business readiness. PRDs, prompts, setup, and security are useful after the core answers exist, but they do not prove buyer demand.

How to Answer the 10 Questions

1. Who is the first buyer?

Name a role, context, and trigger: [role] in [context] when [trigger] happens. "Anyone who wants to be more productive" gives you nowhere to research, price, or distribute. In B2B, separate the user from the budget owner.

2. What painful workflow repeats?

Look for a workflow, not a broad problem category. "Customer support is hard" is weaker than "support leads reassign refund requests every Monday." Ask what happened last time, which tools were open, and what it cost.

3. What do they use today?

Current alternatives are demand evidence: spreadsheets, manual work, agencies, scripts, existing tools, call forwarding, reminders, or deliberate inaction. "No competitors" should trigger more research because buyers usually have a workaround.

4. What evidence proves they care?

Stronger evidence includes buyer interviews, current spend, repeated complaints, manual work, qualified demand, or a paid pilot. Friend feedback, likes, broad surveys, and generic AI scores are weak. AI can produce hypotheses; buyer behavior provides proof.

5. Why would they pay or switch?

Payment logic should connect to money, time, risk, revenue, compliance, or status. "It saves time" is incomplete until you know whose time, how often, and whether the buyer pays for nearby solutions.

6. Where will the first 20 users come from?

Distribution is a validation question. Name a channel that matches the buyer: search, a niche community, marketplace, founder network, outbound list, or existing audience. "Product Hunt" is weak unless your buyer actually shops there.

7. What is the smallest useful version?

The first version should test the riskiest assumption. A strong MVP boundary is one buyer, one workflow, and one promised outcome. Sometimes the smallest useful version is a manual service, spreadsheet, landing page, mock flow, or concierge test.

8. What should AI not build yet?

Non-goals protect the first prompt. Exclude secondary personas, nice-to-have integrations, dashboards, admin panels, analytics, automations, and edge cases unless they test the core risk. Extra features still create surface area and maintenance burden.

9. What would make you narrow or stop?

Write stop criteria before the prototype exists. Useful criteria include no repeated pain in five to ten conversations, no budget owner, no reachable channel, strong alternatives, or an MVP that cannot be narrowed.

10. What should the first AI prompt do?

The first prompt should not automatically be "build the app." Ask AI to identify missing assumptions, convert answers into an MVP boundary, or plan the smallest testable slice. Once building starts, include the buyer, workflow, outcome, workaround, non-goals, and stop criteria.
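
As a minimal sketch of that last step, the answers can be collected into a small structure and rendered as a planning-first prompt. The `Brief` class, its field names, and the prompt wording are hypothetical illustrations, not a format that Cursor, Lovable, Codex, or any other tool requires. The sample data uses a missed-call follow-up idea for home-service shops.

```python
from dataclasses import dataclass, field


@dataclass
class Brief:
    """Hypothetical container for question-gate answers (names are illustrative)."""
    buyer: str
    workflow: str
    outcome: str
    workaround: str
    non_goals: list[str] = field(default_factory=list)
    stop_criteria: list[str] = field(default_factory=list)


def missing_fields(brief: Brief) -> list[str]:
    """Return the names of answers that are still empty."""
    return [name for name, value in vars(brief).items() if not value]


def bullets(items: list[str]) -> str:
    """Format a list of strings as dash-prefixed lines."""
    return "\n".join(f"- {item}" for item in items)


def planning_prompt(brief: Brief) -> str:
    """Render a prompt that asks for gaps and a plan, not a full app."""
    gaps = missing_fields(brief)
    if gaps:
        # Refuse to produce a prompt while core answers are blank.
        raise ValueError("Answer these before prompting: " + ", ".join(gaps))
    return "\n".join([
        "Do not write code yet.",
        f"Buyer: {brief.buyer}",
        f"Workflow: {brief.workflow}",
        f"Outcome: {brief.outcome}",
        f"Current workaround: {brief.workaround}",
        "Non-goals:",
        bullets(brief.non_goals),
        "Stop criteria:",
        bullets(brief.stop_criteria),
        "First, list any missing assumptions. Then plan the smallest testable slice.",
    ])


# Sample data only; replace with your own validated answers.
example = Brief(
    buyer="Owner-operators of 1-5 person plumbing and HVAC shops",
    workflow="Calls missed during job hours are returned late",
    outcome="Owner is reminded to follow up within 10 minutes",
    workaround="Voicemail or call forwarding",
    non_goals=["No full CRM", "No voice bot", "No payments"],
    stop_criteria=["Owners say missed calls are rare"],
)
print(planning_prompt(example))
```

Raising an error on empty answers is a deliberate choice in this sketch: it keeps a half-filled brief from turning into a confident-sounding prompt.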

What Good Answers Look Like

Use red, yellow, and green to decide whether an answer is ready for a build prompt.

| Grade | Meaning | Next move |
|---|---|---|
| Green | The answer is specific and supported by buyer behavior, current spend, repeated evidence, or direct access. | Build only the narrow slice that tests the core workflow. |
| Yellow | The idea seems plausible, but the buyer, evidence, price, channel, or scope is fuzzy. | Narrow the idea or run one focused validation test. |
| Red | The answer is broad, speculative, contradicted by evidence, or impossible to act on. | Do not vibe code yet. Fix the assumption or move to another idea. |

Most early ideas will have several yellow answers. That is normal. Yellow means "run a smaller test," not "add more features." Red pricing or distribution is especially risky because the product still needs someone who can pay and a path to first users.

Example: Before and After the Question Gate

Rough idea:

An AI app that helps local home-service businesses follow up with missed calls.

One validation pass does not prove the idea will work, but it can make the first build prompt less speculative.

| Question | Weak first answer | Stronger answer after one validation pass |
|---|---|---|
| First buyer | "Local businesses." | Owner-operators of 1-5 person plumbing and HVAC shops who miss calls while on jobs. |
| Painful workflow | "They miss leads." | Calls during job hours are returned late, and high-intent customers book another provider. |
| Current alternative | "They use phones." | Voicemail, call forwarding to spouse/admin, missed-call text tools, or no process. |
| Evidence | "Seems obvious." | 8 interviews, 5 describe missed calls as a weekly revenue leak, 3 already pay for call handling. |
| Payment/switching | "They would pay to get leads." | They already value booked jobs at $150-$500+ and pay for ads, call tracking, or admin help. |
| First users | "Google ads." | Direct outreach to 40 shops found through local search and trade groups; founder has access to two operators for workflow review. |
| MVP boundary | "AI receptionist dashboard." | Capture missed call, draft a callback SMS, log status, and remind owner within 10 minutes. |
| Non-goals | None. | No full CRM, no scheduling automation, no voice bot, no payments, no multi-location admin. |
| Stop criteria | None. | Stop if owners say missed calls are rare, existing call tools solve it, or SMS follow-up is not trusted. |
| First AI prompt | "Build the app." | Ask AI to turn the narrowed brief into a one-workflow MVP plan with explicit non-goals and missing assumptions. |

The stronger answers still need proof from usage, pricing tests, and buyer behavior. If most answers are still weak, the next action is research or interviews, not implementation.
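
To make the evidence row concrete, a sketch like the following tallies interview notes against a written stop rule. The `Interview` fields, the 0.5 `min_pain_share` threshold, and the minimum of five conversations before the rule applies are assumptions chosen for illustration; this article does not prescribe a numeric cutoff. The sample data also assumes the three paying owners are among the five who described weekly pain, which the example does not state.

```python
from dataclasses import dataclass


@dataclass
class Interview:
    """One buyer conversation; both flags are hypothetical note-taking fields."""
    weekly_pain: bool  # described the problem as a recurring weekly cost
    pays_today: bool   # already pays for a nearby solution


def evidence_summary(interviews: list[Interview], min_pain_share: float = 0.5) -> dict:
    """Count evidence and check an assumed stop rule."""
    total = len(interviews)
    pain = sum(i.weekly_pain for i in interviews)
    paying = sum(i.pays_today for i in interviews)
    share = pain / total if total else 0.0
    return {
        "interviews": total,
        "weekly_pain": pain,
        "pays_today": paying,
        # Only judge the stop rule once there are at least five conversations.
        "stop_rule_hit": total >= 5 and share < min_pain_share,
    }


# The missed-call example: 8 interviews, 5 with weekly pain, 3 already paying.
notes = (
    [Interview(True, True)] * 3
    + [Interview(True, False)] * 2
    + [Interview(False, False)] * 3
)
summary = evidence_summary(notes)
print(summary)
```

Writing the threshold down before the interviews start is the point of the exercise; the specific number matters less than having committed to one.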

Build, Narrow, or Do Not Vibe Code Yet

End the question gate with a decision before momentum starts.

| Verdict | Use when | Next action |
|---|---|---|
| Build | Buyer, pain, current behavior, payment logic, first channel, MVP scope, and non-goals are specific and supported by evidence. | Prompt AI to plan or build the narrowest testable slice. |
| Narrow | Pain seems real, but buyer, price, channel, or MVP scope is still fuzzy. | Tighten the idea and run one focused validation test. |
| Do not vibe code yet | Buyer is broad, pain is weak, no one pays or switches, first users are unreachable, or stop criteria are already met. | Save the idea, fix the broken assumption, or compare it against another idea. |

"Do not vibe code yet" is not a permanent no. It avoids turning unclear assumptions into code. Vibe coding works best when the first build tests something specific.

Idea comparison helps when you have multiple plausible ideas. The strongest one has the clearest buyer, painful workflow, reachable channel, narrow scope, and reason to pay.
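
One way to keep the verdict honest is to derive it mechanically from the red, yellow, and green grades. The rule in this sketch (any red blocks the build, any yellow means narrow, all green means build) is a simplifying assumption rather than a fixed method, and the question keys are hypothetical labels.

```python
from enum import Enum


class Grade(Enum):
    RED = "red"
    YELLOW = "yellow"
    GREEN = "green"


def verdict(grades: dict[str, Grade]) -> tuple[str, list[str]]:
    """Return a verdict plus the questions that need work first."""
    reds = [q for q, g in grades.items() if g is Grade.RED]
    yellows = [q for q, g in grades.items() if g is Grade.YELLOW]
    if reds:
        # Assumed rule: a single red answer is enough to hold off building.
        return "Do not vibe code yet", reds
    if yellows:
        return "Narrow", yellows
    return "Build", []


# Illustrative grades for one idea; the keys are made-up labels.
grades = {
    "first_buyer": Grade.GREEN,
    "painful_workflow": Grade.GREEN,
    "payment_logic": Grade.YELLOW,
    "first_channel": Grade.YELLOW,
    "mvp_scope": Grade.GREEN,
}
decision, to_fix = verdict(grades)
print(decision, to_fix)
```

Running the same function over several ideas gives a rough side-by-side comparison, though the grades themselves still depend on honest evidence.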


How Genhone Fits Before the First Prompt

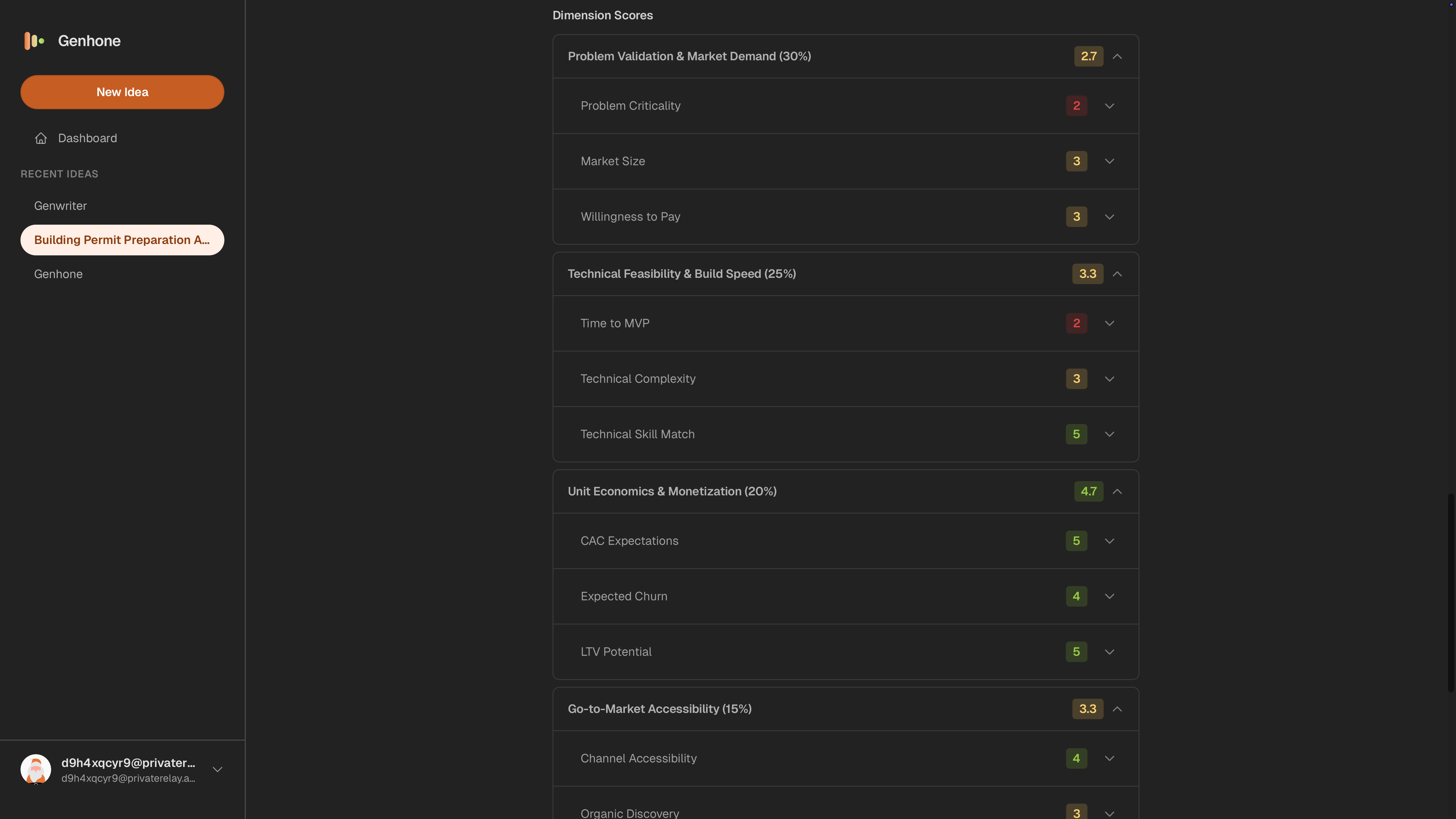

Genhone helps solo founders turn SaaS ideas into structured refinement artifacts through an idea validation workflow, before any coding starts.

It guides founders through 12 sections, including customer, problem, business model, go-to-market, and scope, then evaluates ideas across automated criteria and founder-fit checks. The result is a saved artifact you can compare before opening an AI coding tool.

Use Genhone when this question gate exposes yellow or red answers. It turns buyer, pain, pricing, distribution, scope, and founder-fit assumptions into something structured enough to judge. For a lighter review, use the SaaS idea validation checklist.

FAQ

What questions should I answer before vibe coding an app?

Answer the first buyer, repeated painful workflow, current alternative, evidence, payment or switching reason, first-user channel, MVP boundary, non-goals, stop criteria, and first AI prompt goal.

Should I write a PRD before vibe coding?

Yes, but only after the core business questions are clear. A PRD specifies what to build; it does not prove the app deserves to be built.

Can ChatGPT validate my app idea before I build it?

ChatGPT can structure assumptions, draft interview questions, summarize research, and identify gaps. It cannot prove urgency, willingness to pay, or distribution without real-world evidence.

How much validation is enough before vibe coding?

Enough to define one buyer, one repeated workflow, one credible reason to pay or switch, one first channel, and one narrow MVP. Five to ten focused buyer conversations can reveal patterns.

What if I can build the app in one weekend?

The build may be cheap, but maintenance, positioning, support, and opportunity cost are not free. Make sure the weekend build tests a real assumption.

What should my first AI coding prompt include?

Include the buyer, workflow, outcome, current workaround, MVP boundary, non-goals, evidence level, and what the AI should flag before implementation.