When to Kill, Narrow, or Keep a SaaS Idea

A pre-build decision framework for founders who need stop criteria before code turns into sunk cost.

Kill a startup idea when the buyer is vague, the pain is weak, no current alternative or payment evidence exists, distribution is unreachable, unit economics cannot work, or the MVP cannot be narrowed to a testable wedge. Narrow the idea when one part is real but the buyer, problem, pricing, channel, or scope is wrong. Keep going only when repeated behavior, current spend or workarounds, reachable buyers, and a small MVP point to the same opportunity.

The hard part is not deciding whether an idea is perfect. It is deciding whether weak evidence means stop, narrow, or run one more small test before you build.

After shipping multiple apps and adopting AI-assisted development early, I have learned that the expensive mistake is rarely a lack of code. It is keeping a fuzzy idea alive because a prototype was easy to make.

If you still need the broader validation process, start with how to validate a SaaS idea before building. This page is narrower: it helps you interpret the evidence and write the next decision.

What It Means to Kill a Startup Idea Before You Build

Killing a startup idea means stopping investment in the current business thesis. It does not mean the founder is bad, the research was wasted, or every adjacent idea is dead.

For a SaaS founder, an idea can fail at several layers: buyer, problem, urgency, current alternative, willingness to pay, distribution, MVP scope, unit economics, founder fit, and timing. One layer can break the whole thesis. A beautiful solution does not matter if nobody owns the pain. A clear buyer does not matter if the first channel is fantasy. A polished demo does not matter if the product needs enterprise trust before a solo founder can support it.

The required distinction is simple:

Killing an idea means stopping the current thesis. Narrowing means preserving the strongest evidence and changing the weak part. Keeping means the evidence is strong enough to justify the next small test.

A pre-build kill decision is cheaper and cleaner than a post-build shutdown. Before you build, you can save the interviews, competitor notes, buyer language, and pricing lessons without also carrying users, infrastructure, roadmap promises, and emotional attachment to the product surface.

AI-assisted development makes this decision more urgent. Cursor, Lovable, Bolt, Claude Code, v0, and similar tools reduce build friction, but they also make it easier to create product surface area before the business case is coherent. Fast code does not remove the need for a buyer, a painful workflow, a reachable channel, and a price that can work.

Kill, Narrow, or Keep: The Three Useful Verdicts

The practical decision is not only pivot or persevere. That language is useful in lean-startup methodology, but pre-build SaaS ideas often need a more precise middle state.

Use three verdicts:

| Verdict | What it means | Typical evidence | Next action |
|---|---|---|---|
| Kill | Stop investing in the current thesis. | No specific buyer, weak pain, no current alternative, no willingness-to-pay evidence, unreachable buyers, impossible economics, or MVP cannot be narrowed. | Save the learning, choose another idea, or restart from a different problem. |
| Narrow | Preserve the signal, change the weak part. | Pain exists but buyer is broad; buyers complain but will not pay; competitors prove demand but wedge is unclear; channel works for a smaller segment. | Narrow ICP, trigger, workflow, package, price, channel, or MVP scope and retest. |
| Keep | Continue with the next small evidence-gathering step. | Repeated buyer behavior, current spend or workarounds, reachable channel, plausible pricing, and one testable MVP boundary align. | Run a manual pilot, pricing test, landing page, concierge test, or smallest MVP. |

Most early SaaS ideas should land in narrow, not keep. Narrow is useful because it turns a vague product wish into a sharper experiment: one buyer, one workflow, one trigger, one package, one channel, or one MVP boundary.

Keep still does not mean "build everything." It means the evidence supports another small test. The next step should be the smallest thing that could disprove the riskiest assumption.

The goal is not to be ruthless for its own sake. The goal is to stop confusing unresolved assumptions with progress.

The SaaS Idea Decision Ledger

Complete this ledger before writing product code. Treat it as a decision artifact, not a scorecard where every row gets averaged.

| Decision layer | Question to answer | Keep signal | Narrow signal | Kill signal | Evidence to collect next |
|---|---|---|---|---|---|
| 1. Buyer clarity | Can you name the first buyer and the context where they feel the problem? | One role, one situation, and a reachable list of prospects. | Buyer type is plausible but too broad or mixed. | "Everyone," "founders," "small businesses," or no buyer owner. | 5-10 interviews with one narrow ICP. |
| 2. Pain urgency | Does the problem create time, money, risk, revenue, or retention pressure? | Buyers describe recent painful events and consequences. | Pain is real but occasional, low-priority, or tied to another segment. | Nice-to-have language with no consequence. | Last-time story interviews and workflow walkthroughs. |
| 3. Current alternative | What does the buyer use or tolerate today? | Paid tools, manual workarounds, spreadsheets, agencies, employee time, or clear switching pain. | Alternatives exist but are used by a different segment or workflow. | No workaround, no search, no spend, and no visible consequence. | Competitor or review scan plus interview questions about current behavior. |
| 4. Willingness to pay | Is there behavior that points to money, budget, or economic value? | Current spend, paid pilot, preorder, serious sales conversation, or budget owner engagement. | Buyers value the outcome but package, price, or buyer owner is unclear. | Compliments, waitlists, or hypothetical "I would pay" only. | Pricing conversation, fake-door checkout, or paid manual offer. |
| 5. Competitive wedge | Do competitors prove demand while leaving a narrow opening? | Several alternatives exist and repeat complaints reveal an underserved wedge. | Market exists but the wedge is too broad or feature-led. | No competitors and no substitutes, or incumbents solve the problem well enough for the buyer. | Competitor evidence map and review mining. |
| 6. Distribution access | Can you reach the first 20-100 qualified users without fantasy channels? | Specific communities, search queries, marketplaces, lists, partnerships, or founder network access. | A channel exists for a narrower buyer or sharper pain. | "Go viral," broad social posting, paid ads with no budget, or no buyer gathering place. | First-user sourcing plan and channel test. |
| 7. MVP boundary | Can the first version test one buyer, one workflow, and one outcome? | MVP can be simulated or built as a narrow slice. | Product idea has a real core but includes too many workflows or features. | Credibility requires a broad platform, many integrations, or long enterprise trust before any learning. | Scope cut, concierge test, clickable prototype, or manual workflow. |
| 8. Unit economics | Could the price, margin, support load, and acquisition path work for a solo SaaS? | Rough LTV, CAC, price point, churn, and support assumptions are plausible. | Economics may work for a different segment, package, or price. | Price is too low, support too high, market too small, or CAC path too expensive. | Back-of-envelope model and pricing validation. |
| 9. Founder fit | Is the founder suited to test and sustain this idea? | Strong skill, domain, network, interest, and execution fit. | Idea is viable but founder needs narrower scope or a different GTM motion. | Requires capabilities, compliance, sales motion, or support load the founder cannot handle. | Founder-fit reflection and constraint review. |
| 10. Timing and change | Is now a good time for this buyer and workflow? | New regulation, AI capability, platform shift, budget pressure, or market behavior creates urgency. | Timing exists for an adjacent buyer or smaller wedge. | The window has closed, budget has moved away, or the pain no longer exists. | Recent market scan and buyer interviews. |

The rows are connected. A strong competitor signal does not rescue a weak buyer. A clear buyer does not rescue impossible distribution. A good founder fit does not rescue a price point that cannot support the support load.

Do not average your way out of a fatal flaw. If nobody owns the pain or pays for the outcome, strong feature ideas do not matter.

One red signal can be enough when it breaks the business thesis: no buyer, no pain, no payment logic, or impossible distribution. Several yellow signals usually mean the idea deserves a sharper test, not a build. The output should be a written verdict plus a next action: kill the thesis, narrow a specific layer, or keep going with the next smallest evidence-gathering step.
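The no-averaging rule can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the layer names, the `FATAL_LAYERS` set, the `ledger_verdict` function, and the default of two yellows counting as "several" are all hypothetical choices, not part of any tool. The sketch also treats a red on a non-fatal layer as a reason to narrow rather than kill, which is one possible reading of the rule.

```python
from enum import Enum


class Signal(Enum):
    KEEP = "keep"      # green
    NARROW = "narrow"  # yellow
    KILL = "kill"      # red


# Assumption: these four layers map to the thesis-breaking reds named above
# (no buyer, no pain, no payment logic, impossible distribution).
FATAL_LAYERS = {
    "buyer_clarity",
    "pain_urgency",
    "willingness_to_pay",
    "distribution_access",
}


def ledger_verdict(ledger: dict[str, Signal], several: int = 2) -> tuple[str, list[str]]:
    """Return a verdict plus the layers that drove it. Nothing is averaged."""
    reds = [layer for layer, signal in ledger.items() if signal is Signal.KILL]
    fatal = [layer for layer in reds if layer in FATAL_LAYERS]
    if fatal:
        # One red on a fatal layer is enough, however green the rest looks.
        return "kill", fatal

    yellows = [layer for layer, signal in ledger.items() if signal is Signal.NARROW]
    if reds or len(yellows) >= several:
        # Assumption: a non-fatal red, or several yellows, means a sharper test.
        return "narrow", reds + yellows

    return "keep", yellows


example = {
    "buyer_clarity": Signal.NARROW,
    "pain_urgency": Signal.KEEP,
    "current_alternative": Signal.KEEP,
    "willingness_to_pay": Signal.KILL,
    "competitive_wedge": Signal.KEEP,
}
print(ledger_verdict(example))  # ('kill', ['willingness_to_pay'])
```

Returning the layers alongside the verdict keeps the output close to the written decision described above: it names which assumption drove the call instead of producing a score.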

If the buyer row is weak, define the ICP for a SaaS idea before touching scope. If payment logic is weak, validate SaaS pricing before launch. If the competitive wedge is unclear, run SaaS competitor analysis before MVP. For the full artifact, use the SaaS idea validation checklist.

When to Kill a SaaS Idea

Kill the current idea when the failed assumption is structural, not merely untested. Untested means you do not know yet. Structural means repeated evidence points against the thesis or the missing piece is not realistically fixable for this founder and market.

| Hard-kill signal | Why it matters | Do not disguise it as |
|---|---|---|
| No buyer owner | Nobody can decide, pay, or change behavior. | "The market is huge." |
| Weak pain | SaaS subscriptions need recurring value. | "People said it sounds useful." |
| No current alternative | No workaround can mean no urgency or budget. | "There is no competition." |
| No payment logic | Interest without budget rarely converts. | "They joined the waitlist." |
| Unreachable distribution | A good product still needs a path to buyers. | "We will post on X." |
| Impossible MVP | The first version cannot test one assumption cheaply. | "We just need to build the full platform." |
| Broken economics | Price, churn, support, or CAC cannot support the business. | "We will make it up with volume." |
| Bad founder fit | The idea needs a sales, compliance, support, or technical motion the founder cannot sustain. | "I can learn everything while building." |

Structural kill signals include no specific buyer after multiple narrowing attempts, no repeated painful workflow, no current alternative or consequence, only compliments or hypothetical interest, a market too small for recurring revenue at any realistic price, an unreachable first channel, an MVP that cannot be narrowed enough to validate cheaply, enterprise economics without enterprise trust, poor founder fit, or a market change that removed the need.
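For the economics signal, a back-of-envelope check is usually enough to see whether price, churn, and acquisition cost can coexist. The sketch below uses a common simplified model (lifetime value as monthly margin divided by monthly churn). The function name `unit_economics` and every input figure are hypothetical, and the model ignores expansion revenue, discounting, and support time, so treat the result as a sanity check, not a forecast.

```python
def unit_economics(
    price_per_month: float,
    gross_margin: float,
    monthly_churn: float,
    cac: float,
) -> dict[str, float]:
    """Rough solo-SaaS economics from four assumptions."""
    if price_per_month <= 0 or cac <= 0:
        raise ValueError("price_per_month and cac must be positive")
    if not 0 < gross_margin <= 1 or not 0 < monthly_churn <= 1:
        raise ValueError("gross_margin and monthly_churn must be in (0, 1]")

    margin_per_month = price_per_month * gross_margin
    # Simplified LTV: expected customer lifetime in months is 1 / churn.
    ltv = margin_per_month / monthly_churn
    return {
        "ltv": ltv,
        "ltv_to_cac": ltv / cac,
        "payback_months": cac / margin_per_month,
    }


# Hypothetical inputs: $30/month plan, 80% margin, 5% monthly churn, $120 CAC.
result = unit_economics(price_per_month=30, gross_margin=0.8, monthly_churn=0.05, cac=120)
for name, value in result.items():
    print(f"{name}: {value:.1f}")
```

With these made-up inputs, lifetime value is roughly 480, about four times acquisition cost, with a payback of around five months. If no realistic combination of price, churn, and CAC produces a workable ratio, that supports reading the economics as a structural problem rather than an untested one.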

A kill decision should name the broken assumption. "Kill this idea" is too vague. "Kill this thesis because solo Shopify operators do not describe the retention problem as urgent and no current workaround appears" is useful.

Kill the thesis, not the learning. A clean stop should leave behind notes about the buyer, pain, competitors, channels, and invalidated assumptions.

When to Narrow Instead of Kill

Narrow when evidence points to a real problem but the current framing is too wide, wrong, expensive, or hard to reach. This is the most common useful verdict for early SaaS ideas.

Common narrowing moves include narrowing the ICP from a broad category to one buyer context, narrowing the trigger moment, narrowing the workflow, narrowing the outcome, changing the package, changing the pricing model or value metric, changing the distribution channel, reducing MVP scope, moving from standalone platform to integration or add-on, or replacing automation with manual or concierge validation.

| Weak signal | What it might mean | Narrowing move | Retest with |
|---|---|---|---|
| Buyers like the idea but cannot name a recent event. | Pain is not urgent for this segment. | Narrow to a trigger moment with business cost. | Last-time interviews. |
| Users complain but do not pay. | Pain may be real but buyer or package is wrong. | Target buyers with existing spend or measurable cost. | Current-spend questions and paid manual offer. |
| Competitors are strong but reviews show repeated complaints. | Market exists but wedge must be specific. | Focus on one underserved workflow or segment. | Review mining plus 5-10 interviews. |
| Channel is vague. | Buyer is not reachable enough. | Pick a segment with a named community, marketplace, list, or search behavior. | First 20-user sourcing test. |
| MVP keeps expanding. | Scope is hiding uncertainty. | Cut to one workflow, one outcome, one non-goal list. | Concierge test or clickable prototype. |
| Price feels too low or too high. | Package or value metric may be wrong. | Separate package, value metric, and price range. | Pricing validation conversation. |
| Founder fit is weak. | The idea may require a different execution shape. | Choose a version that fits existing skills, network, and support capacity. | Founder-fit review and build-time estimate. |

A narrowing decision should create a new testable claim. "AI assistant for ecommerce brands" is not narrow. "Retention insight assistant for solo Shopify operators with 300-2,000 monthly orders who use Klaviyo but do not have a lifecycle marketer" is closer because it names buyer, context, current alternative, and workflow.

Narrowing is not rebranding the same vague idea. If the buyer, problem, workflow, price, channel, or MVP boundary did not change, you probably only rewrote the tagline.

A narrow idea should become easier to test. If narrowing makes the idea more complex, you have not narrowed it.

When to Keep Going

Keep going when multiple independent signals point to the same narrow opportunity. The important word is same. Interviews, competitor complaints, current spend, pricing logic, distribution access, MVP scope, and founder fit should converge on one buyer and one workflow.

| Evidence | Minimum useful threshold | Next step |
|---|---|---|
| Buyer interviews | 5-10 qualified buyers repeat the same workflow pain. | Run a manual or concierge test. |
| Current alternative | Buyers already use tools, spreadsheets, agencies, employees, or manual workarounds. | Map the gap and test the switch reason. |
| Payment logic | Buyer can explain current spend, budget owner, or expected ROI. | Test package and price range. |
| Distribution | Founder can name where the first 20 qualified users come from. | Run a channel test. |
| MVP scope | First version fits one buyer, one workflow, one outcome. | Build or simulate only that slice. |
| Founder fit | Founder can build 80 percent of the first version with existing skills and sustain support. | Commit to the narrow validation sprint. |

Strong keep signals include the same buyer type repeating the same pain, buyers already spending money or time on the problem, imperfect current alternatives, a plausible price range, a reachable first 20 users, a first version that can be built or simulated as one workflow, and a founder who can realistically build, sell, and support that version.

Keep does not mean certainty. It means the evidence is strong enough to justify the next small validation step: a manual pilot, a paid audit, a pricing test, a landing page, a concierge workflow, or a narrow MVP. The first build should still have stop criteria.

Write Stop Criteria Before You Open an AI Coding Tool

AI coding tools reduce build friction, but they increase the risk of premature commitment. A founder can create screens, auth, billing, dashboards, and onboarding before proving that anyone owns the painful workflow.

The best time to define a stop rule is before the prototype exists, because product work makes weak evidence feel personal.

Good stop criteria are specific, observable, tied to a core assumption, time-bound or test-bound, and decided before emotional investment grows. They should not depend on vanity metrics such as likes, compliments, broad waitlist signups, or unqualified social attention.

| Assumption | Stop or narrow criterion | Decision if triggered |
|---|---|---|
| Buyer pain | If fewer than 3 of 8 qualified buyers describe the same recent painful event, stop or redefine the problem. | Kill or narrow problem. |
| Willingness to pay | If no qualified buyer can name current spend, budget owner, or a paid workaround, do not build a paid SaaS yet. | Narrow buyer or kill payment thesis. |
| Distribution | If the founder cannot source 20 qualified prospects without paid ads or vague social posting, change ICP or channel. | Narrow channel or buyer. |
| Competitive wedge | If review mining shows competitors already solve the narrow pain well, choose a different wedge. | Narrow or kill wedge. |
| MVP scope | If the first version requires three or more workflows, enterprise trust, or multiple critical integrations, cut scope. | Narrow MVP or stop. |
| Founder fit | If the idea requires a sales, compliance, or support motion the founder cannot sustain, choose a different version. | Narrow execution shape or kill. |

Stop criteria can also trigger narrowing rather than killing. If 8 interviews show pain in a different buyer segment, that may be a buyer-narrowing decision. If a channel test fails for the broad buyer but works in one marketplace, that may be a distribution-narrowing decision.

Before you open an AI coding tool, write the buyer, assumption, evidence source, threshold, time box, and decision. If your idea is still fuzzy, work through the guide to validate your app idea before building with AI and the questions before vibe coding an app, so that a build prompt does not turn untested assumptions into code.
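One way to keep a stop rule honest is to write it down as a small record before any evidence comes in. The sketch below is a hypothetical structure, not a prescribed format: the `StopCriterion` class, its field names, and the sample values are illustrative, loosely based on the buyer-pain row above and a two-week time box.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StopCriterion:
    """A stop rule written down before the evidence exists."""

    buyer: str
    assumption: str
    evidence_source: str
    min_confirming: int  # threshold: fewer confirmations than this triggers the rule
    sample_size: int     # how many qualified data points the test needs
    time_box_days: int   # recorded for the founder; not enforced by this sketch
    decision_if_triggered: str

    def evaluate(self, confirming: int, completed: int) -> str:
        if confirming > completed:
            raise ValueError("confirming cannot exceed completed")
        if completed < self.sample_size:
            return "keep testing"  # not enough evidence to decide either way
        if confirming < self.min_confirming:
            return self.decision_if_triggered
        return "keep"


# Hypothetical example: "fewer than 3 of 8 qualified buyers" from the table above.
pain = StopCriterion(
    buyer="solo Shopify operators",
    assumption="retention workflow pain is recent and repeated",
    evidence_source="last-time story interviews",
    min_confirming=3,
    sample_size=8,
    time_box_days=14,
    decision_if_triggered="kill or narrow problem",
)
print(pain.evaluate(confirming=2, completed=8))  # kill or narrow problem
```

The record is frozen on purpose: changing the threshold after the interviews start would defeat the point of deciding before emotional investment grows.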

A Worked Example: Shopify Retention SaaS

Bad first version: "AI retention assistant for ecommerce brands."

That version is too broad. It has an unclear buyer, too many workflows, undefined pricing, and no clear channel. It might turn into email automation, analytics, campaign generation, segmentation, churn prediction, reporting, or consulting.

Better narrowed version: "Retention insight assistant for solo Shopify operators with 300-2,000 monthly orders who rely on Klaviyo but do not have a lifecycle marketer."

| Decision layer | Evidence | Verdict | Next action |
|---|---|---|---|
| Buyer clarity | "Ecommerce brands" is broad; solo Shopify operators with 300-2,000 monthly orders is more specific. | Narrow | Interview one store-size segment. |
| Pain urgency | Retention matters, but prove the operator feels it weekly or monthly. | Narrow | Ask about last churn, repeat-purchase, or campaign problem. |
| Current alternative | Klaviyo, Shopify analytics, spreadsheets, agencies, and manual campaign review may already exist. | Keep if confirmed | Map current workflow and complaints. |
| Payment logic | Budget may exist if the operator connects retention to revenue. | Narrow | Test paid audit or manual retention review. |
| Competitive wedge | Broad AI assistant is crowded; "explain retention gaps to non-expert operators" may be a wedge. | Narrow | Review competitor positioning and complaints. |
| Distribution | Shopify operators can be found in app store search, communities, agencies, and content. | Keep if channel test works | Source 20 qualified prospects. |
| MVP scope | Full AI assistant is too broad; manual audit plus one insight workflow is testable. | Narrow | Concierge test before app build. |
| Founder fit | Solo founder can likely test manual workflow and build narrow SaaS later. | Keep | Run a two-week validation sprint. |

Example verdict: Narrow, do not build yet.

The idea is not automatically bad. The broad version should not be built. The next test should prove buyer pain, current workflow, and willingness to pay before coding the product. The strongest first artifact may be a paid manual retention audit, not a SaaS dashboard.

The founder could interview 5-10 matching operators, inspect current retention workflows, compare current email-tool spend, study app-store and review complaints, and offer 3 paid manual audits. If operators cannot describe a recent retention problem, do not recognize missed repeat purchases as costly, or will not pay for the manual version, a polished retention app is premature.

This is where the related guides connect: define the ICP for a SaaS idea, validate SaaS pricing before launch, run SaaS competitor analysis before MVP, use the SaaS idea validation checklist, then decide whether to validate your app idea before building with AI.

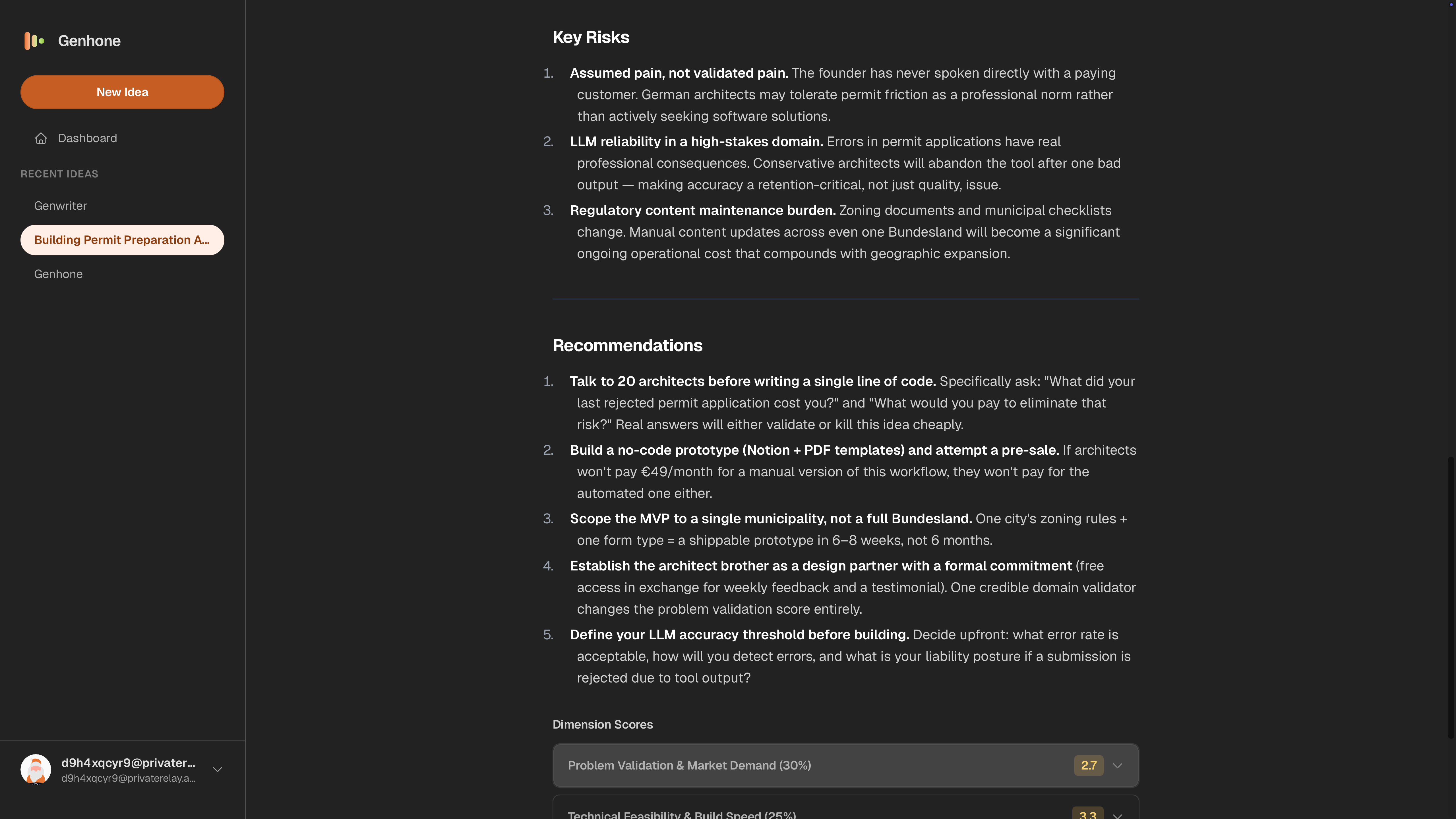

How Genhone Helps With Build, Narrow, or Stop Decisions

Genhone is not a generic idea generator or one-line roast tool. It helps founders structure the assumptions that should exist before evaluation.

Genhone turns the decision into a saved artifact. Instead of leaving your kill criteria in a chat thread or spreadsheet, you refine the idea section by section, get an evaluation across business and founder-fit criteria, and compare it against other ideas before deciding what deserves build time.

The refinement workflow covers Customer Definition, Problem Definition, Solution Mechanics, Value Proposition, Business Model, Go-to-Market Approach, Scope and Boundaries, and Solo Founder Execution. Those sections map directly to the decision layers above.

Genhone also evaluates ideas across automated criteria and founder-fit checks. The useful output is not a guarantee that the verdict is correct. It is a structured record of the assumptions, evidence, risks, and comparison points so you can decide what to kill, narrow, or keep without losing the learning.

Turn a fuzzy SaaS idea into a saved build, narrow, or stop decision before you write code.

Use a structured SaaS idea refinement workflow to preserve the decision and compare it against your other ideas.

Common Mistakes When Deciding Whether to Kill an Idea

The common mistakes are predictable.

One negative conversation is not enough to kill a coherent idea. One positive conversation is not enough to build. No competition is not validation. A waitlist is not willingness to pay unless the signup comes from qualified buyers and leads to payment-related behavior.

Do not average green signals with fatal red signals. A founder can have a good-looking prototype, a clear feature set, and a few encouraging comments while still missing the buyer owner or payment logic.

Narrowing only the tagline is another trap. If the buyer is still "SMBs," the workflow is still broad, and the MVP still needs six integrations, the idea has not narrowed.

Do not pivot because building is hard. Do not persevere because the prototype looks polished. Product momentum is not market evidence.

AI-generated reports can organize assumptions and summarize review sources such as G2, Capterra, Product Hunt, the Shopify App Store, the Chrome Web Store, Reddit communities, Indie Hackers, Meta Ad Library, and Google Trends. They cannot replace direct buyer evidence, payment behavior, switching behavior, or founder-fit judgment.

Keeping too many ideas alive is also expensive. Give each serious idea a decision date, a written threshold, and a saved artifact.

The mistake is not being wrong. The mistake is refusing to name which assumption is wrong.

FAQ

When should you kill a startup idea?

Kill a startup idea when repeated evidence shows that the core thesis is broken: no specific buyer, weak pain, no current alternative or payment behavior, unreachable distribution, impossible MVP scope, broken economics, or poor founder fit. Kill the current thesis, document the learning, and move to a stronger or narrower idea.

What is the difference between killing and pivoting a startup idea?

Killing stops the current thesis because the foundation is broken. Pivoting changes one important part of the idea, such as buyer, problem, solution, pricing, channel, or scope, while preserving evidence that something real remains. For pre-build SaaS ideas, "narrow" is often the more precise word than pivot.

How do I know if I should narrow a SaaS idea instead of killing it?

Narrow when one signal is real but the framing is wrong. Examples: pain exists but the buyer is too broad, buyers complain but the package is wrong, competitors prove demand but the wedge is unclear, or the MVP is too large. Narrowing should create a smaller testable claim.

Should I kill an idea if there are too many competitors?

Not automatically. Competitors can prove demand. Kill only if competitors solve the narrow buyer's problem well enough, the wedge is unclear, switching is unlikely, or the market is too expensive to enter. If reviews show repeated complaints from a specific buyer, narrow around that gap.

Should I kill an idea if there are no competitors?

Not on that fact alone. An absence of competitors can mean a hidden opportunity, but it can also mean no budget, weak pain, poor timing, or a non-software workaround. Look for substitutes, manual workflows, current spend, and buyer complaints before treating novelty as validation.

How many customer interviews are enough before killing an idea?

For a narrow pre-build SaaS idea, five to ten qualified interviews are usually enough to reveal a directional pattern. If the same pain and workaround do not repeat across them, that absence is itself a signal. The number matters less than interview quality, buyer specificity, and whether behavior supports the claim.

What evidence is stronger than someone saying they like my idea?

Stronger evidence includes current spend, manual workarounds, repeated painful events, competitor complaints from the same ICP, a paid pilot, preorder, serious budget discussion, or a buyer taking a payment-related action. Compliments and generic waitlist signups are weak.

How do I write kill criteria before building an MVP?

Write kill criteria as specific thresholds tied to assumptions. Example: "If fewer than 3 of 8 qualified Shopify operators describe the same retention workflow pain, we will not build this version." Define the buyer, evidence source, threshold, time box, and decision before coding starts.

Can AI help decide whether to kill a startup idea?

AI can organize assumptions, summarize research, generate interview questions, and compare signals. It cannot prove buyer urgency, willingness to pay, switching behavior, or founder fit by itself. Treat AI output as a hypothesis until direct evidence supports it.
