ChatGPT Prompts Are Not a Startup Validation Process

How to use ChatGPT to structure your startup assumptions without mistaking AI answers for evidence.

ChatGPT can help with startup idea validation by structuring assumptions, drafting interview questions, summarizing desk research, and identifying gaps. It cannot validate demand by itself because validation requires evidence from real buyer behavior: conversations, replies, waitlists, usage, pricing signals, payments, or clear objections. Treat ChatGPT as a research assistant, not the source of the go/no-go decision.

If you are turning to ChatGPT for startup idea validation, the trap is asking the model for a verdict. ChatGPT can make an idea sound sharper in minutes. It cannot tell you whether a reachable buyer will change behavior, switch tools, join a qualified waitlist, or pay.

Building with LLM-powered workflows early made one lesson clear: ChatGPT can make startup thinking faster, but it does not turn unsupported assumptions into validation evidence.

Quick Answer: Can ChatGPT Validate a Startup Idea?

ChatGPT can assist startup validation. It cannot validate demand alone.

The difference is evidence. AI-generated confidence is a fluent explanation of why an idea might work. Validation evidence is something that happened outside the chat: a buyer described the last time the workflow broke, replied to targeted outreach, joined a qualified waitlist from relevant traffic, used a manual prototype, discussed budget, paid a deposit, or gave a clear objection.

A strong ChatGPT response can still be based on broad assumptions, incomplete context, stale market knowledge, hallucinated facts, or a weak prompt. Longer prompts and newer models improve structure, but they do not create buyer urgency.

Use ChatGPT for the parts it is good at: mapping assumptions, generating better discovery questions, organizing desk research, summarizing source-labeled notes, and exposing gaps. Then use real buyer behavior to decide whether to build, narrow, or stop.

For the broader decision framework, use the guide to validating a SaaS idea before building.

What ChatGPT Is Good At During Startup Validation

ChatGPT is most useful before and after evidence collection. Before evidence, it helps turn a vague idea into assumptions you can test. After evidence, it helps organize notes and spot contradictions. In the middle, the founder has to leave the chat.

Give ChatGPT narrow context: the buyer, workflow, current alternative, pricing hypothesis, channel, and what you already know. Then verify every market, competitor, pricing, or demand claim against current sources and real prospects. Strong prompt craft improves the shape of the thinking. It does not prove the thinking is true.
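One way to keep that verification honest is to track each claim from a ChatGPT answer until a source you actually checked backs it. The sketch below is a minimal Python example; `Claim`, `unverified`, and the four claim types are hypothetical names for illustration, not a ChatGPT feature.

```python
from dataclasses import dataclass, field

# Claim types named in the verification advice above.
CLAIM_TYPES = {"market", "competitor", "pricing", "demand"}


@dataclass
class Claim:
    """One statement from a ChatGPT answer that still needs outside proof."""
    text: str
    claim_type: str
    # Sources the founder actually checked: a review page, a pricing page, interview notes.
    sources: list[str] = field(default_factory=list)

    def __post_init__(self) -> None:
        if self.claim_type not in CLAIM_TYPES:
            raise ValueError(f"unknown claim type: {self.claim_type}")


def unverified(claims: list[Claim]) -> list[Claim]:
    """Return every claim that no checked source backs yet."""
    return [c for c in claims if not c.sources]


claims = [
    Claim("Competitors ignore small B2B SaaS teams", "competitor"),
    Claim("Support leads review themes weekly", "demand",
          ["interview notes: support lead #1"]),
]
for claim in unverified(claims):
    print(f"{claim.claim_type}: {claim.text}")
```

Anything `unverified` returns is still a hypothesis, however confidently it was phrased in the chat.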

| Use ChatGPT for | Useful output | How to verify it |
|---|---|---|
| Assumption mapping | Buyer, pain, alternative, pricing, channel, and scope assumptions. | Compare against interviews, communities, competitor pages, reviews, and search data. |
| Interview planning | Better discovery questions and follow-up probes. | Use with a specific buyer and capture last-time stories, not opinions. |
| Desk research organization | Lists of competitors, categories, objections, and terms to investigate. | Check primary sources, current search results, product pages, communities, and review sites. |
| ICP hypotheses | Possible first-buyer segments and trigger moments. | Validate reachability and pain consistency with real prospects. |
| Pricing hypotheses | Possible value metrics, packages, and willingness-to-pay questions. | Test through current spend, paid pilots, preorders, or pricing conversations. |
| Synthesis | Cleaner summaries of notes, contradictions, and unresolved questions. | Preserve source notes and separate facts from interpretation. |

If the founder is about to turn the idea into an AI build, start with validating an app idea before building with AI.

What ChatGPT Cannot Validate for You

ChatGPT can produce plausible strategy language without proof. That is useful for hypothesis generation, but dangerous when the output sounds like a decision.

Startup validation is about observed behavior and decision thresholds. A prompt can create a hypothesis. It cannot create buyer urgency, budget ownership, switching pain, or a reachable channel. A better model or longer prompt still cannot replace missing external evidence.

Build More Better makes the same practical distinction in its guide to ChatGPT startup ideas: ChatGPT can sharpen an idea, but demand needs behavior outside the chat.

| ChatGPT answer | Why it feels convincing | What is still unproven | Evidence needed |
|---|---|---|---|
| "This solves a real problem." | The pain sounds plausible and common. | Whether a specific buyer feels it urgently. | Focused buyer conversations and last-time workflow stories. |
| "There is market demand." | ChatGPT can name trends, categories, and adjacent products. | Whether your wedge has reachable demand. | Search data, community signals, competitor review mining, and channel tests. |
| "People would pay for this." | The value proposition sounds rational. | Budget ownership, price tolerance, and switching behavior. | Current spend, pricing conversations, deposits, paid pilots, or fake-door tests. |
| "There are competitors, but you can differentiate." | The positioning seems neatly framed. | Whether buyers care about that difference. | Competitor analysis plus buyer objections and switching triggers. |
| "Build an MVP with these features." | The plan feels actionable. | Whether those features test the riskiest assumption. | MVP boundary tied to one buyer, one workflow, and one validation goal. |
| "This is a strong idea." | The answer gives a verdict. | Whether the verdict rests on verified evidence. | A scored decision artifact with cited evidence and stop criteria. |

The warning is not "never use ChatGPT." The warning is narrower: do not treat a confident response as market evidence.

The ChatGPT-Assisted Startup Validation Process

Do not ask ChatGPT to validate the idea. Ask it to help you build a validation plan, then judge the idea from evidence gathered outside the chat.

YC's startup advice emphasizes launching, talking to users, and iterating from customer feedback. Paul Graham's "Do Things That Don't Scale" makes the same early-stage point from another angle: founders often need to recruit and learn from users manually before a process can scale.

Use ChatGPT to make that work sharper, not to skip it.

| Step | What to ask ChatGPT | What the founder must verify | Output |
|---|---|---|---|
| 1. State the raw idea | Ask ChatGPT to restate the idea as buyer, painful workflow, current alternative, and promised outcome. | Whether the buyer and workflow are specific enough to investigate. | Clean assumption map. |
| 2. Identify risky assumptions | Ask which assumptions would kill the idea if false. | Whether ChatGPT missed pricing, channel, trust, scope, or founder-fit risks. | Ranked risk list. |
| 3. Generate evidence questions | Ask for interview questions, desk-research queries, and behavioral tests for each risk. | Whether questions ask for past behavior instead of opinions. | Validation task list. |
| 4. Collect evidence outside ChatGPT | Use ChatGPT only to organize outreach scripts or research templates. | Buyer conversations, replies, current alternatives, pricing signals, channel response, competitor evidence. | Source notes and evidence snippets. |
| 5. Synthesize with source labels | Paste notes into ChatGPT and ask it to separate facts, quotes, patterns, objections, and assumptions. | Whether the synthesis preserves nuance and does not overstate small samples. | Evidence summary. |
| 6. Decide build, narrow, or stop | Ask ChatGPT to argue each verdict, then compare against written thresholds. | Founder judgment, evidence strength, founder fit, and opportunity cost against other ideas. | Decision artifact. |

Use source labels when you bring notes back into ChatGPT: interview, community, competitor, pricing, channel, payment, and usage. Then ask the model to separate evidence from interpretation. "Three support leads described this workflow last week" is evidence. "This seems like a big market" is interpretation.
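Labeling can be done mechanically before anything is pasted into the chat. This is a minimal sketch, assuming notes are kept as `(label, text)` pairs; the helper names and the extra `guess` label are hypothetical additions for illustration.

```python
# Source labels from the paragraph above. "guess" is an added, hypothetical label
# for anything the founder believes but did not observe.
EVIDENCE_LABELS = {"interview", "community", "competitor", "pricing",
                   "channel", "payment", "usage"}
ALL_LABELS = EVIDENCE_LABELS | {"guess"}


def format_notes(notes: list[tuple[str, str]]) -> str:
    """Render (label, text) pairs as labeled lines ready to paste into ChatGPT."""
    lines = []
    for label, text in notes:
        if label not in ALL_LABELS:
            raise ValueError(f"unknown source label: {label!r}")
        lines.append(f"[{label}] {text}")
    return "\n".join(lines)


def split_evidence(notes: list[tuple[str, str]]) -> tuple[list[str], list[str]]:
    """Separate observed evidence from interpretation before asking for a synthesis."""
    evidence = [text for label, text in notes if label in EVIDENCE_LABELS]
    # Anything not in EVIDENCE_LABELS, including "guess", counts as interpretation.
    interpretation = [text for label, text in notes if label not in EVIDENCE_LABELS]
    return evidence, interpretation


notes = [
    ("interview", "Three support leads described this workflow last week"),
    ("guess", "This seems like a big market"),
]
print(format_notes(notes))
# [interview] Three support leads described this workflow last week
# [guess] This seems like a big market
```

Rejecting unknown labels is a deliberate choice: an unlabeled note is exactly the kind of input the model will blend into a confident story.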

The output of each step should be an artifact, not only a chat transcript. Keep the assumption map, risk list, task list, source notes, evidence summary, and decision artifact somewhere you can compare later. A narrow next test is often better than a build, especially when the evidence points to a smaller buyer, simpler workflow, or different price.

If you want a shorter worksheet, use the SaaS idea validation checklist.

Turn a ChatGPT brainstorm into a structured startup validation artifact before you build.

Prompt Pack: Use These to Find Gaps, Not to Get a Verdict

These prompts do not validate anything. They help you find the next evidence task.

Use them to force unknowns, design tests, and challenge weak evidence. Do not paste confidential customer details unless you have permission and a clear reason to process them. When you paste notes, label the source so ChatGPT can distinguish interviews from guesses.

Assumption map:

Act as a skeptical startup validation partner. Restate this idea as: first buyer, painful recurring workflow, trigger moment, current alternative, promised outcome, pricing assumption, first distribution path, MVP boundary, and stop criteria. Mark any vague answer as "unknown" instead of filling it in.

Idea: [paste idea]

Use this when the idea is still fuzzy. The most useful output is not a polished paragraph. It is a list of unknowns.
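If you copy ChatGPT's answer into a structured record, a short check can pull the unknowns out for you. A minimal sketch, assuming the map is a Python dict; the snake_case field names are hypothetical versions of the nine fields in the prompt above.

```python
# The nine fields the assumption-map prompt asks for.
FIELDS = [
    "first_buyer", "painful_recurring_workflow", "trigger_moment",
    "current_alternative", "promised_outcome", "pricing_assumption",
    "first_distribution_path", "mvp_boundary", "stop_criteria",
]


def list_unknowns(assumption_map: dict[str, str]) -> list[str]:
    """Return fields that are missing, blank, or explicitly marked 'unknown'."""
    unknowns = []
    for name in FIELDS:
        value = assumption_map.get(name, "").strip()
        if not value or value.lower() == "unknown":
            unknowns.append(name)
    return unknowns


draft = {
    "first_buyer": "Support leads at 5-30 person B2B SaaS companies",
    "painful_recurring_workflow": "Weekly review of support themes",
    "current_alternative": "Helpdesk tags and spreadsheets",
    "pricing_assumption": "unknown",
}
print(list_unknowns(draft))
```

The returned list is the backlog for the next prompt: each unknown becomes a candidate risk to rank.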

Riskiest assumptions:

Given the assumption map below, list the 7 assumptions most likely to kill this startup idea if false. For each one, explain why it matters, what weak evidence would look like, what strong evidence would look like, and the cheapest test to run this week.

Assumption map: [paste map]

Use this before interviews, landing pages, or prototypes. The goal is to choose the test that can change your mind fastest.

Interview questions:

Turn these risks into customer discovery questions. Use questions about recent behavior, current workarounds, budget, switching, and urgency. Avoid leading questions and avoid asking whether the person likes the idea.

Risks: [paste risks]

Target buyer: [specific buyer]

Use this to avoid opinion questions. "Would you use this?" is weak. "Walk me through the last time this happened" is stronger.

Evidence synthesis:

Summarize these validation notes into four sections: confirmed facts, direct quotes or objections, unresolved assumptions, and recommended next test. Do not make a build recommendation unless the notes include buyer behavior, pricing evidence, or channel response.

Notes: [paste notes with source labels]

Use this after collecting evidence. Require ChatGPT to preserve contradictions instead of smoothing them into a confident story.
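The build-recommendation rule in this prompt can also be checked before the prompt is sent. A minimal sketch, assuming the `(label, text)` note format; which labels count as buyer behavior, pricing evidence, or channel response is an assumption to adjust, and the sample notes are invented placeholders.

```python
# Labels treated as buyer behavior, pricing evidence, or channel response.
# This mapping onto source labels is an assumption; adjust it to your own labels.
DECISION_LABELS = {"pricing", "channel", "payment", "usage"}

BASE_PROMPT = (
    "Summarize these validation notes into four sections: confirmed facts, "
    "direct quotes or objections, unresolved assumptions, and recommended next test. "
    "Do not make a build recommendation unless the notes include buyer behavior, "
    "pricing evidence, or channel response."
)


def has_decision_evidence(notes: list[tuple[str, str]]) -> bool:
    """True if at least one note carries a decision-grade source label."""
    return any(label in DECISION_LABELS for label, _ in notes)


def synthesis_prompt(notes: list[tuple[str, str]]) -> str:
    """Build the synthesis prompt, stating the gate explicitly when it is not met."""
    prompt = BASE_PROMPT
    if not has_decision_evidence(notes):
        prompt += ("\nThese notes contain no buyer behavior, pricing evidence, "
                   "or channel response, so do not recommend building.")
    body = "\n".join(f"[{label}] {text}" for label, text in notes)
    return f"{prompt}\n\nNotes:\n{body}"


interviews_only = [
    ("interview", "Lead exports tickets to a spreadsheet every Friday"),
    ("competitor", "Helpdesk vendor offers a basic tags report"),
]
print(synthesis_prompt(interviews_only))
```

Stating the gate in the prompt itself, rather than trusting the model to notice missing evidence, makes a "build" answer from interview-only notes an obvious contradiction.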

Decision challenge:

Argue the case for Build, Narrow, and Stop using only the evidence below. For each verdict, cite the strongest supporting evidence, the biggest missing evidence, and the next action. If evidence is thin, say so plainly.

Evidence: [paste evidence summary]

Use this only after external evidence exists. ChatGPT can help challenge your interpretation. It should not decide without buyer behavior, pricing evidence, or channel response.

Evidence Ladder: From ChatGPT Guess to Startup Signal

On its own, ChatGPT output sits at the speculation level of this ladder. It is useful higher up only when it summarizes source-labeled evidence collected outside the chat. Desk research is useful, especially when it draws on current public sources such as search results, Google Trends, Reddit, Indie Hackers, Hacker News, competitor pages, app stores, and review sites. But desk research is not a buyer commitment.

| Evidence level | Examples | What it supports | What it cannot prove | Next action |
|---|---|---|---|---|
| AI speculation | ChatGPT brainstorm, AI score, generated market summary, friend enthusiasm. | Idea capture, assumption mapping, hypothesis generation. | Demand, willingness to pay, switching, retention, or channel access. | Write the assumption map and choose one risk to test. |
| Desk research | Search results, Google Trends, competitor pages, Reddit threads, Indie Hackers, Hacker News, reviews. | Language, alternatives, possible demand pockets, competitor gaps. | That your chosen buyer will pay or switch. | Pick one buyer and collect direct evidence. |
| Conversation evidence | 5-10 focused interviews, last-time stories, workflow walkthroughs, objections. | Pain specificity, current behavior, urgency, buyer language. | Payment intent unless money, budget, or procurement enters the conversation. | Narrow buyer, workflow, and MVP boundary. |
| Behavioral evidence | Qualified replies, waitlist from target traffic, repeated workaround, manual service interest, channel response. | Seriousness, reachability, and next-test direction. | Repeatable acquisition or long-term retention. | Test pricing or build the smallest workflow slice. |
| Payment evidence | Deposit, paid pilot, preorder, fake-door checkout, signed LOI with budget owner. | Willingness to pay and urgency. | Product-market fit or scalable growth. | Build or manually deliver the narrow solution. |
| Usage evidence | Repeated use, retention, users requesting same outcome, paid use after trial. | Solution direction and retention hypotheses. | Full business viability without pricing and channel evidence. | Continue, narrow, price, or stop. |

Payment evidence is strong, but not every early idea needs payment before a tiny test. The cheaper the build, the smaller the validation test can be. The evidence still cannot be imaginary.
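The ladder can be encoded so the next action follows from the strongest evidence you actually hold. A minimal sketch using Python's `IntEnum`; it treats the table's row order as a strict ranking, which is a simplification (usage without payment, for example, does not settle pricing).

```python
from enum import IntEnum


class Evidence(IntEnum):
    """Ladder levels from the table above, in row order (treated as weakest to strongest)."""
    AI_SPECULATION = 1
    DESK_RESEARCH = 2
    CONVERSATION = 3
    BEHAVIORAL = 4
    PAYMENT = 5
    USAGE = 6


NEXT_ACTION = {
    Evidence.AI_SPECULATION: "Write the assumption map and choose one risk to test.",
    Evidence.DESK_RESEARCH: "Pick one buyer and collect direct evidence.",
    Evidence.CONVERSATION: "Narrow buyer, workflow, and MVP boundary.",
    Evidence.BEHAVIORAL: "Test pricing or build the smallest workflow slice.",
    Evidence.PAYMENT: "Build or manually deliver the narrow solution.",
    Evidence.USAGE: "Continue, narrow, price, or stop.",
}


def next_action(observed: list[Evidence]) -> str:
    """Return the ladder's next action for the strongest evidence observed so far."""
    # With no observed evidence, everything is still AI speculation.
    strongest = max(observed, default=Evidence.AI_SPECULATION)
    return NEXT_ACTION[strongest]


print(next_action([Evidence.DESK_RESEARCH, Evidence.CONVERSATION]))
# Narrow buyer, workflow, and MVP boundary.
```

Note that a ChatGPT summary never adds a level by itself; only the observed items you pass in move the result.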

If the buyer is vague, define the first buyer for a SaaS idea. If payment logic is weak, validate SaaS pricing before launch. If the alternative landscape is fuzzy, do competitor analysis before building an MVP.

Example: A ChatGPT Answer Becomes a Validation Plan

Rough idea:

A SaaS that uses AI to summarize weekly customer support themes for small B2B SaaS teams.

A ChatGPT-style answer would probably be encouraging. It might say support teams need better visibility, AI can save time, competitors leave gaps, and an MVP dashboard could summarize themes from Zendesk or Intercom.

That is plausible. It is not enough to build.

| Field | ChatGPT-style first answer | Stronger validation plan |
|---|---|---|
| Buyer | "Customer support teams." | Support leads or solo founders at 5-30 person B2B SaaS companies handling 200-2,000 monthly tickets without a dedicated insights analyst. |
| Pain | "They need to understand customer issues." | Weekly support themes get buried across Intercom/Zendesk conversations, making product prioritization and churn-risk patterns slow to spot. |
| Current alternative | "Manual review." | Support tags, helpdesk reports, spreadsheets, Slack summaries, product calls, and ad-hoc exports. |
| Pricing logic | "They would pay to save time." | Check whether teams already pay for helpdesk analytics, AI support tools, or product feedback tools; test $49-$199/month depending on ticket volume and saved ops time. |
| First evidence test | "Build an MVP dashboard." | Interview 8 support leads, ask for last week's reporting workflow, request anonymized export examples, and run a manual summary for 2 teams before coding. |
| MVP boundary | "AI dashboard with integrations and charts." | One workflow: upload CSV export, generate weekly top themes, quote examples, and suggested product follow-ups. No live integrations or auto-actions yet. |
| Stop criteria | None. | Stop or narrow if teams already trust existing helpdesk reports, cannot share data, do not review themes weekly, or will not pay for insight summaries. |
| Verdict | "Promising idea." | Narrow: plausible pain, but buyer urgency, data-access friction, and pricing must be validated before building. |

ChatGPT helps by turning the rough idea into a clearer map. The founder still needs evidence from support leads, current workflows, pricing conversations, and willingness to share data.

The next action is not implementation. Do not build live helpdesk integrations, admin dashboards, account roles, automated product-roadmap scoring, or advanced analytics yet. First, run the manual summary for two teams and learn whether the output changes a real weekly decision.

If the answer is yes, build the smallest workflow slice. If the answer is mixed, narrow the buyer, ticket volume, helpdesk source, or reporting use case.
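The example's stop criteria and the two-team manual test can be written down as explicit checks before the first interview. A minimal sketch; `TeamResult`, its fields, and the verdict strings are hypothetical, and the rule only restates the two outcomes above plus a stop-or-narrow fallback drawn from the stop criteria.

```python
from dataclasses import dataclass


@dataclass
class TeamResult:
    """What one support team showed in interviews and the manual summary test."""
    name: str
    trusts_existing_reports: bool
    can_share_data: bool
    reviews_themes_weekly: bool
    would_pay_for_summaries: bool
    summary_changed_decision: bool  # Did the manual summary change a real weekly decision?


def stop_signals(team: TeamResult) -> list[str]:
    """Return the stop criteria from the example table that this team triggers."""
    signals = []
    if team.trusts_existing_reports:
        signals.append("already trusts existing helpdesk reports")
    if not team.can_share_data:
        signals.append("cannot share data")
    if not team.reviews_themes_weekly:
        signals.append("does not review themes weekly")
    if not team.would_pay_for_summaries:
        signals.append("will not pay for insight summaries")
    return signals


def verdict(teams: list[TeamResult]) -> str:
    if not teams:
        return "run the manual summary first"
    changed = [t.summary_changed_decision for t in teams]
    if all(changed) and not any(stop_signals(t) for t in teams):
        return "build the smallest workflow slice"
    if any(changed):
        return "narrow buyer, ticket volume, helpdesk source, or reporting use case"
    return "stop or narrow"


team_a = TeamResult("Team A", False, True, True, True, True)
team_b = TeamResult("Team B", False, True, True, False, False)
print(stop_signals(team_b), verdict([team_a, team_b]))
```

Writing the criteria as booleans forces each one to be answered by a team, not by the model.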

Build, Narrow, or Stop After ChatGPT

A confident ChatGPT answer should never override weak evidence. The useful decision is build, narrow, or stop.

"Narrow" is often the best early outcome. It means part of the signal is real, but the buyer, workflow, channel, price, trust barrier, or MVP boundary needs to be sharper before code.

| Verdict | Use when | What to do next |
|---|---|---|
| Build | ChatGPT helped structure the idea, and external evidence supports the buyer, pain, current alternative, payment logic, first channel, MVP boundary, and founder fit. | Build only the narrow slice that tests the riskiest remaining assumption. |
| Narrow | ChatGPT produced a plausible plan, but evidence is thin, mixed, broad, or concentrated in one weak area. | Rewrite one part of the assumption map and run one focused evidence test. |
| Stop | The idea depends mostly on AI speculation, weak pain, unreachable buyers, no pricing logic, good-enough alternatives, or poor founder fit. | Save the artifact, keep the learning, and compare against a stronger idea. |

Build does not mean "build the imagined product." It means build the smallest test that can reduce the riskiest remaining uncertainty.
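Writing the thresholds down as a rule makes it harder for a persuasive answer to move them. The sketch below is one hypothetical encoding of the table: the area names follow the Build row, while the four status values and the majority-speculation cutoff are placeholder assumptions to replace with your own written thresholds.

```python
from collections import Counter

# Areas the Build row says external evidence must support.
AREAS = ["buyer", "pain", "current_alternative", "payment_logic",
         "first_channel", "mvp_boundary", "founder_fit"]
# Hypothetical status vocabulary: "failed" means evidence contradicts the area.
STATUSES = {"supported", "thin", "speculation", "failed"}


def decide(status: dict[str, str]) -> str:
    """Turn per-area evidence status into Build, Narrow, or Stop.

    The cutoffs below are placeholders; write your own before collecting evidence.
    """
    for area, value in status.items():
        if value not in STATUSES:
            raise ValueError(f"{area}: unknown status {value!r}")
    # An area with no recorded evidence counts as AI speculation.
    counts = Counter(status.get(area, "speculation") for area in AREAS)
    if counts["failed"] or counts["speculation"] > len(AREAS) / 2:
        return "Stop"
    if counts["supported"] == len(AREAS):
        return "Build"
    return "Narrow"


all_supported = {area: "supported" for area in AREAS}
print(decide({**all_supported, "payment_logic": "thin"}))  # Narrow
```

Because missing areas default to speculation, an idea you have only discussed with ChatGPT lands on Stop rather than on an optimistic Narrow.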

Idea comparison matters because ChatGPT makes many ideas sound plausible. A saved artifact lets you compare evidence quality instead of comparing how exciting two prompts felt.

If the evidence is mixed, use the guide on when to kill, narrow, or keep a startup idea.


How Genhone Fits After the ChatGPT Brainstorm

Genhone is for the moment after a ChatGPT session creates more ideas, more uncertainty, or a plausible but unverified verdict. It helps solo founders move from unstructured brainstorming into a structured idea validation workflow and guided SaaS idea refinement.

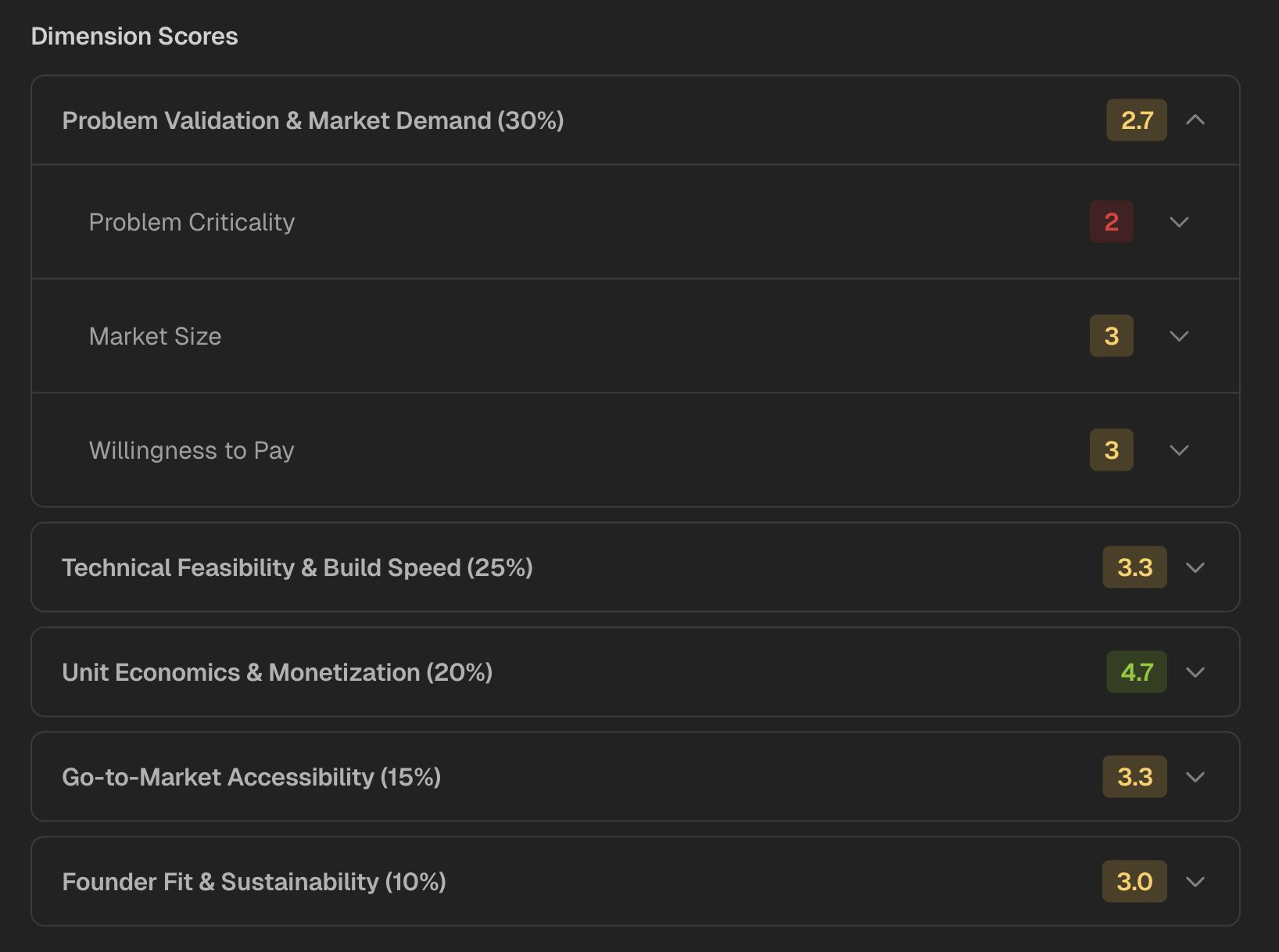

Unlike a one-off prompt, Genhone enforces 12 refinement sections so uncomfortable questions do not get skipped: customer, problem, business model, go-to-market, technical foundation, founder fit, and more. It evaluates ideas across 18 criteria, including automated criteria from the idea content and founder-fit checks gathered through a separate chat.

The goal is not to prove a startup will work. Genhone saves scores and criteria-level reasoning as persistent artifacts so founders can compare ideas side by side, spot weak assumptions, and decide which idea deserves the next validation test.

If you are already close to opening an AI coding tool, answer the questions before vibe coding an app and review the broader guide to vibe coding startup validation. For the fuller AI-build preparation process, use the guide to validate your app idea before building with AI.

FAQ

Can ChatGPT validate a startup idea?

ChatGPT can help structure assumptions, draft research plans, summarize notes, and identify gaps. It cannot validate demand by itself because validation requires real buyer behavior, pricing signals, channel response, usage, payment, or objections.

What is the best ChatGPT prompt for startup idea validation?

The best prompt asks ChatGPT to map assumptions, mark unknowns, identify risky assumptions, and propose evidence tests. It should not ask ChatGPT for a final verdict without external evidence.

Is ChatGPT better than a startup idea validation tool?

ChatGPT is flexible for brainstorming and synthesis, but it does not enforce a process, save comparable artifacts, or verify evidence by itself. A structured validation workflow or tool is better when the founder needs repeatable decisions across multiple ideas.

What evidence do I need beyond ChatGPT?

Useful evidence includes buyer interviews, current-workflow examples, competitor and review research, qualified replies, waitlists from target traffic, pricing conversations, deposits, paid pilots, and early usage behavior.

Can I use ChatGPT instead of talking to customers?

No. ChatGPT can prepare better customer conversations, but it cannot replace them. Customer conversations reveal current behavior, urgency, objections, budget context, and buyer language that a model cannot prove from the prompt alone.

Should I build an MVP after ChatGPT says the idea is strong?

Only if external evidence supports the riskiest assumptions and the MVP is narrow enough to test one business question. If evidence is thin, narrow the idea or run a smaller validation test first.

How do I avoid hallucinated startup validation from ChatGPT?

Force ChatGPT to label unknowns, cite source notes, separate facts from assumptions, and avoid build recommendations unless the input includes buyer behavior, pricing evidence, or channel response. Verify market, competitor, and pricing claims against current sources.