Vibe Coding Your Startup? Validate the Business First

A business-first validation artifact for founders using Cursor, Lovable, Bolt, v0, Replit, Claude Code, Codex, or similar AI coding tools.

Vibe coding startup validation means proving the business case before using AI to build: define a specific buyer, painful recurring workflow, current alternatives, willingness to pay, first distribution path, MVP boundary, founder fit, and stop criteria. The goal is a build, narrow, or stop decision before the PRD, product spec, or first AI coding prompt.

After shipping multiple SaaS and other software products and adopting LLM-powered workflows early, I have learned that the first AI build is usually cheaper than the untested assumptions behind it. AI makes product surface area easier to create; it does not make buyer, pricing, distribution, or founder-fit mistakes cheaper.

Quick Answer: Vibe Coding Startup Validation Means Business Before Build

Vibe coding startup validation is the decision work you do before a PRD, first build prompt, generated code, or weekend MVP. It checks whether a reachable buyer has a painful recurring workflow, a reason to pay or switch, a plausible path to discovery, and a narrow enough first product to test the core business assumption.

A working app validates implementation. It does not prove buyer urgency, willingness to pay, distribution access, or founder fit. That distinction matters when AI coding tools can turn a vague idea into polished screens, database tables, integrations, and deployment tasks before the startup thesis is clear.

The useful output is not a confidence score. It is one of three verdicts:

| Verdict | Meaning |
|---|---|
| Build | The evidence supports a narrow test of one core business assumption. |
| Narrow | Some signal exists, but buyer, pain, pricing, distribution, trust, or scope is still fuzzy. |
| Stop | The current thesis is too weak, broad, unreachable, expensive, or mismatched for the founder. |

This page is about business readiness. It is not a prompt-writing guide, deployment checklist, security review, or critique of AI-generated code.

If you want the shorter pre-prompt checklist, start with the questions to answer before vibe coding an app.

Why Fast AI Builds Make Business Validation More Important

AI coding tools compress the distance between idea and product surface area. A founder can now create a dashboard, auth flow, landing page, onboarding path, and first integration before the buyer has been defined clearly.

That speed is useful. It also creates a trap: visual progress can make weak assumptions feel more real than they are. Domain purchases, tool subscriptions, templates, hosting, API usage, integrations, support burden, and maintenance still create sunk cost. The risk moves from "Can I build it?" to "Is this the right thing to build for a reachable buyer?"

The market context makes that question sharper. In a May 1, 2026 Business Insider interview, Antler Asia's Jussi Salovaara described new vibe-coding startups as a crowded category and emphasized domain expertise as a differentiator. Treat that as context, not methodology: the point is not that founders should stop using AI tools. The point is that fast building raises the bar for business clarity.

If the app is meant to become a SaaS business, use the broader framework for validating a SaaS idea before building.

The Business-First Validation Artifact

A business-first validation artifact is the short decision record you complete before a PRD or AI build prompt. It captures the buyer, painful workflow, current alternatives, pricing logic, distribution path, MVP boundary, founder fit, evidence level, and stop criteria so the first build tests a business assumption instead of a vague product idea.

It is not a full business plan. It is not a guarantee that the startup will work. It is a practical artifact that gives you enough clarity to choose the next evidence-gathering step.

Validation should mean evidence plus decision, not research alone.

| Artifact field | Decision it answers | Weak answer | Stronger answer | Evidence standard before building |
|---|---|---|---|---|
| First buyer | Who owns the pain and can say yes? | "Founders," "creators," or "small businesses." | A role, context, and trigger moment; user and buyer separated if needed. | Named reachable prospects, 5-10 focused conversations, or clear community/search evidence from one buyer type. |
| Painful workflow | What repeats often enough to support a business? | "It would be useful." | A recurring workflow tied to time, money, risk, revenue, compliance, or stress. | Last-time stories, workflow walkthroughs, screenshots, or repeated complaints from the target buyer. |
| Current alternative | What do they use or tolerate today? | "Nothing exists." | Software, spreadsheets, manual work, agencies, scripts, employees, or deliberate inaction with a reason. | Competitor/review scan, buyer interviews, or observed current workflow. |
| Payment or switching logic | Why would they pay or switch? | "It saves time." | Current spend, budget owner, paid pilot, preorder, avoided cost, revenue upside, or switching trigger. | Pricing conversation, current spend proof, fake-door checkout, paid manual offer, or serious procurement signal. |
| First distribution path | Where will the first 20 users come from? | "Product Hunt," "social media," or "ads later." | A reachable list, search query, community, marketplace, partner, founder network, or outbound segment. | A real first-user sourcing plan and at least one tested channel signal. |
| MVP boundary | What should the first build test? | Full dashboard, AI chat, integrations, analytics, and admin features. | One buyer, one workflow, one outcome, with explicit non-goals. | Scope boundary plus evidence that the slice tests the riskiest assumption. |
| Founder fit | Why are you suited to test this wedge? | "I can vibe code it." | Domain access, lived pain, distribution edge, technical edge, or unusual learning speed. | Honest founder-fit note tied to the buyer, channel, or workflow. |
| Stop criteria | What would make you stop or narrow? | No written rule. | Specific invalidation conditions for buyer, pain, pricing, channel, scope, or founder fit. | Written thresholds before the prototype exists. |

The artifact should be short enough to guide the first PRD or prompt. If it becomes long, the idea is probably trying to serve too many buyers or workflows at once.
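One way to keep the artifact short and honest is to store it as a small record and check which fields are still blank. The sketch below is only an illustration: the class name `ValidationArtifact`, the helper `missing_fields`, and the field names are hypothetical, chosen to mirror the eight rows in the table above. The sample values borrow from the accounting-firm example used later on this page.

```python
from dataclasses import dataclass, fields


@dataclass
class ValidationArtifact:
    """Hypothetical record with one text field per row of the artifact table."""
    first_buyer: str = ""
    painful_workflow: str = ""
    current_alternative: str = ""
    payment_or_switching_logic: str = ""
    first_distribution_path: str = ""
    mvp_boundary: str = ""
    founder_fit: str = ""
    stop_criteria: str = ""


def missing_fields(artifact: ValidationArtifact) -> list[str]:
    """Return the names of fields that are still empty or whitespace-only."""
    return [
        f.name
        for f in fields(artifact)
        if not getattr(artifact, f.name).strip()
    ]


# A draft with only two fields filled in so far.
draft = ValidationArtifact(
    first_buyer="Owners or operations managers at 2-15 person tax/accounting firms",
    painful_workflow="Staff chase missing client documents across email threads",
)

for name in missing_fields(draft):
    print(f"Still unanswered: {name}")
```

A blank field is only the crudest signal. A filled field can still hold a weak answer such as "small businesses," so the check supplements the table's weak-versus-stronger comparison rather than replacing it.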

For the fuller AI-assisted building process, use the guide to validating an app idea before building with AI.

Turn your vibe-coded startup idea into a structured validation artifact before you write the PRD or first build prompt.

What Evidence Is Strong Enough When the Build Is Cheap?

When building is cheap, the validation bar should be smaller and sharper, not imaginary. You do not need enterprise-grade proof before a weekend MVP, but you do need enough evidence to know which assumption the weekend MVP is testing.

AI-generated scores and market estimates belong in speculation or desk research unless they are backed by live data and buyer behavior. A 30-second scan can help you avoid obvious crowded-app mistakes, but it should not decide the whole startup. A PRD can organize the build, but a PRD cannot create buyer evidence.

| Evidence level | Signal | What it can support | What it cannot prove | Next action |
|---|---|---|---|---|
| Speculation | Founder intuition, AI brainstorm, broad trend, friend enthusiasm. | Idea capture and hypothesis generation. | Buyer urgency, payment, switching, or distribution. | Write the artifact and identify missing evidence. |
| Desk research | Competitor pages, reviews, Reddit threads, app-store scans, search results, public pricing. | Current alternatives, market language, possible wedges. | That your chosen buyer will pay or switch. | Choose one buyer/workflow and run buyer evidence tests. |
| Conversation evidence | 5-10 focused buyer conversations and last-time stories. | Pain, workflow, current behavior, language, objections. | Payment intent unless money or budget enters the conversation. | Narrow buyer, workflow, and MVP boundary. |
| Behavioral evidence | Current spend, manual work, repeated workaround, qualified waitlist, channel response. | Demand seriousness and channel plausibility. | Long-term retention or scalable acquisition. | Build or simulate the smallest testable slice. |
| Payment evidence | Paid pilot, preorder, deposit, fake-door checkout from qualified traffic, serious procurement step. | Pricing plausibility and urgency. | Product-market fit or repeatable growth. | Build the narrow MVP or paid manual test. |
| Usage evidence | Real users use the MVP repeatedly and ask for the same outcome. | Solution direction and retention hypotheses. | Business viability without pricing and channel evidence. | Continue learning, price, narrow, or stop. |

Y Combinator's startup advice still ties building to talking with users and learning from real customer feedback. Paul Graham's "Do Things That Don't Scale" makes the early manual-learning point even more directly: founders often need to recruit and learn from early users one by one.

The correct threshold depends on build cost, risk, buyer access, and reversibility. Payment evidence is strongest, but not every early idea needs payment before the first tiny test. If the idea is reversible and low-risk, conversation plus desk research may justify a narrow prototype. If it touches sensitive data, compliance, procurement, payments, or trust, the bar should be higher.
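The threshold logic in the paragraph above can be sketched as an ordered scale plus a simple rule. Everything here is an assumption made for illustration: the names `Evidence`, `minimum_evidence`, and `ready_for_narrow_prototype` are hypothetical, usage evidence is left out because it only exists after an MVP is in use, and the choice of behavioral evidence as the "higher bar" for sensitive or hard-to-reverse ideas is mine, since the text only says the bar should be higher.

```python
from enum import IntEnum


class Evidence(IntEnum):
    """Pre-build evidence levels, ordered weakest to strongest."""
    SPECULATION = 1
    DESK_RESEARCH = 2
    CONVERSATION = 3
    BEHAVIORAL = 4
    PAYMENT = 5


def minimum_evidence(reversible: bool, touches_sensitive_area: bool) -> Evidence:
    """Pick an illustrative minimum bar before a narrow prototype.

    Assumption: BEHAVIORAL stands in for "the bar should be higher" when the
    idea touches sensitive data, compliance, procurement, payments, or trust,
    or when the build is hard to reverse.
    """
    if touches_sensitive_area or not reversible:
        return Evidence.BEHAVIORAL
    return Evidence.CONVERSATION


def ready_for_narrow_prototype(
    current: Evidence, reversible: bool, touches_sensitive_area: bool
) -> bool:
    """True when the evidence in hand meets or exceeds the minimum bar."""
    return current >= minimum_evidence(reversible, touches_sensitive_area)


# A reversible, low-risk idea backed by buyer conversations.
print(ready_for_narrow_prototype(Evidence.CONVERSATION, True, False))

# The same evidence for an idea that handles sensitive client documents.
print(ready_for_narrow_prototype(Evidence.CONVERSATION, True, True))
```

The rule is deliberately coarse. In practice the threshold also depends on build cost and buyer access, which this sketch does not model.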

If the buyer is still vague, define the first buyer for a SaaS idea. If payment logic is weak, validate SaaS pricing before launch. If the status quo is unclear, study current alternatives before building an MVP.

PRDs, Prompts, Scores, and Market Scans Are Downstream

PRDs, prompts, AI scores, and market scans are useful. They are just not the first business decision.

A PRD can translate a narrowed startup thesis into requirements. A prompt can turn a scoped test into implementation. A quick scan can reveal competitors and obvious risks. A score can expose assumptions. None of them proves that a buyer is reachable, has an urgent problem, is willing to pay, and fits the founder's wedge.

| Artifact or tool | Best used for | Wrong use | Business-first question it depends on |
|---|---|---|---|
| Raw vibe-coded idea | Capturing the initial product instinct. | Treating excitement as evidence. | Who has the painful workflow and why now? |
| AI score or quick scan | Finding obvious risks, competitors, and research directions. | Outsourcing the go/no-go decision to a one-shot score. | Which evidence does the score rely on, and what is still unproven? |
| Market scan | Mapping categories, competitors, keywords, and caution signals. | Assuming market data proves your buyer will switch. | Which buyer segment is underserved and reachable? |
| PRD | Translating a narrowed business decision into product requirements. | Making an unvalidated idea look rigorous. | What validated assumption should this product spec test? |
| AI build prompt | Implementing or planning the smallest slice. | Asking AI to invent product strategy while writing code. | What buyer, workflow, non-goals, and stop criteria constrain the prompt? |
| Business validation artifact | Deciding whether and what to build. | Pretending uncertainty is gone. | What evidence supports build, narrow, or stop? |

This sequence matters because AI can make weak strategy look organized. It can draft interview questions, summarize public research, identify missing assumptions, or turn the artifact into a scoped PRD. But the founder owns the business decision.

The first prompt should inherit the business artifact. It should not replace it.
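To make "inherit" concrete, here is a minimal sketch of a helper that assembles the first prompt from artifact fields instead of from a blank page. The function name `build_first_prompt`, its parameters, and the prompt wording are all hypothetical. The only grounded parts are the inputs named in the table above (buyer, workflow, non-goals, stop criteria) and the idea that the AI should surface missing assumptions before it implements anything.

```python
def build_first_prompt(
    buyer: str,
    workflow: str,
    mvp_boundary: str,
    non_goals: list[str],
    stop_criteria: list[str],
) -> str:
    """Assemble a first AI prompt that is constrained by the artifact."""
    lines = [
        "Context from the business validation artifact:",
        f"- First buyer: {buyer}",
        f"- Painful workflow: {workflow}",
        f"- MVP boundary: {mvp_boundary}",
        "- Non-goals:",
        *[f"  - {item}" for item in non_goals],
        "- Stop criteria:",
        *[f"  - {item}" for item in stop_criteria],
        "",
        "Before writing any code, list the assumptions that are still",
        "unproven and propose a scoped test plan for the riskiest one.",
        "Do not implement anything listed under non-goals.",
    ]
    return "\n".join(lines)


prompt = build_first_prompt(
    buyer="Owners of 2-15 person tax/accounting firms",
    workflow="Chasing missing client documents during deadline weeks",
    mvp_boundary="Generate reminders and track status for one client list",
    non_goals=["Sensitive document storage", "Automatic client messaging"],
    stop_criteria=["Firms already rely on portals that solve follow-up"],
)
print(prompt)
```

The design choice is that the prompt has no free-form "build me an app" field at all. If an artifact field is empty, the gap shows up in the prompt itself rather than being papered over by generated screens.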

Example: From Vibe-Coded App Idea to Business Decision

Rough idea: an AI app that helps small accounting firms chase missing client documents before tax deadlines.

That sounds immediately buildable. AI could generate a portal, reminder engine, client dashboard, admin interface, upload flow, and automated message drafts. But those are implementation choices. The business question comes first: do small firms have painful enough document-chasing workflows, do they trust a new product in this area, and would they pay for a narrow version?

| Artifact field | Weak first answer | Stronger answer after one validation pass |
|---|---|---|
| First buyer | "Accountants." | Owners or operations managers at 2-15 person tax/accounting firms handling 80-400 recurring small-business clients before filing deadlines. |
| Painful workflow | "Clients forget documents." | Staff repeatedly chase bank statements, payroll reports, receipts, and entity docs across email threads during deadline weeks. |
| Current alternative | "Email." | Email templates, spreadsheets, client portals, practice-management tools, admin follow-up, and last-minute phone calls. |
| Payment/switching logic | "They save time." | Firms already pay for practice-management software or admin time; missed docs create overtime, delayed filings, and client frustration. |
| First distribution path | "LinkedIn." | Direct outreach to small firm owners in accounting communities plus interviews through two local accountant referrals. |
| MVP boundary | "AI client portal." | One workflow: upload a client list, generate missing-document reminders, track status, and draft follow-up messages for staff approval. |
| Founder fit | "I can build it with AI." | Founder has access to two accountants for workflow review but lacks tax-domain depth; needs closer SME validation before building compliance-sensitive features. |
| Stop criteria | None. | Stop or narrow if firms already rely on portals that solve follow-up, if document handling requires trust/compliance beyond founder capacity, or if owners will not pay for reminder automation. |
| Verdict | Build the app. | Narrow: pain seems plausible, but compliance/trust, channel access, and willingness to pay need one focused test before code. |

The stronger answer is still not proof of success. It is proof that the idea has become testable.

The next step should not be a full portal. It should be 5-8 focused accountant conversations, current workflow walkthroughs, and either a manual reminder-service test or a fake-door pricing test. The founder should learn whether firms already have this solved, whether staff would trust an external workflow, whether owners would pay, and whether the narrow reminder workflow is valuable without storing sensitive documents.

The first AI prompt should ask for missing assumptions and a scoped test plan before implementation. AI should not build the full portal, payment handling, sensitive document storage, multi-firm admin, integrations, or automatic client messaging without review.

Build, Narrow, or Stop Before You Vibe Code

The artifact exists to force a decision before momentum starts.

| Verdict | Use when | Next action |
|---|---|---|
| Build | Buyer, painful workflow, current alternative, payment/switching logic, first channel, MVP boundary, founder fit, and stop criteria are specific and supported by evidence. | Prompt AI to plan or build only the narrow slice that tests the core business assumption. |
| Narrow | Pain exists, but buyer, pricing, distribution, scope, trust, or founder fit is fuzzy. | Change one part of the artifact and run one focused validation test. |
| Stop | Buyer is broad, pain is weak, no one pays or switches, buyers are unreachable, alternatives are good enough, scope cannot be narrowed, or founder fit is poor. | Save the learning, compare against another idea, or restart from a different problem. |

"Stop" is not failure. It is how you avoid turning weak assumptions into maintenance work. "Narrow" should be the most common early outcome because most ideas contain some signal and some confusion. "Build" means build the test, not the whole imagined startup.
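The verdict table can be read as a small decision rule. The sketch below is one simplified reading, not a scoring method: the names `Rating`, `ARTIFACT_FIELDS`, and `verdict` are hypothetical, and the three-way rating of each field (supported, fuzzy, failed) is an assumption layered on top of the table. The sample ratings are illustrative guesses for the accounting-firm example.

```python
from enum import Enum


class Rating(Enum):
    SUPPORTED = "supported"  # specific and backed by evidence
    FUZZY = "fuzzy"          # some signal, still unclear
    FAILED = "failed"        # evidence points against it


ARTIFACT_FIELDS = (
    "first_buyer",
    "painful_workflow",
    "current_alternative",
    "payment_or_switching_logic",
    "first_distribution_path",
    "mvp_boundary",
    "founder_fit",
    "stop_criteria",
)


def verdict(ratings: dict[str, Rating]) -> str:
    """Return 'build', 'narrow', or 'stop' from per-field ratings.

    Assumed rule: any failed field means stop, all fields supported
    means build, and anything in between means narrow.
    """
    missing = [name for name in ARTIFACT_FIELDS if name not in ratings]
    if missing:
        raise ValueError(f"Unrated artifact fields: {missing}")
    if any(ratings[name] is Rating.FAILED for name in ARTIFACT_FIELDS):
        return "stop"
    if all(ratings[name] is Rating.SUPPORTED for name in ARTIFACT_FIELDS):
        return "build"
    return "narrow"


# Illustrative ratings: pain looks plausible, but payment, channel,
# and founder fit still need a focused test.
accounting_idea = {name: Rating.SUPPORTED for name in ARTIFACT_FIELDS}
accounting_idea["payment_or_switching_logic"] = Rating.FUZZY
accounting_idea["first_distribution_path"] = Rating.FUZZY
accounting_idea["founder_fit"] = Rating.FUZZY

print(verdict(accounting_idea))
```

Refusing to return a verdict when a field is unrated is deliberate: an unanswered field should send the founder back to the artifact, not default to "build."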

Idea comparison matters when a vibe-coding founder has many plausible ideas and limited attention. The question is not whether one idea can become a weekend app. The question is which idea deserves the next serious learning cycle.

If your evidence is mixed, use the guide on when to kill, narrow, or keep a startup idea.


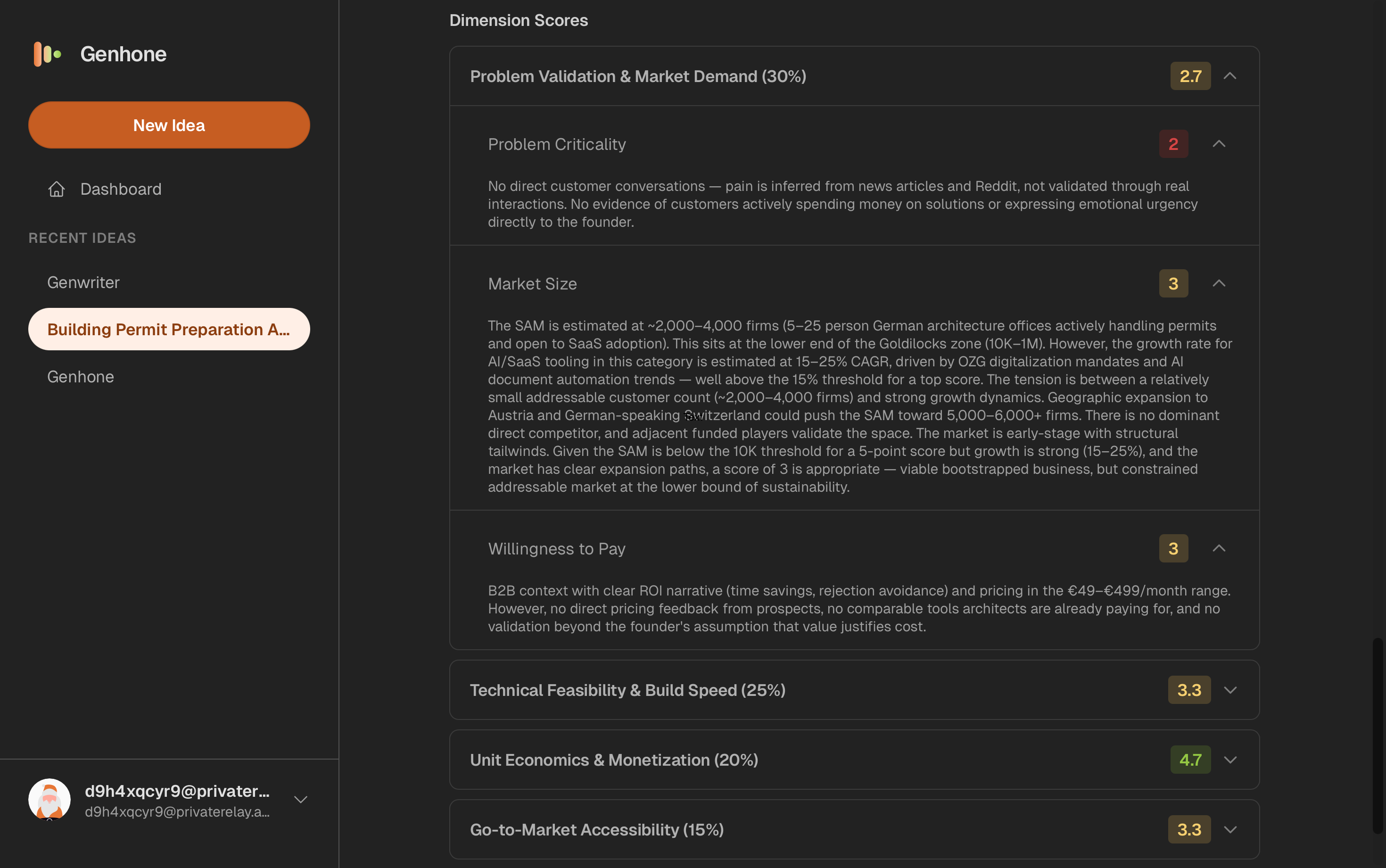

How Genhone Fits Before the PRD or First AI Build Prompt

Genhone helps solo founders turn rough SaaS ideas into structured refinement artifacts before coding. It is useful when your business-first artifact contains yellow or red answers: broad buyer, fuzzy pain, unclear pricing, weak distribution, overloaded MVP scope, or founder-fit uncertainty.

The structured idea validation workflow guides founders through 12 enforced sections, including customer, problem, solution mechanics, value proposition, business model, go-to-market, scope, metrics, and solo-founder execution. Genhone then evaluates ideas across 18 criteria, including automated criteria and founder-fit checks. Scores and reasoning are saved as persistent artifacts, so ideas can be compared side by side instead of lost in separate chat threads.

That does not prove a startup will work. It gives the founder a clearer artifact, stronger questions, and a better basis for deciding whether to build, narrow, or stop.

If you want a lighter worksheet, use the SaaS idea validation checklist. If you want deeper guided refinement artifacts, use Genhone before the PRD or first AI build prompt.

FAQ

What is vibe coding startup validation?

Vibe coding startup validation is the process of checking the buyer, pain, alternatives, pricing, distribution, MVP boundary, founder fit, and stop criteria before using AI to build the startup. It produces a build, narrow, or stop decision.

Should I validate before writing a PRD for a vibe-coded app?

Yes. Write the business validation artifact first, then use it to constrain the PRD. A PRD specifies what to build; it does not prove the app deserves to become a startup.

Is building a fast MVP enough validation?

Not by itself. A fast MVP can test a specific assumption, but only if the buyer, workflow, channel, and evidence standard are clear before the build.

Can ChatGPT or an AI score validate my startup idea?

AI can structure assumptions, summarize research, identify competitors, draft interview questions, and expose gaps. It cannot prove buyer urgency, willingness to pay, or distribution access without real-world evidence.

How much validation is enough before vibe coding a startup?

Enough to define one buyer, one repeated painful workflow, current alternatives, a plausible payment or switching reason, one first channel, one narrow MVP boundary, and written stop criteria. For many early ideas, 5-10 focused buyer conversations plus desk research is enough to choose the next test.

What if the market already has many similar AI apps?

Competitors can prove demand, but crowding raises the bar for differentiation. Look for a narrower buyer, underserved workflow, better distribution path, stronger founder fit, or a reason to stop.

What should my first AI coding prompt include after validation?

Include the buyer, painful workflow, current alternative, evidence level, MVP boundary, non-goals, first channel, and stop criteria. Ask the AI to flag missing assumptions before implementation.